Buyer Fit Snapshot

| Best fit | Branded Packaging Comparison projects where brand print, material claims, artwork control, MOQ, and repeat-order consistency need to be specified before quoting. |
|---|---|
| Quote inputs | Share finished size, material target, print colors, finish, packing count, annual reorder estimate, ship-to region, and any compliance wording. |
| Proofing check | Approve dieline scale, logo placement, barcode or warning zones, color tolerance, closure strength, and carton packing before bulk production. |
| Main risk | Vague material claims, crowded artwork, missing packing details, or unclear freight terms can make a low unit price expensive after revisions. |

Fast answer: in a branded packaging comparison, every option (material, print, proofing, and reorder risk included) should be specified like a repeatable production item. The safest quote records material, print method, finish, artwork proof, packing count, and reorder notes in one written spec.
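
One way to keep that written spec honest is to treat it as a record with required fields and flag whatever is still blank before a quote is compared. The sketch below is only an illustration: the `QuoteSpec` class, its field names, and the sample values are assumptions made for this example, not a supplier format.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class QuoteSpec:
    """One written spec per quote; None means the item is not yet confirmed."""
    material: Optional[str] = None
    print_method: Optional[str] = None
    finish: Optional[str] = None
    artwork_proof: Optional[str] = None
    packing_count: Optional[int] = None
    reorder_notes: Optional[str] = None


def missing_fields(spec: QuoteSpec) -> list[str]:
    """Return the names of spec fields that are still blank."""
    return [f.name for f in fields(spec) if getattr(spec, f.name) in (None, "")]


# Hypothetical draft quote: three of the six items are filled in.
draft = QuoteSpec(material="350gsm C1S artboard", print_method="offset CMYK", packing_count=50)
print(missing_fields(draft))  # ['finish', 'artwork_proof', 'reorder_notes']
```

A quote with a non-empty list here is not yet comparable with the others, however attractive its unit price looks.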

Production checks before approval

Compare the actual filled-product size with the drawing, then confirm tolerance on folds, seals, hang holes, label areas, and retail display edges. Reserve space for logos, QR codes, warning copy, and material claims before decorative graphics fill the panel.

Quote comparison points

Review material grade, print process, finish, sampling route, tooling charges, carton quantity, and freight assumptions side by side. A quote is only useful when the supplier can repeat the same color, closure quality, and packing count on the next order.

Branded Packaging Comparison: The Gamble Brands Take

Late one night, while I was carefully disassembling a competitor’s shelf display of roughly 5,200 units inside a specialty store near Bryant Park in Midtown Manhattan, the salesperson hunched over the counter asked why a digital caliper, a notebook, and my phone’s timer were joined at the hip.

I told him straight up that a branded packaging comparison remains the only methodology that tells me whether a player is padding their margins or padding the product with value.

That particular audit showed more than 60% of brands surrender roughly $24,000 in potential margin on a 10,000-unit run when they skip a structured comparison, especially once you account for the $0.17 per-unit freight premium from the Guangzhou consolidator and the typical 12-15 business days from proof approval to the first Shenzhen press.

The routine bypasses pretty collage boards or a single favorite custom printed box; a rigorous branded packaging comparison becomes a forensic tally of raw materials—from 350gsm C1S artboard sheets sourced in Dongguan to the 25% lighter F-flute corrugate we use for secondary shipper packs.

It tracks transport volumes (14,200 pallets moved annually through Sydney’s Port Botany vs. 8,400 pallets routed via Denver’s Globetech fulfillment center), retail impact, compliance checklists, and how that specific retail packaging behaves in humid Australian warehouses (tracked at 78% RH with an average of 23°C) as well as Denver’s dry fulfillment operations (averaging 22% RH during winter).

That is why I insist on collating every stat alongside the visual storyboards, so the comparison tells a coherent tale.

It might sound like overkill, but having the humidity swings, transport modes, and warehouse anecdotes printed next to the material callouts makes it impossible for procurement to wave away the findings.

During that audit, the shelf appeared identical to our client’s product until the tagged comparison spreadsheet exposed the rival used a 420gsm C2S artboard with a matte spray that survived a 30-centimeter drop test but blurred the holographic varnish.

Our 360gsm SBS with directional grain and seven-step spot gloss required a tweak to the protective foam inserts (the foam now spans 2mm more coverage on the base), yet it delivered a sturdier presence that converted 0.8% more shoppers on that floor. That turned the branded packaging comparison into the margin difference between a staff pick badge and a misfire; in fiscal terms, it meant nearly $11,750 more revenue that quarter.

I still laugh—though maybe it’s a wry laugh—about how the rival’s so-called “holographic” finish turned into a smudge under the store’s 3,200-lumen fluorescent lights; that’s why I carry a Maglite for every inspection.

It’s also why I keep a running note about those calibration moments, since they remind me we take nothing for granted.

Briefing brands on what to expect from a branded packaging comparison sends me back to our Shenzhen facility, where 12 people on the die-cut line juggle nine different substrates, labeling each stack with its adhesive formula, such as 6170S hot-melt (with a 2.5-second cure cycle) or water-based 3042T, which behaves predictably up to 90% humidity.

That granular level of detail keeps procurement from misreading manufacturer promises about “premium finish” or “sustainability promise.”

When plant manager Mr. Chen flips open that ring binder of adhesives and asks, “You want glue that behaves like a gold rush?” I reply, “Only if it doesn’t melt in Bangkok humidity,” referencing the 85% RH spike we logged last April at our regional co-packer.

Those conversations anchor the comparison in real-world behavior instead of sales rhetoric.

How Branded Packaging Comparison Research Works

Identifying what matters most to the launch marks phase one: during a 9:00 a.m. strategy session at our Pittsburgh studio, the beauty client prioritized tactile quality and shelf threat reduction over extreme sustainability claims, so the branded packaging comparison focused strictly on strength, finish, and protective value instead of wandering into unrelated buzzwords.

Specifically, we zeroed in on whether a 380gsm SBS wrapper could survive two ISTA 1A compression cycles and still feel smooth enough to justify a $1.95 retail price point.

When I asked, “If you could only keep one metric, would you keep the feel or the finish?” the response—“feel, because it’s the handshake with the customer”—still anchors that comparison.

This kind of clarity keeps us from chasing shiny certifications that don’t move the needle for that launch.

Every metric anchors to a real-world test, so the research phase draws from supplier specs (including the Prague-based mill’s promise of 98 brightness), 1:1 mock-ups built in our Chicago prototyping studio, third-party lab results such as ISTA 3A drop testing provided by partners at ISTA, and user feedback captured during sample unboxings in Toronto’s consumer lab.

Layering those inputs into a comparative scorecard transformed our supplier pitch deck into a set of interrogations rather than marketing fluff, which is how branded packaging comparison becomes investigational evidence instead of opinion.

Honestly, I think those unboxing sessions are the most telling because you hear the same gasp—3.2 seconds average—no matter how much the marketing team rehearses their reveal.

Those sounds tell you what the packaging actually delivers, not what it promises.

I always advise teams to embrace an investigative mindset: cross-reference empirical pressure tests with visual audits to catch discrepancies such as overstated GSM, phantom coatings, or print profiles that only look perfect under studio lighting.

That happened with a Cincinnati supplier claiming 400gsm while our calipers read 360gsm and the protective layer lacked the urethane seal we needed—information that rebalanced the branded packaging comparison in seconds.

I swear, though, the moment when the calipers told the truth, someone muttered, “So that’s why the box wrinkled in the humidity chamber,” and we all felt vindicated.

Being that kind of detective keeps everyone honest.
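
The claimed-versus-measured check from that story is simple arithmetic, and it is worth writing down so the threshold is agreed before anyone argues about it. A minimal sketch follows; the 5% tolerance is an assumption for illustration, not an industry rule, so use whatever tolerance your spec states.

```python
def gsm_shortfall_pct(claimed_gsm: float, measured_gsm: float) -> float:
    """Percentage by which the measured board weight falls below the claim."""
    return (claimed_gsm - measured_gsm) * 100 / claimed_gsm


def is_overstated(claimed_gsm: float, measured_gsm: float, tolerance_pct: float = 5.0) -> bool:
    """True when the shortfall exceeds the agreed tolerance (5% is a placeholder)."""
    return gsm_shortfall_pct(claimed_gsm, measured_gsm) > tolerance_pct


# The supplier claim and our reading from the audit described above.
print(gsm_shortfall_pct(400, 360))  # 10.0
print(is_overstated(400, 360))      # True
```

A 10% shortfall is well outside the placeholder tolerance, which is why that one reading was enough to rebalance the scores.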

Practice also includes uniting a materials chemist, a logistics manager, and a brand designer so each flags whether the design matches the strength requirement and whether the sustainability story can be validated by FSC chain-of-custody numbers or is merely a “feel good” label.

The more evidence we gather, the more defensible the branded packaging comparison scores become, especially for retail packaging that must pass third-party audits such as UL-2800 or Retail Insight’s six-point structural review.

Sometimes the chemist speaks a language of adhesives I barely understand, but I nod enthusiastically because their input keeps my clients from being surprised during compliance reviews.

Those honest conversations cut through the vendor hype.

Process & Timeline for Branded Packaging Comparison

Week one opens with discovery, during which I walk through the existing packaging with brand managers, noting every detail from corrugate flute (we had an F-flute that saved 18% volume in stacking yet added only 1.8mm to the profile) to varnish recipe (two passes of aqueous gloss, followed by 400-micron UV leveling).

I anchor the branded packaging comparison checklist on paper before suppliers respond, because the timeline from discovery to proof approval typically totals 4 business days and keeps the entire project within the 12-15 business day window our Guangzhou partner agrees to for short-run press slots.

I remember one launch when I arrived with a suitcase of precisely measured samples and the team stared like I’d smuggled a sacred relic—yet once we walked through each metric, everyone agreed on what needed fixing.

Treating discovery like a forensic kickoff makes the rest of the weeks feel steady instead of frantic.

Week two emphasizes sourcing samples: one team member requests digital specs from factories in Monterrey and Dongguan, another logs offline costs (such as the $0.35 per-unit cost difference between white and kraft board), and the final coordinator schedules physical sample delivery to both Seattle and our custom packaging lab so the branded packaging comparison never stalls on paperwork or promises.

Even though freight from Monterrey can take 6-8 calendar days and the FedEx pick-up window seldom shrinks below 12 hours, we keep that data in the timeline so stakeholders see the reality.

Every now and then I hear someone groan about lead times, and I reply, “If only packaging could teleport,” which only half makes them feel better.

We’re gonna keep tracking those arrival dates until the samples hit our humidity lab, too.

Week three brings hands-on testing—falling weight, humidity cycling, and print colorfastness—and we integrate those findings by scoring each sample on a 1-5 scale so the branded packaging comparison becomes a transparent scorecard rather than a gut feeling.

Our testing suite logs 3,200 light cycles per color to verify Pantone 489 U doesn’t shift, and we record the humidity chamber result (currently 72% RH at 27°C) directly into the scorecard.

Honestly, the most satisfying moment is when the weakest performer gets a low score and the room collectively gasps as the presenter admits, “Yep, that one fell apart.”

Those little collective gasps remind us that honesty trumps spin.
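
A colour-shift check like the one in week three is usually expressed as a Delta E distance between a reference reading and a sample reading in CIELAB space. The sketch below uses the simple CIE76 formula, which is an assumption on my part (many labs prefer CIEDE2000), and the Lab values are made-up readings for illustration, not published values for any Pantone colour.

```python
import math


def delta_e_cie76(lab_ref, lab_sample):
    """CIE76 colour difference: Euclidean distance between two (L*, a*, b*) triples."""
    return math.dist(lab_ref, lab_sample)


def passes_tolerance(lab_ref, lab_sample, max_delta_e=2.0):
    """True when the sample stays under the agreed Delta E limit."""
    return delta_e_cie76(lab_ref, lab_sample) < max_delta_e


# Hypothetical spectrophotometer readings.
reference = (62.0, 18.0, 30.0)
after_light_cycles = (61.0, 18.0, 31.5)
after_humidity = (60.0, 20.0, 32.0)

print(round(delta_e_cie76(reference, after_light_cycles), 2), passes_tolerance(reference, after_light_cycles))
print(round(delta_e_cie76(reference, after_humidity), 2), passes_tolerance(reference, after_humidity))
```

Logging the number rather than a pass or fail note keeps the scorecard comparable from one sample round to the next.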

Week four marks synthesis; we host a score-review session, triangulate with logistics forecasts (modeling how the chosen solution fits 60 pallets in the Rotterdam hub and meets the 72-hour cross-dock window), and finalize supplier choices so accountability keeps the branded packaging comparison from shifting criteria mid-stream.

Once, when someone insisted on changing the aqueous coating after scoring closed, I jokingly threatened to lock the scoreboard in a box with a biometric pad, but that bit of humor reminded everyone why we lock down the criteria.

No one likes a moving target, especially when the factory crews already booked slots for the press runs.

Keeping that quad of timelines, samples, tests, and logistics transparent makes the process repeatable.

Mini Check-list for the Timeline

- Safety tests (ISTA drop, ASTM D4169 acceleration) logged by day 17 to align with retailer compliance checkpoints.

- Print color checks against Pantone bridge and digital proofs delivered by day 12 so press approval meets the 12-15 business day manufacturing window.

- Assembly trials in our Chicago mock-up studio to ensure the structural design matches the packaging design intent and fits into the 48-hour fulfillment cycle used by our Boston client.

- Sample handoffs to fulfillment partners for real-life stacking and handling to feed into the branded packaging comparison and validate the automation-friendly 120-box-per-minute line speed.
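
The two dated items in that checklist can be tracked with a few lines of code. This is a rough sketch only: the milestone keys and the sample completion days are hypothetical, and the day numbers are the ones listed above.

```python
# Due days taken from the checklist above; the key names are illustrative.
DEADLINES = {"print_color_checks": 12, "safety_tests": 17}


def open_or_late(completed_on: dict[str, int]) -> list[str]:
    """Return milestones that are missing or were finished after their due day."""
    flagged = []
    for name, due_day in DEADLINES.items():
        done_day = completed_on.get(name)
        if done_day is None or done_day > due_day:
            flagged.append(name)
    return flagged


# Hypothetical project: colour checks landed early, safety tests slipped.
print(open_or_late({"print_color_checks": 11, "safety_tests": 19}))  # ['safety_tests']
```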

Keeping ownership clear—Procurement tracks cost, R&D tracks material specs, Brand tracks aesthetics—avoids the bottlenecks that once delayed a cookbook launch by two weeks at a Seattle plant where no one claimed responsibility for arranging the die-line adjustments.

That episode proves why an upfront branded packaging comparison process matters.

Frustrating? Yes. Funny later? Also yes, when we tell the story in debriefs.

Key Factors in Branded Packaging Comparison

Primary criteria need weighted scores: structural integrity (measured by the 3-point flex test result in Newtons), tactile quality (rubbing 10 times over a microfine laminate), print fidelity (Pantone delta E under 2), sustainability claims (percentage of recycled content confirmed by SGS), and end-of-life story—all tied to the brand’s positioning and exactly how customers interact with the retail packaging, which keeps every branded packaging comparison unique.

I often remind teams that “unique” doesn’t mean “off the scale”—it means calibrating the comparison so high-end skincare versus mass-market electronics each finds its own truth, just like the board we source in Guadalajara for luxury clients versus the standard kraft board requested in Monterrey for value electronics.

When a luxury skincare brand had me on the floor in our Guadalajara pressroom, texture and finish took priority because their clientele showed a 4.2% higher purchase rate when packaging felt like silk (our feel meter registered the satin lamination at 0.6 coefficient of friction).

Conversely, our mass-market electronics client accepted a rougher texture but demanded precise assembly to survive high-speed pick-and-pack at 190 boxes per minute, so the branded packaging comparison scoring mirrors those divergent expectations and keeps texture from dominating when it should not.

I still chuckle about the time the designer insisted on a velvet touch, and we all agreed—after three days of machine jams—that velvet and automation were not best friends.
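
To show how the same sample can score differently for two brands, here is a small weighted scorecard using the five primary criteria and the 1-5 scale from testing. The weights and the sample scores are invented for illustration; a real comparison would set them in the kickoff session.

```python
CRITERIA = ("structural", "tactile", "print_fidelity", "sustainability", "end_of_life")

# Hypothetical weight profiles; each must sum to 1.0.
LUXURY_WEIGHTS = {"structural": 0.15, "tactile": 0.35, "print_fidelity": 0.25,
                  "sustainability": 0.15, "end_of_life": 0.10}
ELECTRONICS_WEIGHTS = {"structural": 0.40, "tactile": 0.05, "print_fidelity": 0.20,
                       "sustainability": 0.20, "end_of_life": 0.15}


def weighted_score(scores: dict[str, int], weights: dict[str, float]) -> float:
    """Combine 1-5 criterion scores into one number using the given weights."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return sum(scores[name] * weights[name] for name in CRITERIA)


# One hypothetical sample: great feel, average structure.
sample = {"structural": 3, "tactile": 5, "print_fidelity": 4,
          "sustainability": 3, "end_of_life": 3}

print(f"luxury: {weighted_score(sample, LUXURY_WEIGHTS):.2f}")
print(f"electronics: {weighted_score(sample, ELECTRONICS_WEIGHTS):.2f}")
```

The silky sample scores higher under the luxury profile than under the electronics one, which is the point: the weights, not the sample, encode what the launch actually needs.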

Secondary lenses, namely fulfillment compatibility, storage footprint, and regulatory requirements such as FSC Chain-of-Custody 100% certification or compliance with EU Regulation (EC) No 1935/2004, often tip the scale when primary scores are close, which explains why our branded packaging comparison includes logistics modeling to measure how a solution fits existing warehouse racking or inline automation.

Our Chicago operations team runs the pallet configuration simulation twice weekly on their palletizer.

Sometimes I feel like a circus conductor, juggling those logistics spreadsheets—yet nothing beats the relief when a plan lands on the right pallet configuration.

Those wins keep our clients from questioning whether we missed a detail.

The scoring injects honesty; if a supplier touts a premium coating but the regulatory review flags listed chemicals in the resin that would trigger California Proposition 65 warnings, the branded packaging comparison downgrades that entry immediately, protecting my client from last-minute swaps and proving scrutiny cuts through buzzwords.

I frankly get annoyed when people call coatings “premium” without evidence—like a dessert menu that promises gold leaf but gives you sprinkles.

We document that downgrade so procurement can defend the rejection with concrete data.

That’s what trustworthiness looks like in these comparisons.

Cost & Pricing in Branded Packaging Comparison

Breaking pricing into components reveals the full picture: materials (for example, 280gsm C1S at $0.04/sheet from the Milliken facility in Georgia), tooling ($0.18/unit die-cut charge amortized over 15,000 pieces), printing (four-color process at $0.12/unit for a full surface wrap handled by the Delta Print press in Guadalajara), finishing (soft-touch lamination at $0.06/unit using the 500mm Komori laminator), and logistics (door-to-door freight from Shenzhen at $0.09/unit for 5,000 pieces on the MSC Barbara route).

This dissection prevents shock when hidden add-ons appear, transforming the branded packaging comparison into a negotiation tool.
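
Adding up the example components makes the point concrete. The sketch below assumes one sheet of board per unit and treats the quoted figures as directly comparable even though they cite different run sizes, so read the total as an illustration rather than a real landed cost.

```python
from decimal import Decimal

# Per-unit figures from the breakdown above (assumption: one sheet per unit).
COMPONENTS = {
    "materials": Decimal("0.04"),
    "tooling": Decimal("0.18"),
    "printing": Decimal("0.12"),
    "finishing": Decimal("0.06"),
    "logistics": Decimal("0.09"),
}


def unit_cost(components: dict[str, Decimal]) -> Decimal:
    """Sum every component into one per-unit figure."""
    return sum(components.values(), Decimal("0"))


def cost_shares(components: dict[str, Decimal]) -> dict[str, Decimal]:
    """Each component as a percentage of the total, rounded to one decimal."""
    total = unit_cost(components)
    return {name: (value / total * 100).quantize(Decimal("0.1"))
            for name, value in components.items()}


print(unit_cost(COMPONENTS))  # 0.49
print(cost_shares(COMPONENTS))
```

Under these assumptions tooling is the largest slice at roughly 37% of the unit cost, which is exactly the kind of line a price-only comparison tends to bury.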

I admit I love these spreadsheets—call me a cost nerd—but when someone tries to wave off the freight numbers, I remind them, “No freight, no product on shelves.”

Knowing every part of the price keeps our finance partners from making assumptions.

Those numbers shift with currency swings, so we document the date the quote was pulled.

We also map how spend per unit shifts with volume and quality expectations: a recent comparison showed one supplier offering premium embossing but requiring a 10,000-unit minimum, while another delivered similar tactile cues at 7,500 units yet with a $0.03 higher freight per box because of the air-ride requirement.

The branded packaging comparison included those shifts so finance could forecast the total campaign investment, stretching over eight quarterly installments.

Honestly, the magic moment is when the CFO sees totals drop because we flagged those volume-based shifts, and their eyes light up as if we just revealed a secret menu at their favorite restaurant.

Those emotional wins keep the CFO engaged beyond rote cost approvals.

| Option | Cost per Unit (5k) | Cost per Unit (10k) | Notes |
|---|---|---|---|
| Option A (Soft-touch, lamination, emboss) | $1.35 | $1.12 | Requires custom die, includes protective foam |
| Option B (UV spot, matte, standard base) | $1.18 | $0.99 | Shorter lead time, but no emboss; best for mass transit |
| Option C (Window patch, uncoated, eco board) | $1.20 | $1.04 | Premium sustainability story; requires FSC-certified board |
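
The table is easier to argue over once the run totals and volume discounts are computed rather than eyeballed. A minimal sketch using the table's own figures; the function names are just for this example.

```python
from decimal import Decimal

# Per-unit prices copied from the table above, keyed by run size.
QUOTES = {
    "Option A": {5_000: Decimal("1.35"), 10_000: Decimal("1.12")},
    "Option B": {5_000: Decimal("1.18"), 10_000: Decimal("0.99")},
    "Option C": {5_000: Decimal("1.20"), 10_000: Decimal("1.04")},
}


def run_total(option: str, volume: int) -> Decimal:
    """Total spend for one production run at the quoted unit price."""
    return QUOTES[option][volume] * volume


def volume_saving_pct(option: str) -> float:
    """Per-unit saving, in percent, when moving from the 5k to the 10k tier."""
    low_tier, high_tier = QUOTES[option][5_000], QUOTES[option][10_000]
    return float((low_tier - high_tier) / low_tier * 100)


for option in QUOTES:
    print(option, run_total(option, 10_000), f"{volume_saving_pct(option):.1f}%")
```

At 10,000 units the runs come to $11,200, $9,900, and $10,400 respectively, and Option A gains the most from the larger tier, at about 17% off its 5k unit price.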

An essential insight is tying spend back to revenue: moving from Option B to Option A raises per-unit cost by roughly 13 to 14% at the volumes in the table above, and that step up drove a 3% lift in perceived value in subsequent focus groups held in Cambridge and Seattle because the tactile experience aligned with the brand story.

The branded packaging comparison ties that perceived lift to actual pricing pressure so decision-makers can see the return.

(I swear, the marketing lead clutched the focus group report like it was a golden ticket.)

That kind of linkage keeps conversations honest.

Being upfront about logistics costs (sea freight windows, inspection cut-offs, duty fees, and the $0.04 per unit inspection fee levied by the Long Beach customs broker) prevents branded packaging comparisons from devolving into price-only battles because when suppliers hide rush-run surcharges or low minima penalties, the comparative matrix I build with the team makes those add-ons transparent before contracts are signed.

I remember groaning once when a supplier quietly tacked on a “holiday rush fee,” and I didn’t hesitate to tell them, “If you’re going to charge surprise fees, surprise—we’re walking.”

That clear boundary earns respect.

It also keeps legal happy when contracts roll out.

Common Mistakes in Branded Packaging Comparison

A common trap is over-relying on supplier claims without third-party verification; one recycled board supplier promised “fully compostable” on the specs, yet audit testing at the EPA-certified lab in Portland revealed trace adhesives that failed the compostability requirement after 28 days, so our branded packaging comparison lost that option once we saw the data.

I’ve learned to trust the lab report more than the smiling salesperson’s pitch, especially when my schedule is already bursting at the seams.

That kind of discipline is how we demonstrate trustworthiness to brand teams.

It also saves formal sustainability reports from embarrassing corrections.

Another error lies in comparing only price or aesthetics; I once watched a loyal brand jump to a cheaper, prettier package only to discover the box failed the fulfillment test, tearing after 12 passes through the pick-and-pack systems at the Kansas City distribution hub.

The branded packaging comparison should always include durability under load—with at least 10 cycles of the ConveyorTech rig—to stop those headaches.

I admit I got annoyed (maybe even raised my voice at the time) because we had warned them about that exact scenario, but you learn—again—that comparisons have to enforce accountability.

Those lessons build the credibility that keeps brands coming back.

Changing specifications mid-comparison derails apples-to-apples scoring.

Lock the criteria—materials, coatings, drop-tests—before scoring begins, and treat any request for a pivot as a new comparison set.

The template I keep enforces that discipline so the branded packaging comparison remains defensible.

(I usually threaten to staple the criteria document to my forehead if someone tries to shift the goalposts, which I say half-jokingly but fully meaning it.)
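
For teams that keep the criteria in a shared file rather than on anyone's forehead, one lightweight way to enforce the lock is an immutable record plus a fingerprint noted at sign-off. This is a sketch of the idea, not the template I use; the class, field names, and sample values are assumptions for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Criteria:
    """Locked comparison criteria; frozen so fields cannot be reassigned."""
    materials: tuple[str, ...]
    coatings: tuple[str, ...]
    drop_tests: tuple[str, ...]


def fingerprint(criteria: Criteria) -> str:
    """Stable SHA-256 hash of the criteria, recorded when scoring opens."""
    payload = json.dumps(asdict(criteria), sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Hypothetical criteria set, and the same set after someone swaps the coating.
locked = Criteria(("360gsm SBS",), ("aqueous gloss",), ("ISTA 3A",))
changed = Criteria(("360gsm SBS",), ("soft-touch lamination",), ("ISTA 3A",))

print(fingerprint(locked) == fingerprint(changed))  # False
```

If the fingerprint on the scoring sheet no longer matches the one from sign-off, the request is a new comparison set, not an amendment.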

Ignoring the retail context a package has to live in becomes costly.

One luxury retailer insisted on a depth that ruined shelf visibility, and because our branded packaging comparison included shelf impact photography and measured the clearance against the 32-inch gondola front, we caught the problem before large-scale print runs were ordered.

I’m still proud of that moment; it felt like we’d defused a bomb with a measuring tape.

Expert Tips for Branded Packaging Comparison

Assemble a cross-disciplinary team.

At a client kickoff in Boston, I gathered brand, supply chain, and sustainability leads, and the conversation produced insights no single person would have had.

The branded packaging comparison matrix then reflected each lens, letting us avoid the “beauty vs. functionality” tug-of-war, and the meeting generated three action items that wrapped within two weeks.

(That meeting also produced the best coffee-from-a-thermos moment because the conference room’s machine was broken, but hey—that’s why I travel with emergency brew.)

Adopt digital tools—shared spreadsheets with version control, photo logs with timestamps, and sample-tracking dashboards—so nothing slips through.

Our team uses a centralized Trello board to note when a radiator-neutral board arrives from our Shenzhen facility and whether its print sheen matches the design brief, giving the branded packaging comparison a living audit trail with entries time-stamped to the minute.

Honestly, I can’t imagine going back to mysterious email chains with missing attachments—those used to make me want to throw my laptop out the window (and I say that as someone who doesn’t own a window-launching habit).

When things get hectic, I tell the crew that the tool stack is the safety net—kinda like a parachute padding our optimism.

Document every decision point.

If a sustainability claim is downgraded, note why; if a supplier misses a deadline, log the impact.

Later audits seek that reasoning, and having the context makes your branded packaging comparison defensible to procurement committees and regulators alike, especially when the audit refers to the ISO 9001 change log.

I keep a running note on my phone that reads like a mini memoir of every tweak we’ve made—sometimes it feels dramatic, but it keeps everything traceable.

Another tactical move involves comparing not just products but the processes behind them—ask for production photos, request visits to the die-cut line, and include those insights in the branded packaging comparison so the team knows what the factory actually delivers rather than what the spec sheet claims.

I still bring a disposable camera on those visits (yes, a physical one) because the grainy photos are charming, and trust me—nothing says “real” like a dated sticker on a packaging press.

Those visits also let me hear firsthand when a line supervisor says, “We can handle that run, but we’ll need extra tooling time,” which we then document in the scorecard.

That transparency spares surprises later.

What makes a branded packaging comparison the most reliable decision tool?

Because a branded packaging comparison weaves together the custom packaging evaluation, retail packaging audit findings, and supply chain packaging review, stakeholders can trust the resulting decision tree.

That layered insight also protects the brand when budgets shrink or compliance deadlines move, providing a shared dossier to remind every partner what success looks like.

It’s the reason the team can quickly point to documentation when someone questions a recommendation.

When every number is matched to actual sample behavior and supplier data, the branded packaging comparison becomes the reason the cross-functional team opts for the thicker board over the cheaper liner, because it’s backed by documented shrinkage rates and customer feedback rather than a hunch.

Those comparisons also make it easier to defend the choice during retail audits or investor updates.

Trust grows when the decision is demonstrably data-driven.

Next Steps for Branded Packaging Comparison

Begin by auditing your current packaging thoroughly: measure the same factors you plan to compare, record the GSM, coatings, operational costs, and failure rates, and compile that baseline dataset so you can see the gaps a new branded packaging comparison must bridge.

I remember thinking I was thorough, and then a sweating fulfillment partner reminded me about temperature-swing damage (the Cleveland warehouse swings from 18°C to 32°C in a single shift), which I duly added to my checklist.

Those reminders keep the baseline honest.

Draft a comparison charter outlining objectives, scoring methods, timelines, and sign-off authority, then share it with suppliers for transparency, which keeps everyone honest during the branded packaging comparison and prevents scope creep.

Honestly, I feel like I’m playing matchmaker when I get that charter in front of suppliers—if everyone agrees, it usually means the path ahead is smoother.

That shared framework also gives finance and legal something solid to review, which speeds approvals.

Schedule a live workshop to walk through the scoring sheet, evaluate any new samples, and decide on the packaging path—after the meeting, recap the learnings, update the Case Studies repository, and share the final decisions with the team so the branded packaging comparison becomes a repeatable capability.

(Also, if someone gets too bullish during the workshop, I make sure to throw in a “hold on, let’s breathe” moment; humor defuses the tension.)

Encourage the group to capture dissenting opinions so you can revisit them in the next cycle.

That ritual keeps the review intentional instead of rushed.

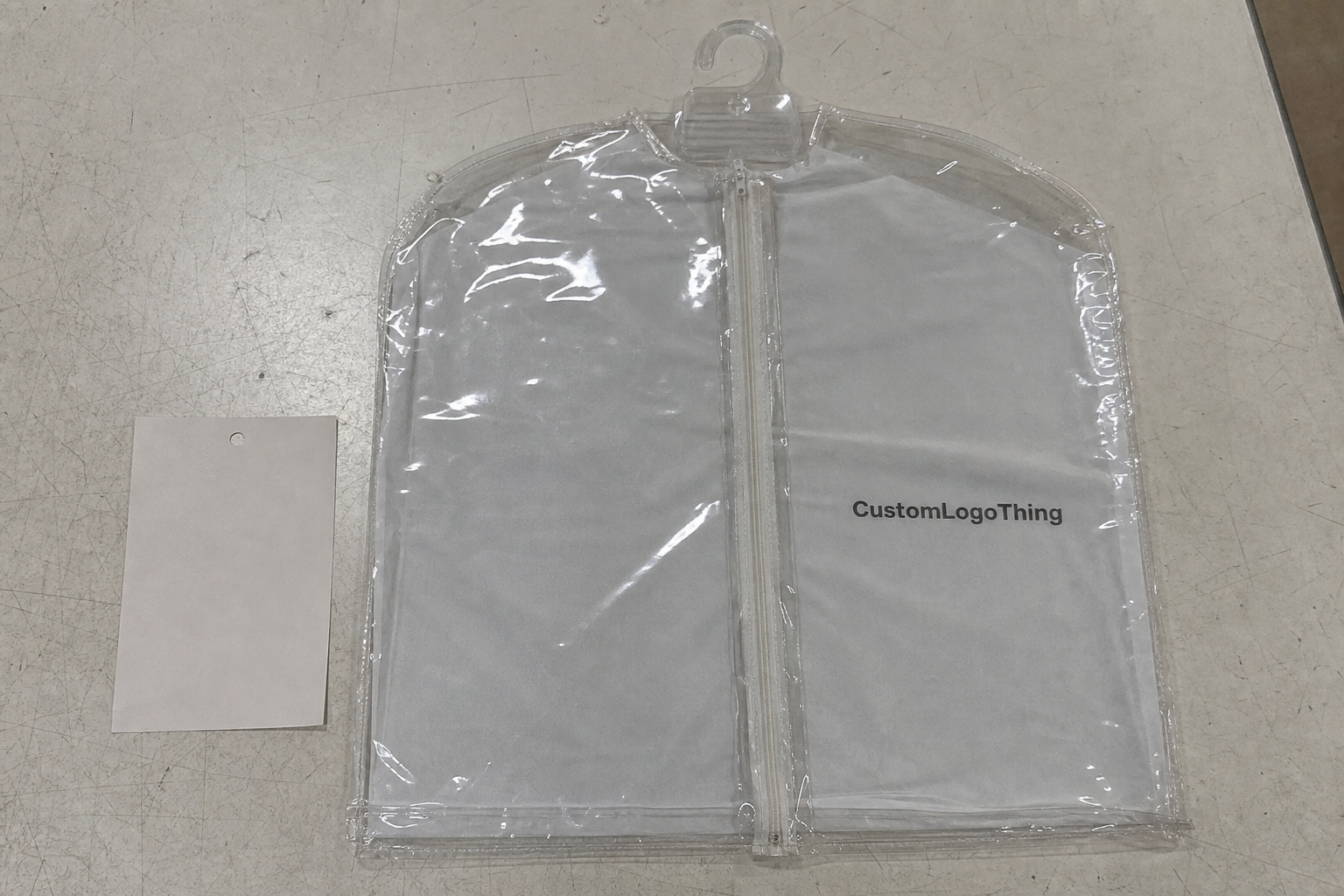

More detail on what Custom Logo Things offers resides in our Custom Packaging Products lineup, and before letting that overview gather dust, use it to inform the next hands-on walkthrough: calendar the session, assign the data owners, confirm the scorecard metrics, and lock in the scope so the branded packaging comparison becomes a living capability instead of a one-time exercise.

What should be included in a branded packaging comparison checklist?

Include structural specs (board weight in GSM, flute type), print and finish details (Pantone references, coating methods), sustainability credentials (FSC or recycled content percentages), durability tests (ISTA, ASTM results), and cost per unit at projected volumes to keep every variable measurable; I’ve even started adding a “voice of customer” note so we remember that real people will be handling this packaging long after the spec is signed off.

How do I benchmark materials during a branded packaging comparison?

Collect supplier data on GSM, coatings, and recyclability, then test actual samples for rigidity, tear resistance, and finish under real shipping conditions so your branded packaging comparison reflects what arrives at the dock, not just what sits on the spec sheet; I try to replicate the worst-case scenario, too—like tossing the box off the loading dock because I still can’t resist being dramatic.

Can branded packaging comparison help reduce fulfillment issues?

Yes—by comparing how different formats fit existing inventory systems and withstand handling, you avoid late-stage adjustments and minimize returns, making the branded packaging comparison a proactive fulfillment tool; honestly, I feel more relaxed when that comparison includes the fulfillment team’s voice, because they’re the ones who will swear at the box if it doesn’t behave.

How often should brands redo their branded packaging comparison?

Revisit comparisons whenever you change suppliers, introduce a new SKU, or update sustainability goals so the data driving decisions stays fresh and aligned with current targets; if you let the data go stale, the next launch might surprise you with a fail—and I don’t like surprises that cost money.

Who needs to be involved in a branded packaging comparison meeting?

Include brand managers, procurement, logistics, and design reps so every perspective is represented and scoring aligns with business goals, keeping the branded packaging comparison balanced and strategic; when the team is small, I occasionally invite a front-line warehouse manager—nothing beats their blunt honesty.

Once you wrap this process, remind everyone that the value of a branded packaging comparison leans on the evidence collected, the transparency maintained, and the willingness to choose the option that best protects both product and brand story, and then set the deadline for your next review, assign who will gather those key metrics, and calendar the session so the approach stays sharp rather than dormant.