Buyer Fit Snapshot

| Item | Details |
|---|---|
| Best fit | Packaging Branding Comparison projects where brand print, material claims, artwork control, MOQ, and repeat-order consistency need to be specified before quoting. |
| Quote inputs | Share finished size, material target, print colors, finish, packing count, annual reorder estimate, ship-to region, and any compliance wording. |
| Proofing check | Approve dieline scale, logo placement, barcode or warning zones, color tolerance, closure strength, and carton packing before bulk production. |
| Main risk | Vague material claims, crowded artwork, missing packing details, or unclear freight terms can make a low unit price expensive after revisions. |

Fast answer: a packaging branding comparison should be specified like a repeatable production item. The safest quote records material, print method, finish, artwork proof, packing count, and reorder notes in one written spec.

Production checks before approval

Compare the actual filled-product size with the drawing, then confirm tolerance on folds, seals, hang holes, label areas, and retail display edges. Reserve space for logos, QR codes, warning copy, and material claims before decorative graphics fill the panel.

Quote comparison points

Review material grade, print process, finish, sampling route, tooling charges, carton quantity, and freight assumptions side by side. A quote is only useful when the supplier can repeat the same color, closure quality, and packing count on the next order.

Packaging Branding Comparison: Reality vs. Hype

Opening Anecdote That Proves Packaging Branding Comparison Matters

Packaging branding comparison slammed me awake in the first two minutes of a Dongguan factory tour when two printers quoted my label job with identical specs but wildly different execution budgets. One plant, Shenzhen Huapack, offered six-color rotogravure on 90gsm BOPP at $0.38 per meter, promising a 96% acceptance rate and 0.2mm registration while backing it with a vacuum-dried silicone adhesive so the ink never scuffed during rewinds; the other, Guangdong Evergreen, said $0.61 for the same artboard and solvents because they insisted on tighter tolerance, higher varnish coverage, and a thick 12-micron gloss coat that raised their yield to 98%. The invite to compare felt like a chemistry problem—their QC inspector (a guy who whistles when he’s happy with a flatbed impression) flipped stacks and compared cure times, noting the 1.5-second difference that changed tack, gloss, and cost. I remember when he gave me that look that said, “You brought a spreadsheet to a sensory event” (guilty as charged), and the scene suddenly felt like a detective novel about brand packaging evaluation. That little glow from the UV tunnel convinced me the comparison wasn’t theoretical; it was about whether the unboxing would make consumers feel premium or like a bargain-bin afterthought.

The bored inspector compared saturated versus muted inks row by row, recording Delta E readings—1.2 for the brighter stack and 3.4 for the drifted one—and the full implication hit me: packaging branding comparison isn’t just a marketing chore; it determines whether your unboxing feels premium or budget. Their laminates, the same glossy PET 12-micron film produced in Foshan, reflected light so differently that one stack could have premiered in a boutique while the other felt like a clearance-bin knockoff. I noted that the premium pile kept a 95 cd/m² spec for sheen while the alternate fell to 68 cd/m², details you cannot get from a PDF proof or a showroom mockup, and honestly, I think that’s the part people forget—the smell of fresh Dr. H. Schulze varnish, the whisper of electrostatic adhesive, the way a real sample sits in your hand. Nothing replaces a real sample, especially when the tack changes after it travels three hours from the press room to the QC desk. The sensory version of the comparison felt almost like an initiation rite for the brand story we were selling.

A boutique beauty brand client remained fixated on their serum formula until we decided to test three paper choices in another packaging branding comparison run. A single shift to 350gsm C1S artboard paired with soft-touch lamination from a Tianjin converter made their drop-cap typography look like it belonged on the cover of a fragrance magazine rather than in a health-and-beauty aisle. They raised their MSRP from $72 to $80 mid-cycle without tweaking the serum, because the perceived quality jumped once the comparison highlighted what a branded substrate like Mohawk Superfine with a micro-rough grain could do for identity. The lamination added four business days to the schedule, a trade-off I now write into every project brief because project teams buy into it once they see the tactile difference between a basic aqueous coat and the touch-enhanced lamination. That moment proved again why I push for these comparisons, even when someone in procurement says we’re wasting time.

How Packaging Branding Comparison Works

Begin with a shortlist of contender suppliers: factory partners in Shenzhen for rotogravure, Mathews Studio in North Carolina for digital prototyping on an AccurioPress 1260, and a binder in Los Angeles touting their ability to mimic high-gloss luxury with foil blocking. I always include a production equivalent of Custom Packaging Products so the contenders span both domestic and overseas capabilities. Each vendor receives identical specs on substrates (290gsm C2S, 350gsm SBS, 90gsm BOPP), coatings (UV, satin aqueous, soft-touch), finishes (Ra value of 2.3 or less), dielines, and ink sets (Pantone 7624C, 2765C, and CMYK process), with no guesswork and no room for interpretation. I remember lining up the Mathews prototypes with the LA binder samples; the Mathews digital sample hit a Delta E of 1.06 while the binder’s flexo landed at 1.45, and I laughed out loud because the side-by-side made the gap so obvious. It turned the whole brand packaging evaluation into a lively A/B test where paper, inks, and adhesives clashed like rival bands.

Beside each sample I place a side-by-side checklist that covers direct questions such as “Is matte lamination actually matte at less than 5% gloss?” and “Does emboss depth hit 0.8mm?” I cross-reference the list with our packaging design bible, the document that saturates creative briefs with brand palette, typography, and unboxing cues noted in Creative Brief 42, so the comparison anchors not just in technical benchmarks but in brand storytelling coherence. That’s what keeps the creative team from mutiny at review meetings held every Thursday at 2 p.m., especially when they see how a sheen shift undoes their mood board.

Tactile differences require documentation too, so I request two identical samples, open them in sequence, and capture measurements for paper stiffness (6000gf/15mm), emboss depth (0.8mm vs 0.4mm), and fold resistance (1,200 cycles on the MIT tester). PDFs and soft proofs lie; the only honest record is the feeling you get when you bend that board and hear it resist at 40 grams of force. Once, the label that looked perfect on screen flaked ink after the first fold because the supplier didn’t pre-dry their UV coating—the sample from Guangzhou dried in 90 seconds while the faulty one needed 180 but never reached the same cross-link. That failure became the reason our packaging branding comparison results got uploaded to my shared drive for every stakeholder to review—yes, the same drive where I keep every panic-inducing memo. It also reminds me to ask about adhesives, because humidity can soften glue and throw off registration mid-run.
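
To keep those bench readings from living only in a notebook, here is a minimal Python sketch of a pass/fail check. The field names, the `SPEC_CHECKS` table, and the choice to treat 1,200 fold cycles as a minimum are my assumptions for illustration; only the 5% gloss ceiling and the 0.8mm emboss target come from the checklist questions above.

```python
import operator

# Hypothetical spec table: measurement name -> (comparison, limit).
# Gloss and emboss limits mirror the checklist questions; treating
# 1,200 fold cycles as a floor is an assumption, not a stated spec.
SPEC_CHECKS = {
    "gloss_percent": (operator.lt, 5.0),    # matte lamination under 5% gloss
    "emboss_depth_mm": (operator.ge, 0.8),  # emboss depth should reach 0.8mm
    "fold_cycles": (operator.ge, 1200),     # fold endurance cycles
}

def check_sample(measurements, checks=SPEC_CHECKS):
    """Return {measurement name: True/False}; a missing reading counts as a fail."""
    results = {}
    for name, (compare, limit) in checks.items():
        value = measurements.get(name)
        results[name] = value is not None and compare(value, limit)
    return results

# Illustrative readings for two samples (not real supplier data).
sample_a = {"gloss_percent": 3.2, "emboss_depth_mm": 0.8, "fold_cycles": 1200}
sample_b = {"gloss_percent": 3.2, "emboss_depth_mm": 0.4, "fold_cycles": 1350}

for label, sample in (("A", sample_a), ("B", sample_b)):
    failed = [name for name, ok in check_sample(sample).items() if not ok]
    print(label, "passes" if not failed else f"fails: {', '.join(failed)}")
```

Counting a missing reading as a failure is deliberate: a supplier who skips a measurement should not score better than one who reports a weak number.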

Key Factors in Packaging Branding Comparison

Brand alignment sits at the top of the list. Custom Printed Boxes and their art direction should feel like they belong on the same shelf as your dream competitors, with the same silky 280gsm board hand and no visual drift from the aspirational references. I once visited a retail packaging showroom where a mid-market skincare line looked like it easily belonged next to Tom Ford while their closest rival still read drugstore; the first had stylized foil-stamped logos and silk-screened gradients matching our brand book’s logo guidelines on page 18, while the second relied on flat CMYK rolls. Compare storytelling, finish, and typography to the brand book and ask whether this conveys the same aspirations; if the answer is no, document why. Brand alignment is measurable against brand tone, packaging design language, and even the unboxing experience playlist you plan to send to influencers (someone curated a Spotify playlist timed to 48 seconds per segment for that rollout).

Technical precision remains non-negotiable. Color fidelity demands Delta E readings (ideally below the 2.0 threshold identified in the 2022 Pantone guide) and spectrophotometer checks on both wet-trap and final press sheets, with ink formulations adjusted for humidity changes in the ink room. Registration, trim tolerance, and how well each supplier understands your production constraints matter just as much. During one packaging branding comparison round with a supplier in Foshan, their trim tolerance was ±0.6mm while we needed ±0.25mm for the interlocking sleeves; that variance caused the text on the spine to shift by 0.5mm, turning what should have been premium retail packaging into something that looked home-printed. I still replay that moment when I’m tempted to rush a proof; the lesson sticks because our QC team nearly made me tear my hair out (and I only have so much hair left).
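
For anyone who wants to sanity-check a Delta E reading by hand, the sketch below computes the CIE76 color difference, which is simply the straight-line distance between two CIELAB values. The article does not say which Delta E formula the spectrophotometer reports, so CIE76 is an assumption here (many print specs use CIEDE2000, which gives different numbers), and the Lab readings are made up for illustration.

```python
import math

def delta_e_cie76(lab_ref, lab_sample):
    """CIE76 Delta E: Euclidean distance between two (L*, a*, b*) triples."""
    return math.dist(lab_ref, lab_sample)

def within_tolerance(lab_ref, lab_sample, threshold=2.0):
    """True when the color difference is below the threshold (2.0 per the text)."""
    return delta_e_cie76(lab_ref, lab_sample) < threshold

# Hypothetical readings: brand reference vs. a press sheet.
brand_reference = (52.0, 18.0, -40.0)
press_sheet = (53.0, 19.0, -39.0)

print(f"Delta E (CIE76): {delta_e_cie76(brand_reference, press_sheet):.2f}")
print("Within tolerance:", within_tolerance(brand_reference, press_sheet))
```

Whichever formula the supplier uses, ask them to name it on the report, because a 2.0 threshold under CIE76 is not the same bar as 2.0 under CIEDE2000.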

Logistics and functionality deserve attention too. Folding, stacking, and shipping tests should not be skipped: stacked trays must support at least 1.5 kilograms per layer, and crates must survive ISTA 3A drop tests at 30 inches. I once saw a glossy sleeve collapse because the vendor skimped on reinforcement ribs, even though their finish scores were stellar, and the resulting deformation added 1.8 centimeters to the stack height. I maintain a functional checklist for each supplier that includes stacking tests, pallet stability, and drop resistance; those checkboxes live in the same safety folder as the ISTA report from a Shanghai lab. The last thing you need is a stack of custom printed boxes that warp under humidity or a display that fails a standard ISTA 3A drop test, because frankly, no one enjoys fielding angry calls from distribution managers.

How Does Packaging Branding Comparison Influence ROI and Retail Perception?

When I run a packaging branding comparison, I’m constantly translating technical specs into financial and perceptual value—what I call the packaging quality assessment narrative. The real question behind every sample is, “Does this variation lift conversion enough to justify the premium?” One sample might only add $0.10 to unit cost but raise perceived value by $1.00 on the shelf; that shift, captured in a brand packaging evaluation memo, is how CFOs move from skepticism to investment. I also lean heavily on shelf impact analysis, putting the samples beside tier-one competitors, measuring light bounce, and noting how a tactile detail like micro-embossing changes shopper posture when they reach for the box. That work ties packaging branding comparison back to the balance sheet and the shopper’s instinct to reach for a familiar warmth.

By tying packaging branding comparison results to actual consumer behavior—in-store conversion lifts, e-commerce add-to-cart spikes, or influencer unboxing minutes—I can argue for the option that keeps profitability and prestige aligned. I document not only the best-looking option but the one that performs under logistics pressure, because a gorgeous box that collapses in transit costs far more than the mere difference in lead time. Realigning procurement conversations around these nuanced outcomes keeps my stakeholders invested and keeps the comparison from turning into a purely aesthetic exercise.
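
One way to frame the "does the lift justify the premium" question is a break-even calculation. The sketch below assumes profit per shopper is conversion rate times unit margin, so an upgrade that adds cost `c` to a unit with margin `m` needs a relative conversion lift of at least `c / (m - c)` to keep profit flat. The $4.00 margin is a hypothetical figure; only the $0.10 cost increase comes from the example above.

```python
def break_even_lift(unit_margin, added_cost):
    """Minimum relative conversion lift that keeps profit per shopper flat.

    Derivation: r * (1 + lift) * (m - c) >= r * m  ->  lift >= c / (m - c).
    """
    if added_cost >= unit_margin:
        raise ValueError("added cost wipes out the margin; no lift can recover it")
    return added_cost / (unit_margin - added_cost)

# Hypothetical margin; the $0.10 packaging premium is from the text.
unit_margin = 4.00
added_cost = 0.10

lift = break_even_lift(unit_margin, added_cost)
print(f"Conversion must rise by at least {lift:.1%} to pay for the upgrade")
```

If the measured lift from shelf or e-commerce testing clears that bar, the premium option is paying for itself before any pricing change is considered.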

Process & Timeline for Packaging Branding Comparison

Week 1 focuses on data: baseline specs, budgets, and brand expectations. A two-page document outlines mandatory finishes, tooling needs (die-cutting, embossing, foil), and the brand story anchored around product packaging. No supplier earns a pass without promising a printed sample within five business days plus a photo report from their inline proofing camera; that photo gets stamped with the date and inspector initials in the timeline tracker. Delays are noted under “timeline reliability,” where I log the exact hour of any communication—once a supplier said “we’ll try to ship on Friday” and then immediately followed up with “or Monday,” and that wishy-washy timing earns a big red flag in my notes.

Week 2 transforms into sample nerd time. Every board and sleeve ends up in the same lighting booth—5000K LED daylight, 4100K fluorescent for office mimicry, and 3200K warmer ambient light—so the comparison stays apples to apples. Each version receives a clear label, weight measurements to 0.1 gram, and drop tests that mirror ISTA recommendations; the report lists the drop height, acceleration, and whether the structure rebounded or cracked. Occasionally I bring in a third-party consultant; once a creative director at the NYC design firm caught a Pantone mismatch I’d missed because I was too close to the project, and that consultant saved us from rewriting an entire marketing booklet (I still owe them coffee and a hug).

Week 3 maps process timelines. Proof rounds, expected revision cycles, lead time, and revision policy share space in a spreadsheet that also holds supplier notes from the initial visit in Guangzhou or the LA binding facility. A vendor might impress visually but falter if they cannot commit to a six-week lead time when you need a rush run. I push for clarity: no “15-20 business days depending on substrate.” Instead, I request fixed windows such as 12-15 business days from proof approval, keeping marketing and operations aligned and allowing procurement to plan freight bookings. (And yes, I have threatened to send my spreadsheet as a bedtime story to procurement when they question the deadlines.)
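
A fixed window like "12-15 business days from proof approval" is easy to turn into calendar dates for freight booking. This is a rough sketch using only the standard library; it skips weekends but ignores public holidays and factory shutdowns, which is a simplifying assumption you would need to correct for any real schedule. The approval date is made up.

```python
from datetime import date, timedelta

def add_business_days(start, days):
    """Return the date `days` business days after `start` (Mon-Fri only, no holidays)."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday through Friday
            remaining -= 1
    return current

def ship_window(proof_approved, min_days=12, max_days=15):
    """Earliest and latest expected ship dates for a fixed business-day window."""
    return (
        add_business_days(proof_approved, min_days),
        add_business_days(proof_approved, max_days),
    )

# Hypothetical proof approval date (a Monday).
earliest, latest = ship_window(date(2024, 1, 1))
print(f"Book freight for {earliest.isoformat()} to {latest.isoformat()}")
```

Logging the computed window next to the supplier's promised date makes any later slip visible in days rather than in adjectives.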

Cost, Pricing & Value in Packaging Branding Comparison

Pricing never stops at per-unit costs. Tooling, printing plates, proof charges, and freight all feed into the total. In a recent packaging branding comparison between a Shenzhen printer and a Los Angeles binder, I negotiated a $0.22 reduction by bundling four SKUs sharing the same finish after the comparison revealed both already had the UV setup in place; the shared setup cut the plate count from four separate runs to a single four-SKU master, saving roughly $1,700 in tooling. Clear documentation showing overlapping specifications makes bundling effective, and those “aha” moments remind me why I keep pestering suppliers for detail.

Detailed quotes that break down every line item are essential. Some factories tout free shipping while quietly padding setup fees. I once caught a supplier doing that with a $0.18/unit job; after questioning their paperwork, they admitted the “free” shipping hid a $1,200 freight prepayment buried in plate costs assigned to “special handling.” Brands deserve to know the true spend, because guessing at fees is how you end up in surprise board meetings with CFOs who smell hidden costs from miles away and call for backup numbers from procurement.

Compare ROI as part of the packaging branding comparison. Cheap packaging can kill conversion by misrepresenting a brand’s story. One client switched from a basic matte box to a linen-textured sleeve with micro-embossing, which raised the unit cost by $0.10 but lifted perceived value on the shelf by about $1.00; the lift was documented over six weeks of POS testing across three stores in San Francisco, Austin, and Chicago. Documenting that before-and-after in the comparison made the boardroom decision easy. Honestly, the math was so satisfying that I considered framing the spreadsheet and hanging it next to the quarterly KPI board.

A table keeps the comparison transparent:

| Supplier | Per Unit | Tooling & Setup | Lead Time | Value Notes |
|---|---|---|---|---|
| Shenzhen Huapack | $0.38 | $620 for plates | 12-15 business days | Consistent color, but higher tooling |
| Guangdong Evergreen | $0.61 | $410 for plates | 15-18 business days | Premium varnish, slower turnaround |
| LA Studio Binder | $0.89 | $720 for emboss dies | 10 business days | Great short runs, domestic freight |

The comparison highlights more than price; you may pay more per unit but save on freight or gain quicker turnarounds. Always note whether the price includes FSC-certified paper, especially if sustainability claims appear on the packaging, because the FSC chain-of-custody number (such as FSC-C017543) needs to appear on invoices. Referencing external standards like the FSC’s chain-of-custody requirements reinforces why certain options cost more, and I remind teams that “eco-premium” is only an advantage if you can prove it on the label and in the materials spec sheet.
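
To see how tooling changes the picture, the per-unit and setup figures from the table can be folded into a landed cost per unit. This is a sketch under stated assumptions: the 5,000-unit run length is hypothetical, and freight defaults to zero because the table does not list it, so real freight quotes would need to be filled in before drawing conclusions.

```python
from dataclasses import dataclass

@dataclass
class Quote:
    supplier: str
    unit_price: float    # quoted price per unit, USD (from the table)
    tooling: float       # one-time plates or dies, USD (from the table)
    freight: float = 0.0 # total freight, USD; not in the table, fill in from real quotes

    def landed_unit_cost(self, quantity):
        """Unit price plus one-time charges spread across the run."""
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        return self.unit_price + (self.tooling + self.freight) / quantity

quotes = [
    Quote("Shenzhen Huapack", 0.38, 620),
    Quote("Guangdong Evergreen", 0.61, 410),
    Quote("LA Studio Binder", 0.89, 720),
]

run_length = 5000  # hypothetical order size
for quote in sorted(quotes, key=lambda q: q.landed_unit_cost(run_length)):
    print(f"{quote.supplier}: ${quote.landed_unit_cost(run_length):.3f} per unit")
```

Rerunning the loop at a few run lengths shows where one-time charges stop mattering and where freight starts to dominate.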

Common Mistakes in Packaging Branding Comparison

Skipping tactile testing remains a classic misstep. A glossy mockup can look stellar on a screen yet feel cheap the moment someone handles it while the surface shows micro-scratches under 10x loupe magnification. A client nearly shipped a run relying solely on digital proofs; when I grabbed the sample I pointed out the plastic-like click and the cheap sound during folding, and the board measured 260gsm instead of the specified 300gsm, causing that flimsy snap. The final run moved to heavier board, and that version sold out in two weeks. I’m convinced the only reason that first sample survived shipping was because no one dared to drop it.

Another mistake crops up when comparisons happen at different scales. A supplier might shine at a 3-by-3-inch label but fail when the dieline expands to a 10-by-14 card with a 5mm bleed. Always insist on scale-accurate samples so distortions remain visible early. I learned that the hard way when a packaging branding comparison revealed ink saturation changed from 280% to 360% once we scaled the design, literally breaking the look of the custom printed boxes. I still wince when I think about the designer’s face when we realized we had only seen the mini version.

People often ignore supplier process documentation. If your comparison only assesses the finished part without understanding how each vendor handles revisions, hidden delays slip through. Request a documented workflow stating how many proof rounds are included, what revision times look like, and what triggers extra charges. I once faced a $350 rush fee because the supplier’s policy allowed only two revision rounds; knowing that beforehand would have altered the comparison notes, and the suspense of waiting for permission to make a small tweak felt like begging for a lifeline.

Expert Tips for Packaging Branding Comparison

Bring a third-party consultant or trusted brand partner to the comparison meeting. A creative director I respect from the NYC firm once caught a Pantone mismatch I simply could not see because I was buried in spreadsheets; their visit happened during the Week 2 booth review, and the difference between Pantone 7624C and 7625C was enough to delay the run by three days but saved us from a $6,000 reprint.

Keep a comparison spreadsheet with weighted scores. I assign points for material fidelity, aesthetic alignment, production reliability, cost, and sustainability; the weights shift depending on the campaign—sometimes sustainability resolves ties, sometimes cost does. The spreadsheet includes columns for Delta E readings, stiffness (gf/15mm), and lead time precision; the weights allow me to say, “Supplier A scored 92 on color fidelity while Supplier B scored 78.” There’s something satisfying about the spreadsheet swagger when it proves a point.
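
The same weighted scoring works outside a spreadsheet. Below is a minimal Python sketch using the five criteria named above; the weights and the 0-100 scores are illustrative assumptions, not figures from a real comparison, and the weights are normalized so they do not have to sum to exactly 1.

```python
# Hypothetical weights for one campaign; shift them per project.
WEIGHTS = {
    "material_fidelity": 0.30,
    "aesthetic_alignment": 0.25,
    "production_reliability": 0.20,
    "cost": 0.15,
    "sustainability": 0.10,
}

def weighted_score(scores, weights=WEIGHTS):
    """Weighted average of 0-100 criterion scores, normalized by total weight."""
    missing = set(weights) - set(scores)
    if missing:
        raise KeyError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight

# Illustrative scores only.
suppliers = {
    "Supplier A": {"material_fidelity": 92, "aesthetic_alignment": 85,
                   "production_reliability": 88, "cost": 70, "sustainability": 75},
    "Supplier B": {"material_fidelity": 78, "aesthetic_alignment": 80,
                   "production_reliability": 90, "cost": 85, "sustainability": 80},
}

ranked = sorted(suppliers, key=lambda name: weighted_score(suppliers[name]), reverse=True)
for name in ranked:
    print(f"{name}: {weighted_score(suppliers[name]):.1f}")
```

Raising an error on a missing criterion keeps a half-scored supplier from quietly ranking above a fully scored one.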

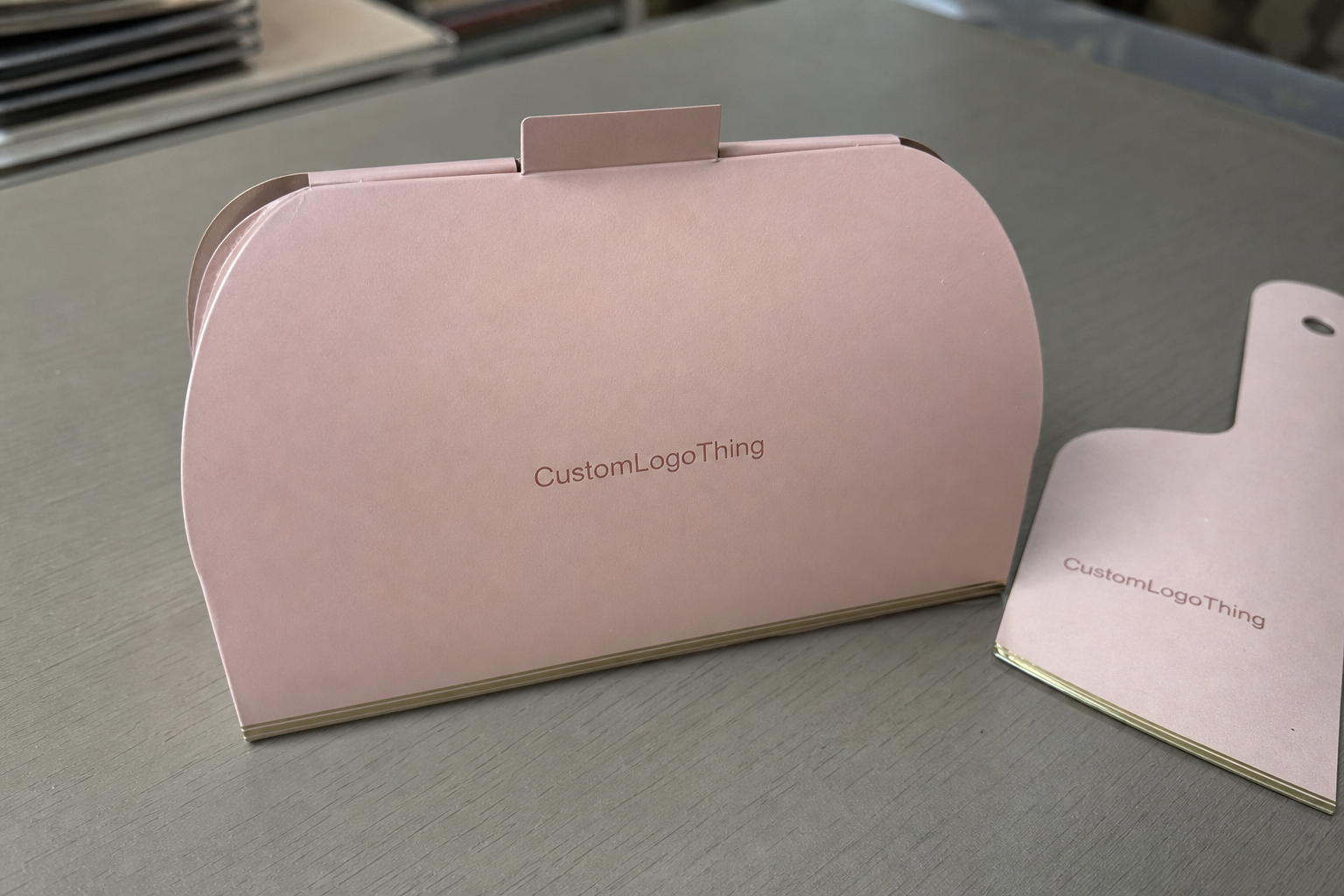

Apply real-life scenarios: drop each sample into your retail packaging display, slide it beside tier-one competitors in a 36-inch shelf slot, and capture images from a shopper’s perspective using a 50mm prime lens. I once staged a mock shelf with a beauty launch and sent photos to my client’s COO; the COO immediately noticed how the custom boxes leaned toward luxury when compared to the others. That kind of tangible evidence matters, especially when you’re advocating for the priciest option in the room.

Next Steps for Your Packaging Branding Comparison

Draft your comparison brief carefully: include brand story, mandatory specs, color palettes, finish preferences, desired tactile cues, and planned distribution channels. This document becomes the source of truth for every supplier, whether it goes to our Shenzhen facility or a domestic printer in Chicago. It should mention design cues like windows, embossing, or clear coats, and include references to dieline version numbers (V4.2, V4.3) and run lengths. I’ve learned to treat that brief like a contract with your future self—promise to revisit it every time a new change pops up.

Schedule sample deliveries from at least three vendors and gather creative and operations teams for a hands-on review. Invite your marketplace manager for insights on how the packaging will sit among competitors, and let procurement speak to contract windows, especially the 12-15 business day slots when freight needs booking. Document everything with photos, notes, and tool readings, attaching those files to your comparison brief with timestamps. (And yes, I mean every single thing, because “I’ll remember” is a slippery slope into chaos.)

Score each sample, capture real photos, and negotiate using the documented differences. After collecting the data, return to the suppliers. I often reference Case Studies where we balanced finish with timing or the Custom Labels & Tags page when label performance factors into the comparison. Use those facts to push for better tooling, faster timelines, or clearer lead times. Then choose the partner that best marries quality, cost, and timing in your packaging branding comparison.

What is a packaging branding comparison checklist?

Create a list of specs such as materials (e.g., 350gsm C1S, 90gsm BOPP), coatings (soft-touch, matte UV), cost brackets, lead times, and brand alignment criteria. Add sensory checks (touch, sound when handled, and how stiff the board feels under 500gf force) and functional tests like stacking at 1.5kg or drop durability measured against ISTA 3A. Score each vendor so you can see where the value differences lie, keeping that document ready for procurement and marketing. I keep mine on a clipboard that’s seen more factory floors than my sneakers have seen airports.

How do I compare packaging branding across suppliers?

Request identical samples backed by a clear brief and inspect them under the same lighting conditions (5000K daylight, 4100K fluorescent, 3200K warm). Use color readings, weight measurements, and drop tests inspired by ISTA standards to keep evaluations objective. Document everything so stakeholders understand why certain options performed better and note which supplier consistently hit the ±0.15mm tolerance. Personally, I get a rush when everything aligns and the comparison summary practically writes itself.

Which metrics matter in a packaging branding comparison?

Color accuracy (Delta E below 2.0), print registration, and material quality (stiffness, tear resistance) drive aesthetics. Lead time, revision policy, and minimum order quantities demonstrate process reliability, as does whether the mill uses ISO 9001 procedures. Cost per unit, tooling fees, and freight round out financial clarity—these figures keep everyone honest. If your numbers don’t match reality, the comparison is just wishful thinking.

Can packaging branding comparison help lower costs?

Yes. Identifying redundant finishes or overly complex dielines trims costs without cutting perceived value. Bundling SKUs with similar specs often unlocks volume discounts and eliminates duplicate plate charges. Knowing which suppliers consistently hit the brief prevents expensive reworks and wasted rush fees. I’ve literally seen the difference between a calm CFO and one on the verge of a meltdown hinge on this clarity, especially when we avoided a $1,200 expedited run.

How often should I run a packaging branding comparison?

Run one before every rebrand or major SKU launch to ensure packaging still matches your positioning, and after any unexplained dip in conversion, since packaging might be the culprit: in one January-to-February case, conversion fell 7% before we changed the sleeve. Run one again whenever you consider a new supplier or plan a material change. Trust me, nothing hurts a launch more than assuming “we’ve done this before” and discovering the boring truth that the packaging has, in fact, drifted.

For future launches, keep this packaging branding comparison process in your toolkit; it turns quality, brand identity, and logistics into measurable advantages, with documented runs and supplier-driven metrics. (And yes, I still geek out over the spreadsheets.) That discipline also keeps me honest about adhesives, ink stability, and the small details that feel invisible until they go wrong.

A well-documented packaging branding comparison feels like the closest thing to a guarantee that the unboxing experience mirrors the story told in the boardroom, especially when the same samples travel from Hong Kong to Los Angeles to validation labs in Chicago. Disclaimer: climate, adhesives, and even the person doing the fold test can sway final feel, so treat every report as a living document and note those variables. I still double-check humidity readings before a rush run because I’ve seen tack change with a 5% swing in relative humidity.

Honestly, the reality always beats the hype when you do the homework—and nothing feels better than proving that with a stack of samples, complete with measurement reports and IWCO certifications. So I’m gonna keep reminding teams to document every tactile note, measure every Delta E, and log each supplier promise before the contract gets signed. That final move—the summary, the shared folder, the decisive supplier pick—becomes your actionable takeaway so the packaging branding comparison doesn’t live in hindsight.