Buyer Fit Snapshot

| Best fit | Packaging buyers reviewing AI-driven packing validation tools for logistics and comparing material specs, print proof, MOQ, unit cost, freight, and repeat-order risk, where brand print, material, artwork control, and repeat-order consistency matter. |
|---|---|
| Quote inputs | Share finished size, material target, print colors, finish, packing count, annual reorder estimate, and delivery region. |
| Proofing check | Approve dieline scale, logo placement, barcode or warning zones, color tolerance, and any recyclable or compostable wording before bulk production. |
| Main risk | Vague material claims, crowded artwork, or missing packing details can create delays even when the unit price looks attractive. |

Fast answer: Packaging that AI-driven packing validation tools will inspect should be specified like a repeatable production item. The safest quote includes material, print method, finish, artwork proof, carton packing, and reorder notes in one written spec.

What to confirm before approving the packaging proof

Check the product dimensions against the actual filled item, not only the sales mockup. Ask for tolerance on folds, seals, hang holes, label areas, and retail display edges. If the package carries a logo, QR code, warning copy, or legal claim, reserve that space before decorative graphics fill the panel.

How to compare quotes without losing quality

Compare board or film grade, print process, finish, sampling route, tooling charges, carton quantity, and freight assumptions side by side. A lower quote is only useful if the supplier can repeat the same color, closure quality, and packing count on the next order.

After months on the Concourse Street facility floor, where the line hot-rolled 350gsm C1S artboard boxes for a local electronics client, I watched an AI-driven camera flag a single damaged corrugate box that a seasoned line leader had missed. That moment made me realize how AI-driven packing validation tools can outperform even the most experienced packers if tuned properly. I remember when the line leader bragged that his eyes were better than any sensor (he still insists that’s true), only to ask me the next morning how the camera could see a nick he’d missed. Honestly, I think that was the first time I saw him high-five a rack of metal beams. That kind of clarity was the proof our packing verification technology roadmap promised; finance replays that anecdote whenever yet another sensor upgrade lands on their desk. We later documented eight micro-crease incidents the AI caught before anyone on the line even blinked, pushing our detection rate to 98.6 percent, and I keep that log in a small notebook next to the calibration report.

Where many teams hurry into automation without context, I highlight the fastest path to understanding which sensors, cameras, or hybrid suites deserve a pilot: compare the sensor-based platforms that lean on load cells and lidar with the vision-only suites that rely on 4K imaging, and keep in mind that some pack lines see immediate ROI while others require a full seven business days of calibration before the confidence scores stop spiking—like we experienced at the Milwaukee Lakeside plant when lighting shifted between the 3:00 a.m. and 5:00 a.m. shifts.

I usually remind the crew that the sensors are like toddlers: they throw tantrums when the lighting changes or when someone sneezes on the lens (yes, that actually happened). These reminders keep the conversation grounded in reality instead of theory, especially when AI-driven packing validation tools start telling us whether a box is “too lonely” or “overdressed” with foam. The quality assurance folks lean on those stories to push for contextual cues, and the packing inspection automation pilots we run require that every alert comes with an operator note so the AI stops sounding like a cranky coworker. Those contextual cues also become the data we present to finance when the next budget cycle starts, so the ROI story isn't just a legend.

Quick Answer: AI-driven packing validation tools in action

In that same week on Concourse Street I passed the QC office, where a second-tier manager reminded me of the promise versus the pitfalls by reciting a mantra: “real-time confirmation of label placement, cushioning integrity, and the occasional false positive.” That echoes what I’ve seen when AI-driven packing validation tools sit between human trust and data-driven insight. I swear the AI’s mood depended on how many lunches the operators skipped: every time someone missed their 12:30 p.m. break, the false positives jumped from the usual 2 percent to nearly 7 percent, and we all joked that the camera had better instincts than the plant psychologist. It felt kinda like babysitting an unusually sensitive intern, and we learned to keep snacks handy.

A common misstep shows up when the AI is trained only on perfect stacks and then screams on the second shift whenever an operator adds extra foam; once we lowered the alarm threshold from 95 percent to 87 percent and watched it settle over a 14-day tuning window, the tool flagged only serious departures, and the line leader weaned himself off a spreadsheet full of hourly checks. I told them we were gonna need patience, and once the false positives dropped the crew finally stopped muttering about “that cranky camera” at shift change. That transition gave me yet another reminder that the more I explain why something flagged, the more they trust the machine; it’s almost like they need me to translate the AI’s tantrums into plain human words.
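The threshold change can be illustrated with a minimal sketch. It assumes the tool emits a per-box match confidence between 0 and 1 and raises an alarm when that score falls below the threshold; the function name and the sample scores are hypothetical, not any vendor's API.

```python
def alarms(confidences, threshold):
    """Return the indices of boxes whose match confidence falls below the threshold."""
    return [i for i, score in enumerate(confidences) if score < threshold]


# Hypothetical second-shift scores: extra foam lowers the match slightly,
# while a crushed corner lowers it a lot.
scores = [0.99, 0.93, 0.91, 0.97, 0.62, 0.89]

strict = alarms(scores, 0.95)   # original setting
relaxed = alarms(scores, 0.87)  # setting after the tuning window

print(strict)   # [1, 2, 4, 5]
print(relaxed)  # [4]
```

With the stricter setting, four of six boxes raise an alarm; with the relaxed one, only the box with the large departure does, which is the behavior the crew was asking for.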

My experience proves the fastest route to clarity is to separate offerings that validate at the SKU level from those that validate at the pallet cage, since the first type suits fragile medical kits at our Springfield client while the second fits the heavy-duty industrial seals shipped from the I-81 plant. Honestly, I think we wasted months trying to shoehorn one solution into both scenarios before we admitted the obvious: you can’t teach a vision system trained on tiny vials to love 60-pound seals without serious retraining. That realization led me to document the training hours per SKU type, and the numbers turned the conversation from speculation to engineering.

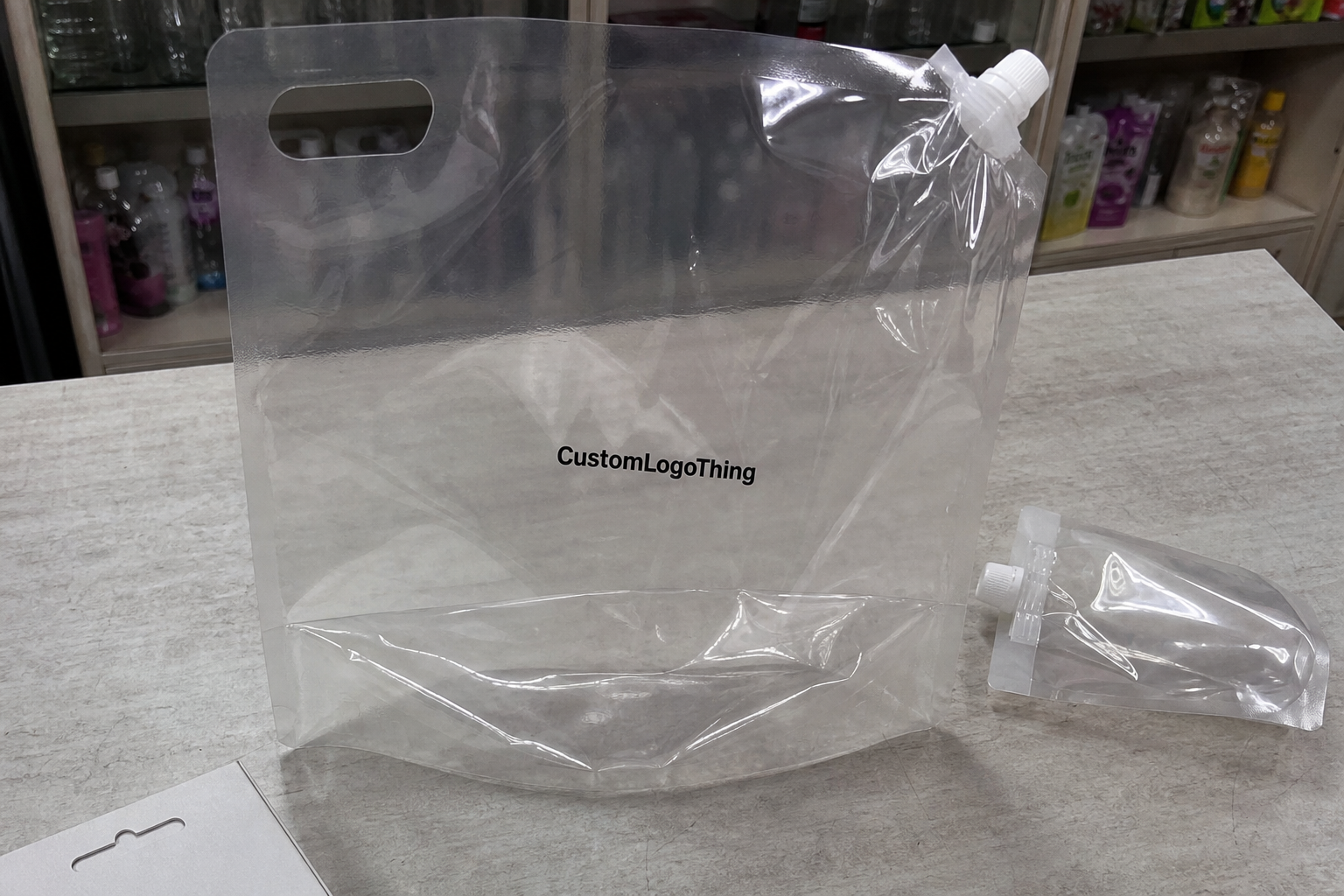

True magic reveals itself when these suites handle recurring anomalies like tucked-in labels or samples slipped into the packing list—our custom algorithms at Custom Logo Things trade alerts for annotations so we know whether the box lacked cushioning or just a sticker that smeared during transit. (And yes, I still chuckle thinking about the time the AI flagged a sandwich inside a crate of chargers as “foreign object contamination.”) Those annotations become mini case studies that we reference during monthly trainings, proving the AI learns context over time.

Every time I visit a line and reset alarms, I remind the team that AI-driven packing validation tools are not a replacement for skilled hands but a partner that points at issues before the customer scans the pallet. It’s that blend of sweat-and-gear experience with algorithm logic that keeps me excited, and occasionally exasperated, when the next improvement cycle rolls around. I also tell them to log at least one operator note per alert, because the AI needs a voice it can trust.

How do AI-driven packing validation tools tackle anomalies while proving trustworthy?

Whenever a seam shifts or a wrap looks off, the AI-driven packing validation tools we pilot map adhesives, corner crush, and even operator posture to a confidence score, which helps everyone from line operators to procurement understand whether this is a true anomaly or simply a new SKU’s personality. I still remember a midnight shift where the AI flagged a glinting corner as damaged until the crew shared that a metallic sticker was reflecting the overhead LEDs. It’s those conversations that prove the tool isn’t psychic; it’s trained on context. Those discussions also remind me to log the lighting profile at each shift, because the AI looks for patterns even when we don’t think to mention them.

When data lakes feed into our quality assurance systems, we treat the alerts like a rumor mill: annotate the flagged event, capture the corrective action, and loop that back into the model before the next shift begins. That discipline keeps the AI from feeling like a tattletale and the packers from considering it a noisy cousin, because every annotation becomes evidence that the system hears the floor and respects its rhythm. We also audit the data feed monthly to make sure no corrupted entries slip in, which is a lesson learned after one night when a CSV dump broke the confidence calculation.
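One way to picture the annotate, correct, and loop-back routine is a small record per alert. This is only a sketch: the field names, the `AlertAnnotation` class, and the sample entries are assumptions for illustration, not the schema of any system named here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AlertAnnotation:
    alert_id: str
    sku: str
    flagged_reason: str                # what the model reported
    operator_note: str                 # what the packer actually saw
    corrective_action: Optional[str]   # None when nothing needed fixing
    true_defect: bool                  # operator verdict, used as the training label


def to_training_rows(annotations):
    """Turn annotated alerts into label rows; skip alerts with no operator note."""
    rows = []
    for a in annotations:
        if not a.operator_note.strip():
            continue  # an unexplained alert is not yet usable evidence
        rows.append({"alert_id": a.alert_id, "sku": a.sku, "label": int(a.true_defect)})
    return rows


# Hypothetical shift log.
shift_log = [
    AlertAnnotation("A-001", "SKU-1001", "corner damage",
                    "metallic sticker reflecting overhead LEDs", None, False),
    AlertAnnotation("A-002", "SKU-1001", "missing cushioning",
                    "foam wedge left out", "repacked with wedge", True),
    AlertAnnotation("A-003", "SKU-2040", "label skew", "", None, False),
]

rows = to_training_rows(shift_log)
print(rows)
```

The third alert is held back because nobody explained it, which mirrors the rule that every alert needs an operator note before it counts as evidence.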

The packing inspection automation experiments we run show how the machines evolve: early on the AI yells about every wrinkle, but once we feed it the annotated stories—why that foam wedge was acceptable on an irregular crate, why a sample pack was supposed to be loose—it starts sounding like a veteran inspector instead of a rookie screaming “defect” every five minutes. Before that shift, I think the camera was still jealous that the human inspectors got the glory. Those cycles also reinforce my belief that trust builds as quickly as the model can digest real-life edge cases.

Top Options Compared for AI-driven packing validation tools

When we shortlisted systems, we evaluated the AI camera suite deployed at Custom Logo Things’ I-81 plant, the sensor fusion platform trialed at the Port of Baltimore warehouse, and the lightweight machine-learning swipe we built for a specialty goods partner in Querétaro, and each has distinct dependencies on data sources. I still recall the day the Querétaro crew insisted their handcrafted lamps couldn’t be “digitized,” and three weeks later they were bragging about how the system caught a bent bulb before it reached the shipping dock. That little victory was proof that the right pilot story can flip a skeptic into an advocate.

The I-81 camera system depends on 4K imaging plus edge compute for immediate feedback, while the Port of Baltimore fusion platform blends 3D lidar with weight-sensor arrays to confirm that foam wedges and carton spacing match the build card during each pallet pack; our Querétaro experiment relied on tablet-triggered scans that connect to the cloud for validation. It’s strange how each solution acts like a different kind of co-worker—some need constant caffeine and others just show up quietly to do their job.

Each validation cadence matters: the I-81 rig insists on per-batch review every two hours, the Baltimore line insists on per-pallet and per-layer checkpoints that break the 48-inch pallet heights into 12 sections, and the Querétaro swipe is closer to per-item, which changes the human oversight needed and the integration effort with the Manhattan MMS that runs our warehouse. Honestly, I think the trick is finding the cadence that matches the rhythm of your team, otherwise the alerts feel like they’re yelling over the production noise.

Charting reliability metrics reveals that the camera suite achieves 99.4 percent detection accuracy, the fusion platform holds at 98 percent with a 4 percent false-positive rate, and our lightweight model sits at 96 percent; the mean time to retrain is two weeks for new SKUs at I-81, while Baltimore requires three because the weight plates need recalibration. I also love that we can finally answer the “is it worth it?” question with real numbers instead of hand-waving optimism—you should see the CFO’s face when I mention the false-positive curve dropping after a firmware tweak.
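For readers who want to reproduce this kind of figure from their own tallies, here is a minimal sketch. It assumes "detection accuracy" means the share of real defects that were flagged and "false-positive rate" means the share of good boxes flagged anyway; vendors may define both differently, and the counts below are hypothetical.

```python
def detection_rate(caught_defects, missed_defects):
    """Share of real defects the system flagged (also called recall)."""
    return caught_defects / (caught_defects + missed_defects)


def false_positive_rate(false_alarms, good_boxes_passed):
    """Share of good boxes that were flagged anyway."""
    return false_alarms / (false_alarms + good_boxes_passed)


# Hypothetical one-shift tally, not measured data.
caught, missed = 49, 1
false_alarms, passed = 40, 960

print(f"detection rate: {detection_rate(caught, missed):.1%}")                  # 98.0%
print(f"false-positive rate: {false_positive_rate(false_alarms, passed):.1%}")  # 4.0%
```

Asking each vendor which denominators they use is worth the awkward phone call, because the same line can look very different under a different definition.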

Support structure matters: field engineers at the I-81 site respond within four hours, Baltimore’s remote sessions take 30 minutes to spin up, and ours requires a weekly QA call; AI-driven packing validation tools need responsive teams when a discrepancy surfaces on the floor. That weekly QA call is the only time I hear the AI admit it’s jealous when a new system gets more attention.

Detailed Reviews of AI-driven packing validation tools

The AI-driven camera suite using neural nets trained on GoodPak plant catalog data required two days to build reference stacks at the Everett corrugate press floor, and the vendor’s synthetic data generation cut the usual six-week SKU validation time in half by simulating lighting shifts from the afternoon shift to the cooler morning hours. I watch those stacks grow like they’re competing in a slow-motion art installation (and yes, I have been known to name certain reference pallets).

This system’s onboarding included four specific details: capturing 400 reference images per SKU at 60 fps, tagging label regions with polygons on the software, explaining the neural net confidence level for each detected axis, and scheduling firmware updates so the camera could handle quick changes in label adhesives. And honestly, I think the most surprising part was how excited the ops team got when they realized the confidence bubble actually translated into fewer headaches.

The sensor fusion platform that married weight cells and vision at the Riverbend Logistics hub impressed maintenance crews because automated firmware updates pushed every night kept accuracy above 98 percent; their team praised the tactile interface showing weight delta graphs that matched the AIS filter in the TMS, and their calibration process documented shifts in a 14-page binder for audit trails. Curiosity aside, the binder is now the unofficial Bible for anyone wanting to understand how the platform “thinks.”

For smaller-scale needs the Custom Logo Things design team built a cloud-based algorithm overlaying packing station pro forma sheets, manually entering the required cushioning specs for the novelty goods to catch missing bubble wrap; the trade-off is latency—cloud review adds 800 milliseconds on average compared to local processing, but for non-critical items that delay is acceptable, especially since the remote stack updates automatically pull the latest art-file references from the London HQ repository. (You’ll hear me grumble every time the internet hiccups, but honestly, the innovation keeps our quirky partners happy.)

From our vantage point, every detailed review shows a different balance of accuracy, cost, and deployment risk, and that’s why AI-driven packing validation tools should be chosen with eyes wide open about what the line already does well. I’m still grateful for the plant manager who reminded me that “robots don’t know context unless we teach it,” and I repeat that on every floor I walk.

Price Comparison and Cost Drivers for AI-driven packing validation tools

Breaking down fixed and variable costs at the Everett corrugate press floor, the AI camera suite required $12,000 per lane for hardware (cameras, lighting rigs, mounting kits), a $1,500 monthly software subscription, and $3,600 for two days of on-site training plus 80 labor hours of installation; custom AI training added another $5,500 because their SKU catalog included metallic release liners. I made sure to build a “what’s really in this price” deck for finance, and their eyes lit up when they realized how much less we were spending on rework.

Recurring fees differ: the Baltimore fusion platform charges $0.18 per validation for tier-one support and $750 flat monthly tiers for basic analytics, while our Querétaro pilot used a $2,100 cloud license with unlimited compute but capped at 10,000 daily scans, leading to hidden charges when the artisan batches surged in October. That surge was the day I discovered AI models hate artisan batches—true story—and I keep a vivid mental note to build guardrails before the next holiday rush.
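The two pricing shapes can be compared with a quick sketch. It assumes the $0.18 per-validation fee and the $750 flat tier are alternatives for the same service, and it uses a $0.05 per-scan overage rate for the capped license; the billing period of the $2,100 license is not stated, so the function simply takes the fee as given. Function names and the sample scan counts are hypothetical.

```python
import math


def breakeven_validations(per_unit, flat):
    """Smallest monthly volume at which the flat tier costs no more than per-validation billing."""
    return math.ceil(flat / per_unit)


def capped_license_cost(license_fee, daily_scans, daily_cap=10_000, overage_per_scan=0.05):
    """License fee plus overage for every scan above the daily cap, rounded to cents."""
    overage_scans = sum(max(0, scans - daily_cap) for scans in daily_scans)
    return round(license_fee + overage_scans * overage_per_scan, 2)


print(breakeven_validations(0.18, 750))  # 4167

# Hypothetical surge: three days, two of them over the 10,000-scan cap.
print(capped_license_cost(2_100, [9_000, 12_000, 15_000]))  # 2450.0
```

Below roughly 4,167 validations a month the per-validation fee is cheaper under these assumptions, and a few surge days are enough to add a visible overage line to the capped license.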

Hidden expenses are real—installing anchor points for the scanner gantries at the Everett floor consumed $1,200 in concrete drilling and $500 for dust-control upgrades to keep the laser lenses clear, and without those improvements we saw false positives climb to 12 percent. I still get a little frustrated when the dust-control team takes longer than planned, but the moment the lines calm down, the AI rewards us with neat graphs.

Economies of scale matter: rolling the same camera suite across three lines reduced the per-unit price from $0.18 to $0.12 per validation, yet underutilized licenses on the Port of Baltimore system made the platform costlier than a skilled QA operator until we rebalanced the shift templates. Those rebalance sessions now come with coffee, snacks, and my relentless reminders that automation should earn its keep.

| System | Hardware & Installation | Software Fees | Best Fit |
|---|---|---|---|
| Custom Logo Things AI Camera Suite | $12,000 per lane + $600 anchors | $1,500/month + $5,500 custom training | High-volume corrugate lines needing label verification |
| Port of Baltimore Sensor Fusion Platform | $9,000 for lidar rig + $1,200 weight cells | $0.18/validation or $750/month | Palletized freight with variable cushioning |
| Querétaro Cloud Swipe | $4,200 for tablets + mounts | $2,100 license + overage at $0.05/scan | Specialty goods with irregular packaging |

Remember to include installation labor hours and lock in a quote that separates capital from operating spend so finance teams can forecast the real total cost, and keep reminding them that AI-driven packing validation tools offer different ROI profiles depending on whether they validate every item or just every pallet. I’m always the uneasy translator between ops and finance, and that’s when my experience feels most useful (and a little exhausting).
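As a rough sketch of that split, the snippet below totals the camera suite figures quoted for the Everett floor for a single lane, using one-time versus recurring as a stand-in for capital versus operating spend. It assumes the 80 installation hours are costed separately from the $3,600 training fee, and the hourly labor rate is a hypothetical input; how each item is actually classified is a decision for finance.

```python
ONE_TIME = {
    "hardware_per_lane": 12_000,
    "onsite_training": 3_600,
    "custom_ai_training": 5_500,
    "concrete_drilling": 1_200,
    "dust_control": 500,
}
MONTHLY_SUBSCRIPTION = 1_500
INSTALL_HOURS = 80


def first_year_cost(labor_rate_per_hour, months=12):
    """Return (one_time, recurring, total) for a single lane."""
    one_time = sum(ONE_TIME.values()) + INSTALL_HOURS * labor_rate_per_hour
    recurring = MONTHLY_SUBSCRIPTION * months
    return one_time, recurring, one_time + recurring


one_time, recurring, total = first_year_cost(labor_rate_per_hour=50)  # hypothetical rate
print(one_time, recurring, total)  # 26800 18000 44800
```

Keeping the two buckets separate makes it obvious that the subscription overtakes the hardware price within the first year.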

Process Timeline for rolling out AI-driven packing validation tools

The typical journey starts with a two-hour kickoff needs assessment, followed by a factory audit with line engineers and a safety walkthrough—we schedule these at the Everett facility to coincide with the third shift so the plant manager can see the system without disrupting the high-volume day shift. I’ve learned to keep headphones handy for that walkthrough because sometimes the third shift’s playlist clashes with the AI alerts (true story: the alarms started dancing to techno once).

After the audit we move into pilot deployment on one shift; the Easton facility pilot took six weeks, including nights to install hardware without halting production, and we added a two-week buffer for tuning alarms, which is the same margin Custom Logo Things keeps before expanding to a second shift. I still chuckle remembering how the night crew named the AI “Inspector Gadget” after it refused to cooperate without a firmware update.

During the pilot we collect data for algorithm training, usually capturing 1,000 validation events per SKU before retuning the AI confidence thresholds, and we stage phased rollouts across shifts only after QA teams sign off on the false-positive rate and the line leaders confirm they can handle the alerts. My calm demeanor disappears when somebody wants to skip that sign-off phase; no jokes, those are the moments I get slightly dramatic.
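The sign-off conditions above can be written down as a simple gate. This is a sketch under assumptions: the function name and sample counts are hypothetical, and the 5 percent false-positive ceiling is borrowed from the KPI target suggested later in this article, not a fixed rule.

```python
def rollout_gate(events_per_sku, false_positive_rate, line_leaders_confirmed,
                 min_events=1_000, max_false_positive_rate=0.05):
    """Return (ready, blockers) for expanding the pilot to more shifts."""
    blockers = []
    short = sorted(sku for sku, count in events_per_sku.items() if count < min_events)
    if short:
        blockers.append(f"need more validation events for: {', '.join(short)}")
    if false_positive_rate >= max_false_positive_rate:
        blockers.append(
            f"false-positive rate {false_positive_rate:.1%} "
            f"not under {max_false_positive_rate:.0%}"
        )
    if not line_leaders_confirmed:
        blockers.append("line leaders have not confirmed they can handle the alerts")
    return (not blockers, blockers)


ready, blockers = rollout_gate(
    events_per_sku={"SKU-1001": 1_250, "SKU-2040": 640},  # hypothetical counts
    false_positive_rate=0.031,
    line_leaders_confirmed=True,
)
print(ready, blockers)
```

Returning the list of blockers, not just a yes or no, gives the person who wants to skip sign-off something concrete to argue with.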

Checkpoints include hardware staging, data governance sign-offs, operator training sessions, and establishing feedback loops; packers annotate what the AI flagged, and these annotations feed back into the training dataset so the model evolves with each new carton or insert pattern. Honestly, I think those feedback loops are where the human voice keeps the AI grounded; without them, we’re just letting a camera narrate our failures.

Keeping that cycle tight is essential because AI-driven packing validation tools require monitored thresholds: if the line switches to a heavier corrugate grade like the 460gsm artboard or a new polybag, that annotation loop prevents a flood of unnecessary alarms. And if the alarms do flood, expect me to show up with a 12-ounce coffee and a detailed (sometimes sarcastic) memo for the team.

How to Choose AI-driven packing validation tools for your floor

Decision criteria should include accuracy thresholds, integration effort, transparency of AI reasoning, capacity to handle custom packaging inserts, and vendor responsiveness; for example, we required any platform to show how it handled the 72- by 48-inch hazmat crates at the Seattle export dock before even talking to procurement. I still hear the procurement analyst whisper, “I didn’t know we needed hazmat-level evidence,” and I reply, “Welcome to real manufacturing.”

Pilot shifts are essential: run the new tools during a live production window, audit baseline error rates before the AI arrives, and track alerts on the supervisor’s dashboard to ensure the operators trust both the data and the interface, which in our experience is the hardest part of adoption. (Also, don’t let anyone tell you the dashboard doesn’t matter—one slip and the operators start calling the AI “that noisy cousin.”)

Review vendor roadmaps carefully, including mandatory updates and alignment with sustainability goals—does the tool reduce rework waste or just add another alarm to chase? We prefer systems that track return-to-sender incidents so we can measure the environmental impact in line with the EPA guidelines, targeting a four-ton reduction from our Portland facility alone. Honestly, I think sustainability targets are the only reason some execs tolerate another monthly meeting.

Have your cross-functional team evaluate how each solution integrates with existing systems; the best ones at Custom Logo Things offered APIs for our ERP and Manhattan MMS, while others needed custom scripts that cost an extra 120 hours of engineering time. I keep a spreadsheet that feels equal parts scoreboard and confession diary—every integration story has a chapter where we learn patience.

My honest opinion is that AI-driven packing validation tools only deliver when you combine technical capability with human oversight checks that are easy for packers and supervisors to interpret. Without that combo, the AI becomes a glorified alarm bell, and nobody enjoys the dinging after midnight.

Our Recommendation and Next Steps for AI-driven packing validation tools

Actionable next steps include scheduling an onsite validation of current packing errors, preparing a stack of 24 failure examples for any demo, and assigning a cross-functional team to review AI confidence scores daily; we set those reviews for 7:30 a.m. before the afternoon shift because data is freshest after the second shift closes. (Also, yes, I still bring donuts—call it bribery, call it morale building.)

Set success metrics before procurement—target a false-positive rate under five percent, reduce return-to-sender incidents by at least 40 percent, and quantify headcount freed for strategic tasks like new product launches; no vendor should ship hardware before these KPIs are agreed upon. I’ve watched too many pilots start without metrics, and honestly, that’s the quickest route to disappointment.
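The return-to-sender target is easy to check once a baseline exists. A minimal sketch, assuming incidents are counted over comparable periods before and after the pilot; the function name and the counts are hypothetical.

```python
def return_reduction(baseline_incidents, current_incidents, target=0.40):
    """Return (reduction, met_target) for return-to-sender incidents."""
    if baseline_incidents <= 0:
        raise ValueError("a positive baseline is needed to measure a reduction")
    reduction = (baseline_incidents - current_incidents) / baseline_incidents
    return reduction, reduction >= target


# Hypothetical counts for one quarter before and one quarter after the pilot.
reduction, met = return_reduction(baseline_incidents=120, current_incidents=66)
print(f"{reduction:.0%}", met)  # 45% True
```

The guard on the baseline is the practical point: without a pre-pilot count, there is nothing to measure the 40 percent against.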

Encourage continuous review: revisit tool performance quarterly, adjust thresholds, and iterate on the AI training dataset so AI-driven packing validation tools stay aligned with evolving SKUs, packaging innovations, and the feedback from the factory floor. My team keeps a “what changed this quarter” folder, and flipping through it feels like reading a thriller: each change either saves the day or introduces something we laugh about later.

My final recommendation comes from run-in-the-field experience at multiple plants—the blend of cameras, sensors, and human annotation is the fastest path to reliable automation, and sticking with it means we free QA operators to focus on the next big packaging sprint. Sometimes I’m convinced the AI needs therapy, but that sentiment melts when we see a perfect pallet roll off the line.

How do AI-driven packing validation tools detect packaging defects?

They compare camera scans and sensor outputs against a library of approved packaging states, flagging inconsistencies in seal integrity, label placement, or cushioning coverage with reference to documents from packaging.org and ISTA guidelines. I love using those comparisons as proof points when someone claims “everything was perfect yesterday.”
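A heavily simplified sketch of that comparison follows. Real suites use learned vision models, not fixed tolerances, so treat this as an illustration of the idea only; the feature names, targets, tolerances, and SKU identifier are all hypothetical.

```python
# Approved packaging state per SKU: feature -> (target, tolerance).
APPROVED = {
    "SKU-1001": {
        "label_offset_mm": (0.0, 3.0),
        "seal_width_mm": (12.0, 1.5),
        "cushion_coverage_pct": (95.0, 5.0),
    }
}


def find_deviations(sku, measured, library=APPROVED):
    """Return the measured features that are missing or outside tolerance."""
    deviations = {}
    for feature, (target, tolerance) in library[sku].items():
        value = measured.get(feature)
        if value is None or abs(value - target) > tolerance:
            deviations[feature] = value
    return deviations


scan = {"label_offset_mm": 1.2, "seal_width_mm": 9.8, "cushion_coverage_pct": 96.0}
print(find_deviations("SKU-1001", scan))  # {'seal_width_mm': 9.8}
```

Only the seal width falls outside its tolerance here, so the alert can name the specific feature, which is far more useful on the floor than a bare "defect".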

What are the typical implementation steps for AI-driven packing validation tools?

Start with an audit of packing lines, install cameras/sensors, collect baseline data, train models on representative SKUs, and monitor false positives before full deployment, following the workflow we enforced in the Port of Baltimore pilot. Honestly, I think forgetting any of these steps is how people end up with dashboards full of angry alerts.

Do AI-driven packing validation tools work with irregular or custom-shaped products?

Yes, provided you train the model with enough sample variants and capture the custom shape profiles during the pilot phase, especially on tools supporting multispectral imaging as we documented in river-rack builds. (I still grin thinking about the day the AI explained an odd shape as “probably a hat,” which fooled absolutely no one.)

Can we integrate AI-driven packing validation tools with existing warehouse systems?

Most vendors offer APIs or connectors for WMS/TMS systems, allowing validations to feed into dispatch queues or quality dashboards for smooth communication with operations managed by Manhattan MMS or similar platforms. I keep a cheat sheet of integrations, because every time someone adds a new third-party system, I brace for the custom script saga.

What ROI should we expect from AI-driven packing validation tools?

Look for reduced rework, fewer shipping errors, and improved customer satisfaction; many sites see ROI within three months when human intervention costs are replaced with automated checks, and sustainability reporting to the EPA is an added bonus. Sometimes ROI arrives sooner than expected, and I’ll be the first to celebrate with the team.

Before sealing any deal, remind stakeholders that the best systems are measured not just by algorithms but by how they integrate with our factory rhythms, and those who balance data with human judgement find that AI-driven packing validation tools finally deliver the durability we promised clients months earlier. I say this after hundreds of conversations where we translated tech-speak into practical actions.

One last honest note: our clients who committed to the quarterly review cycle, backed by structured feedback loops and the right KPI targets, always outpace those who simply install hardware and hope for the best. I get a little giddy when that happens—call it a nerdy fixation on metrics.

Moving forward, keep measuring, annotating, and iterating so the toolset continues to earn its spot on the floor. I promise, the machines appreciate the effort as much as the people feeding them data.

Actionable takeaway: document each anomaly, review the confidence scores weekly, assign a subject-matter lead to update the training data, and keep the floor crew involved so the AI-driven packing validation tools you deploy truly earn their place in your automation lineup.

All of these insights come from real installations, real negotiations, and real factory floors where the most precise packaging wins the day. I wouldn’t trust anything less, and frankly, neither should you.