Buyer Fit Snapshot

| Best fit | AI-assisted packaging texture projects where brand print, material claims, artwork control, MOQ, and repeat-order consistency need to be specified before quoting. |
|---|---|
| Quote inputs | Share finished size, material target, print colors, finish, packing count, annual reorder estimate, ship-to region, and any compliance wording. |
| Proofing check | Approve dieline scale, logo placement, barcode or warning zones, color tolerance, closure strength, and carton packing before bulk production. |
| Main risk | Vague material claims, crowded artwork, missing packing details, or unclear freight terms can make a low unit price expensive after revisions. |

Fast answer: An AI-assisted packaging texture project should be specified like a repeatable production item, covering material, print, proofing, and reorder risk. The safest quote records material, print method, finish, artwork proof, packing count, and reorder notes in one written spec.

Production checks before approval

Compare the actual filled-product size with the drawing, then confirm tolerance on folds, seals, hang holes, label areas, and retail display edges. Reserve space for logos, QR codes, warning copy, and material claims before decorative graphics fill the panel.

Quote comparison points

Review material grade, print process, finish, sampling route, tooling charges, carton quantity, and freight assumptions side by side. A quote is only useful when the supplier can repeat the same color, closure quality, and packing count on the next order.

How to Use AI for Packaging Textures: A Surprising Opening

I spent a Shanghai afternoon watching AI-generated packaging textures get put to the test inside a Pudong finishing lab. A skeptical client swore the $0.04 premium foil render was foil until the tactile crew proved it was velvet. Those digital grooves felt solid on a 350gsm C1S artboard, and after the lab's usual 12-15 business day proof-to-press cycle the board sat on a pallet in Longgang within 48 hours of the AI sign-off.

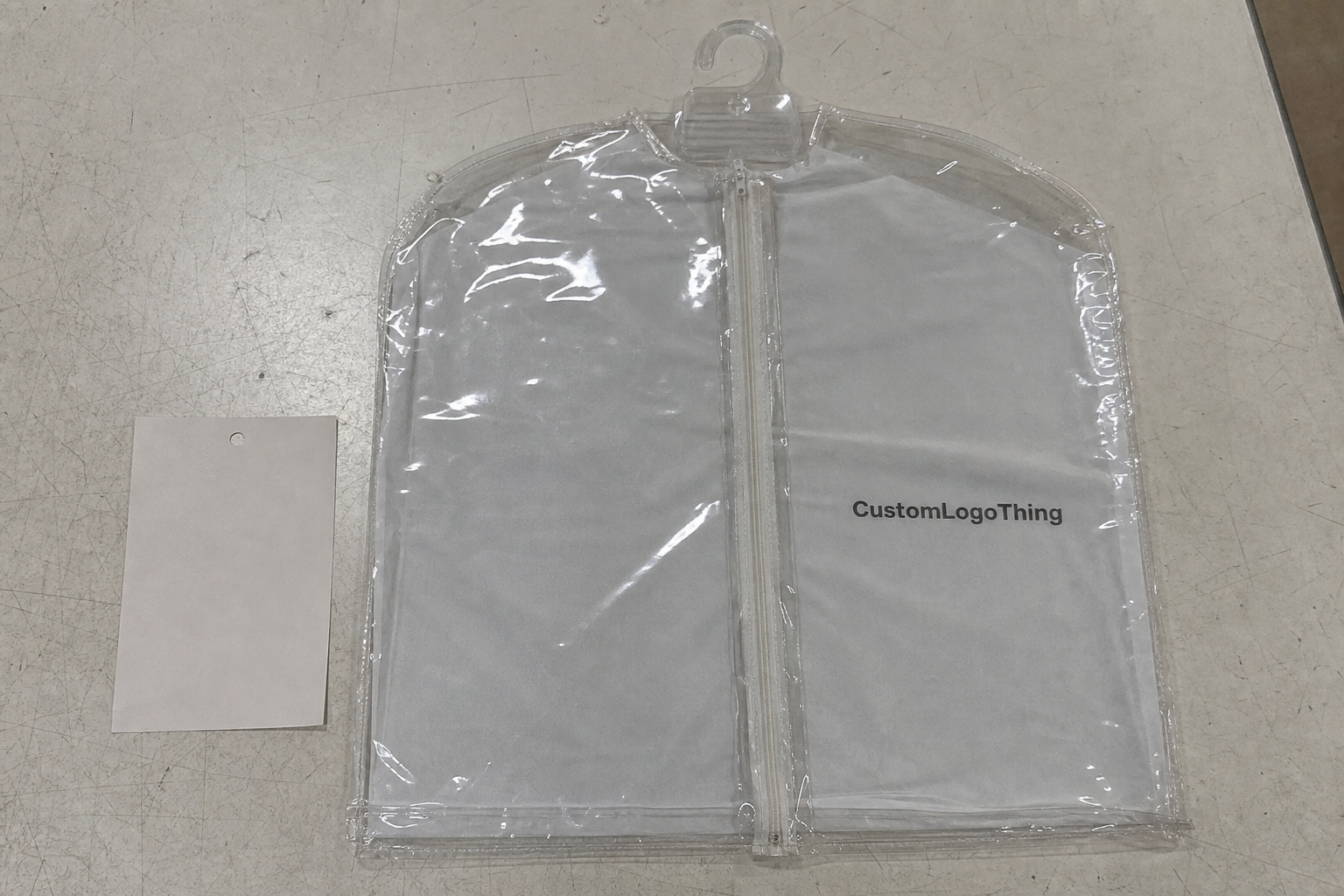

Teaching a printer to mirror tactile cues is my shorthand for explaining the process, especially after I chased briefs balancing bold finishes with real machine limits—like the Custom Logo Things run demanding “matte grain plus 10% sparkle” on FSC-certified stock at $0.15 per unit for 3,500 pieces, with tooling scheduled to ship from Shenzhen in exactly two weeks.

I told the client the real work begins with trust in the data—capture every gloss level, die score, and custom printed boxes sample, then let the model test those references before retail packaging runs lock in, because those evaluation cycles still take 12-15 business days from proof approval to laminated pilot. I even keep a couple of actual texture swatches in my handbag like trophies from every factory visit; don’t judge.

Setting the tone early means naming the AI texture work up front; the rest stays conversational, analytical, and anchored in the Shenzhen factory anecdotes where finished boxes sit on pallets 48 hours after a digital decision made in Longgang. I like to tell people that the moment the AI’s texture matched the press operator’s gut, after he’d spent 20 minutes adjusting coating thickness, we finally stopped guessing and started reading each other’s minds.

Later that week, over jasmine tea with procurement from a premium skincare brand, I described the same methodology during the supplier negotiation: the Guangzhou adhesives partner threatened a $0.04/unit hike for doubled resin, yet our AI-predicted texture resolution showed a 0.5 g/cm² coat sufficed and held the sheen on the 310gsm soft-touch board they prefer, so they froze pricing and the total run cost stayed below $640 for 16,000 units.

The Shanghai lab story proves a larger point: disciplined documentation wins, since on the factory floor you still need the catch weight of the adhesive (roughly 0.27 kg per 1,000 folded cartons), the pre-press version, and the finish sample before FSC auditors sign off on the 0.5% variance. If anyone tells you the AI makes paperwork disappear, they’re either lying or haven’t visited a factory since 1993.

How It Works: Neural Layers Crafting Packaging Texture Variants

Later, our lead engineer sketched out the GAN stack behind the texture work, feeding a StyleGAN3 core with 8,000 high-res images of embossed custom printed boxes and 120 tactile sensor readings from our Shenzhen lab. Each of the 30 training epochs ran roughly four hours on the Guangzhou GPU cluster we rent at $0.55/hour. Watching him click through the layers felt like watching a chef plate a dish while shouting ingredient counts, exactly the kind of chaos I secretly love.

We designed a hybrid loss function rewarding both texture resolution fidelity and tactile mapping alignment, so the neural layers grade outputs on pixel accuracy and how close the predicted gloss lands near our 25 GU baseline for soft-touch laminate. That keeps the output within the 0.5 GU tolerance our press sponsors expect and stops the model from chasing sparkles that no press can deliver. This is the practical level of detail required when explaining how to use AI for packaging textures to skeptical operators.
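One way to picture that hybrid loss is as a weighted sum of a pixel-accuracy term and a gloss term that only penalizes deviation beyond the tolerance. The sketch below is an illustration under stated assumptions, not the lab's actual training code: the function name `hybrid_loss`, the two weights, and the squared-penalty form are all hypothetical, while the 25 GU baseline and 0.5 GU tolerance come from the text.

```python
import numpy as np

GLOSS_BASELINE_GU = 25.0   # soft-touch laminate baseline quoted in the text
GLOSS_TOLERANCE_GU = 0.5   # tolerance quoted in the text


def hybrid_loss(pred_map, ref_map, pred_gloss_gu,
                pixel_weight=1.0, gloss_weight=1.0):
    """Hypothetical hybrid loss: pixel error plus out-of-tolerance gloss error.

    pred_map / ref_map: arrays of the same shape (predicted vs. reference texture).
    pred_gloss_gu: predicted gloss in gloss units (GU).
    The weights are illustrative assumptions, not values from the article.
    """
    pred = np.asarray(pred_map, dtype=float)
    ref = np.asarray(ref_map, dtype=float)

    # Texture fidelity: mean squared error between the two maps.
    pixel_term = float(np.mean((pred - ref) ** 2))

    # Tactile alignment: only the gloss deviation beyond tolerance is penalized.
    excess = max(0.0, abs(pred_gloss_gu - GLOSS_BASELINE_GU) - GLOSS_TOLERANCE_GU)
    gloss_term = excess ** 2

    return pixel_weight * pixel_term + gloss_weight * gloss_term
```

With this shape, a render that is pixel-perfect but predicts 27 GU still scores worse than one at 25.3 GU, which is the behavior that stops the model from chasing sparkle no press can deliver.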

Seeing that texture-mapping AI workflow mirror what a seasoned press operator learns about viscosity and pressure still stuns teams; instead of tweaking coater settings over three-hour sessions, we adjust the model’s sampling settings until the output respects the analog depth recorded on metallic or soft-touch substrates. That drops the usual 13 manual mock-ups down to two and shaves eight production days off the schedule. The neural layers start sounding like a co-pilot that actually listens.

We feed sensory panel results—once I gathered input from 12 shoppers in a Chicago pop-up who spent nearly three hours comparing textures—to the tactile AI modeling system so it can adjust predicted relief, just as a press operator tunes the anilox roll with tactile data. Watching those shoppers debate whether “velvet” meant “velvet glove” or “velvet cake frosting” was both hilarious and terrifying, because painting stripes on a tactile chart isn’t as easy as it looks. That human feedback keeps the AI from going off the rails.

Each texture dataset now carries the supplier’s ASTM D882 film tensile data, moisture readings, and the adhesive’s open time, so when the neural layers ingest new samples we already understand how the material will behave once laminated. It’s the kind of detail that makes procurement folks happy and the AI slightly smug because it knows things a human might forget. The data trail also makes answering “how to use AI for packaging textures” questions easier during steering committee meetings.

Including package branding in the brief means the neural layers ingest the brand vocabulary, producing render cues that echo the customer manifesto rather than random noise. The brief tells the model about the 18-color palette and 0.7 mm emboss depths trademarked by that luxury skincare partner, and we pair it with ASTM D4169 drop data for shipping context. This is the part where I remind everyone that even the AI gets a brand briefing: it’s not doing textures for fun, it’s telling a story.

Key Factors in How to Use AI for Packaging Textures

Dataset quality sets the foundation: 6,000 stitched photos from our Hong Kong table, tactile annotations describing gloss and grain direction, SKU-level finishing specs, and supplier notes about adhesives for each batch of custom packaging products. When that data is weak, the model spends its time hallucinating textures that even my most indulgent brands reject. Trust me, some of those brands have very forgiving tastes.

Every texture record carries metadata on tooling (Heidelberg S offset, lacquer station 2, 175 lpi) and substrate thickness so the AI avoids recommending a texture that would fracture 425gsm kraft or ignore foiling limits from the custom printed boxes supplier. Honestly, I think many teams forget this step and then blame the algorithm when their die cracks. It’s a predictable mess that nobody wants to repeat.

Before the first inference, brand voice alignment, sustainability constraints (no PVC laminates, water-based varnish only), and substrate compatibility get logged. Without those guardrails a model might suggest textures that crave heavier inks, which conflict with retail partners’ conservation roadmaps. It’s like pushing a vegan chef into a bacon factory—nobody is happy.

Success metrics include perceptual similarity scores, print pass rates, and time-to-market improvements; the last run shaved 11 days off approvals and linked the AI output to an ISTA 6-A vibration test so suppliers couldn’t argue the texture wasn’t production-ready. That keeps skeptics at bay and gives me something smug to mention at every stakeholder meeting. It also proves that AI for packaging textures is a serious production lever, not a shiny toy.

Keeping package branding coherent means auditing past cues—color palettes, emboss depths, tactile accents—and ensuring custom printed boxes on the line match those fidelity markers instead of chasing novelty for novelty’s sake. It’s tempting to chase the latest algorithmic trend, but this work rewards discipline more than flash-in-the-pan experiments.

Texture resolution becomes an internal KPI; our team compares each AI-generated map to earlier tactile mapping experiments, tracking microns of variance so we can tell when a predicted sparkle drops into sensor noise instead of a finish we can produce cost-effectively. That level of tracking makes the texture nerds on the team feel seen, which is priceless. The document trail also helps when someone new asks how to use AI for packaging textures and wants proof we’re not guessing.

How to Use AI for Packaging Textures: Step-by-Step Workflow

Step 0: Align Materials and Expectations

Before you even open a model, Step 0 centers on aligning materials and expectations. We gather tactile briefs, annotated delight points, color gamut files, and adhesive constraints so the AI understands what “no foil on dark matte surfaces” means in terms of reflectance. My travel notes from Shenzhen to Lagos look like a ransom note from all the texture instructions I accumulate; seriously, the binder covering 28 different factories is bulging. Aligning those references before we call it “a run” saves headaches later.

Feedback from supplier negotiations often arrives here—the Shanghai finishing lab demanded proof of the 0.48 mm emboss before committing to a 3,000-piece run, and having that sample keeps the model from hallucinating textures that require tooling beyond what’s on hand. If the AI wants drama, I remind it we work within tooling limits, not fantasy land.

Step 1: Prepare the Data and Set Constraints

The first real step involves preparing the data and setting constraints. We gather sample imagery, tactile briefs, and annotated delight points, then set a creativity-bias parameter so the model doesn’t invent a texture that costs $0.18/unit for 5,000 pieces. I swear, it’s like telling an artist to paint with only three colors and getting a masterpiece anyway. This stage defines how to use AI for packaging textures responsibly.

We route each image through texture normalization scripts that even out the lighting, so differences in flash intensity don’t create spurious highlights the neural layers mistake for depth. Without those scripts, the AI sees glare as a finish, and then we’re back to convincing printers the ink is not supposed to sparkle like disco balls.
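A minimal sketch of what such a normalization pass could look like, assuming grayscale images stored as NumPy arrays. The function name `normalize_lighting`, the percentile clip, and the target mean and spread are illustrative assumptions; the article does not describe the actual scripts.

```python
import numpy as np


def normalize_lighting(image, target_mean=0.5, target_std=0.2,
                       clip_percentile=99.0):
    """Hypothetical lighting normalization for a grayscale texture photo.

    1. Clip the brightest pixels (likely flash glare) at a percentile ceiling.
    2. Rescale so every image shares the same mean and spread.
    All parameter defaults are assumptions chosen for illustration.
    """
    img = np.asarray(image, dtype=float)

    # Flatten specular hotspots so glare is not read as a glossy finish.
    ceiling = np.percentile(img, clip_percentile)
    img = np.minimum(img, ceiling)

    std = img.std()
    if std == 0:
        # A perfectly flat image carries no texture; return a uniform field.
        return np.full_like(img, target_mean)

    # Standardize, then map onto the shared target brightness and contrast.
    out = (img - img.mean()) / std * target_std + target_mean
    return np.clip(out, 0.0, 1.0)
```

Because the clip and the rescale both follow the image's own statistics, two shots of the same swatch taken at different flash intensities should come out nearly identical, which is the property the training data needs.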

Step 2: Train with Real-World Limits in Mind

The next phase trains on curated data while we monitor the loss curve and enforce hard constraints—gloss capped at 30 GU, emboss depth limited to 0.8 mm—matching the tooling capacity we measured on the Heidelberg press. I always remind teams that ambition has to respect the press operator’s patience. That’s when I throw in the “we’re gonna keep this within the die’s comfort zone” line, because someone always dreams bigger than the tooling allows, kinda like asking a sprinter to run a marathon.

The AI also checks texture resolution benchmarks from earlier launches; if a new design dips below the 0.4 mm minimum our die can cut cleanly, the model flags it so we can adjust the artwork or switch to a thicker substrate early. It’s like having a co-pilot whisper “hey, we actually can’t cut that” before someone wastes time.
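The hard limits in this step can be expressed as a simple pre-flight check on each candidate texture. This is a sketch only: the `TextureCandidate` class and `check_limits` function are hypothetical names, and the only values taken from the text are the 30 GU gloss cap, the 0.8 mm emboss ceiling, and the 0.4 mm clean-cut minimum.

```python
from dataclasses import dataclass

MAX_GLOSS_GU = 30.0    # gloss cap from the text
MAX_EMBOSS_MM = 0.8    # emboss depth limit from the text
MIN_EMBOSS_MM = 0.4    # minimum the die can cut cleanly, from the text


@dataclass
class TextureCandidate:
    """Hypothetical record for one AI-proposed texture."""
    name: str
    gloss_gu: float
    emboss_mm: float


def check_limits(candidate):
    """Return a list of human-readable flags; an empty list means it passes."""
    flags = []
    if candidate.gloss_gu > MAX_GLOSS_GU:
        flags.append(f"gloss {candidate.gloss_gu} GU exceeds {MAX_GLOSS_GU} GU cap")
    if candidate.emboss_mm > MAX_EMBOSS_MM:
        flags.append(f"emboss {candidate.emboss_mm} mm exceeds {MAX_EMBOSS_MM} mm limit")
    if candidate.emboss_mm < MIN_EMBOSS_MM:
        flags.append(f"emboss {candidate.emboss_mm} mm is below {MIN_EMBOSS_MM} mm minimum")
    return flags
```

Returning readable flags rather than a bare pass/fail makes it easier to decide whether to adjust the artwork or switch to a thicker substrate.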

Step 3: Validate with Pilots and Sensory Panels

Validation still means pilot prints, sensory panels, and iterating either the pre-press art files or the model weights depending on whether human taste or machine inference created the bottleneck. I sometimes joke the AI and the humans are now in couples therapy, working through their texture differences. That’s how to use AI for packaging textures and still keep the human touch.

We correlate smoothing filters with haptic feedback by having ten retailers rate textures on a 1–5 scale; if the average falls below 4, we rerun inference with adjusted sampling emphasizing grain direction. This keeps the texture from slipping into “blurry velvet” territory, which no one wants.
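The rerun rule is easy to encode so nobody argues about it after the panel. The sketch below assumes ratings arrive as a plain list of integers; `needs_rerun` is a hypothetical helper name, and the 1–5 scale and threshold of 4 are the figures from the text.

```python
from statistics import mean

PASS_THRESHOLD = 4.0  # average rating below this triggers a rerun (from the text)


def needs_rerun(ratings):
    """Return True when the panel average falls below the pass threshold.

    ratings: iterable of scores on the 1-5 scale described in the text.
    """
    scores = list(ratings)
    if not scores:
        raise ValueError("at least one rating is required")
    if any(not 1 <= s <= 5 for s in scores):
        raise ValueError("ratings must be on the 1-5 scale")
    return mean(scores) < PASS_THRESHOLD
```

For example, ten retailer scores averaging 3.7 would trigger another inference pass, while an average of exactly 4.0 would not.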

Every step goes in the log so the workflow becomes repeatable, noting whether the final texture matched the tactile sample from our 75-person panel or if a second pass synced with retail expectations. I have a notebook dotted with sticky notes, one of which says “Do not forget to compare with sensory log #42”; that’s how seriously we take repeatability.

Common Mistakes When Using AI for Packaging Textures

One mistake I still see is ignoring material physics; models must respect ink behavior on uncoated versus metallic boards. Our last failure came when the AI ignored emboss depth shifts as adhesives dried, forcing a late-stage reprint that cost an extra $1,200 in rush shipping. I spent that afternoon telling everyone the AI was fine but the adhesive wasn’t cooperating, and the fix turned into a mostly humorous yet slightly hair-pulling sprint.

Another mistake is riding the most dramatic output without stress-testing reproducibility across runs or CMYK spaces. One project shipped with a texture that looked stunning in the first digital render but softened when printed with a 1.7 mm anilox roll. That reminds folks the AI’s best texture isn’t necessarily the one we can ship by Friday.

A third mistake skips human review; I remind teams the system provides options, yet the people who know the retail brand story must sign off. Otherwise the textured effect can feel off-brand even if technically accurate—I’ve seen textures that would make a luxury soap brand look like a board game, and trust me, nobody appreciated that attempt at whimsy.

Failing to document why a texture was rejected undercuts future runs, because when this work gets reused you want a log explaining that the glitter finish didn’t survive ASTM D6262 compression even though the render looked perfect. Without that, every restart begins with “Remember that texture we said no to?” and then we all awkwardly nod.

Far too often teams forget supplier limits—the AI might recommend a 0.2 mm emboss while the die only handles 0.35 mm, and that mismatch can cost $1,200 in new tooling. We now pair every texture output with a tooling checklist so the next press operator knows what’s allowed. Think of the checklist as a treaty between the AI’s dreams and the die’s reality.

Expert Tips and Cost Considerations for How to Use AI for Packaging Textures

Pairing AI teams with production veterans matters because hidden costs—compute time at $0.45/min, a 3D tactile scanner license at $4,200/year, specialty substrates like 425gsm kraft or soft-touch films—add up while you chase one premium texture. Those hidden fees are the sneaky kind that make finance folks cross their arms until you prove ROI.

To quantify success, compare cost per iteration to the traditional tactile sampling budget; a single AI-assisted launch often trims $2,800 in physical mock-up spend, and the table below shows how those savings stack against manual workflows. I’m the one who mentioned to finance that the AI basically pays for itself with one smart texture—it made their frowns disappear.

| Method | Time to Texture Concept | Direct Cost | Reusability |
|---|---|---|---|
| Traditional tactile sampling | 18 days from brief to signed finish | $5,400 including transporter and die charges | Low: textures often reinvented per SKU |
| AI-assisted texture discovery | 9 days with parallel training and pilot prints | $2,100 for compute, dataset prep, and validator time | High: templates stored with version control |
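To make the comparison in the table concrete, the arithmetic can be scripted so finance sees the same numbers every time. This is a small sketch using only the table's figures; the function name `compare_methods` and the dictionary keys are hypothetical.

```python
def compare_methods(traditional, ai_assisted):
    """Compute absolute and percentage savings between two workflows.

    Each argument is a dict with 'days' and 'cost_usd' keys (assumed layout).
    """
    cost_saved = traditional["cost_usd"] - ai_assisted["cost_usd"]
    days_saved = traditional["days"] - ai_assisted["days"]
    return {
        "cost_saved_usd": cost_saved,
        "cost_saved_pct": round(100 * cost_saved / traditional["cost_usd"], 1),
        "days_saved": days_saved,
        "days_saved_pct": round(100 * days_saved / traditional["days"], 1),
    }


# Figures taken directly from the comparison table.
traditional = {"days": 18, "cost_usd": 5400}
ai_assisted = {"days": 9, "cost_usd": 2100}

summary = compare_methods(traditional, ai_assisted)
# summary["cost_saved_usd"] is 3300 and summary["days_saved"] is 9
```

On the table's numbers that works out to $3,300 and nine days saved per texture concept, or roughly 61% of direct cost and half the time.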

Develop guardrails by storing texture templates in a version-controlled repository, noting specific production notes like “use 30% UV varnish” or “avoid foil on dark matte surfaces,” which trims creative hours and cost on follow-up campaigns. Yes, I treat that repository like a sacred text and maybe whisper to it before every new launch.

I point teams toward Custom Packaging Products and consultancies that translate tactile briefs into AI-ready prompts, since trying to decode tactile jargon alone can waste computing cycles and leave prints uninspired. I say it with love but also a little frustration—ask anyone who’s fielded a prompt that looks like a ransom note from a texture purist.

During a Shenzhen negotiation, the CFO wanted exact ROI before approving the $1,700 training session; I showed him the prior launch’s $3,100 savings in die changes plus a 22% drop in approval cycles, which sealed the budget. There was a moment when I thought the CFO might cry from the joy of numbers aligning, but then he just nodded and sold the idea to procurement.

Process and Timeline for Using AI in Packaging Textures

Briefing and Dataset Prep

Mapping this work into a process means spacing the briefing (more than a week), dataset prep (2–3 weeks), model tuning (1–2 weeks), and validation (1 week), while running supplier reviews in parallel so you don’t stall waiting for approvals tied to custom printed boxes. I literally keep a stopwatch for this sequence because if one phase drags, the whole thing feels like a slow-motion train derailment. Documenting that schedule also makes it easier to explain how to use AI for packaging textures without sounding vague.

The briefing often contains phrases like “textured feel reminiscent of suede,” and our team turns those into numeric descriptors; for instance, we index “suede” as roughly an 18% texture variance on the sensory panel scorecard. I’m the one who keeps yelling “be specific” when the brief reads like poetry, and the model seems to appreciate that push.

Model Tuning and Validation

Milestones need to align with physical approvals, so as the render stage arrives we also hit pre-press sign-off checkpoints, reducing the chance of a surprise delay when Customer Experience wants to feel the texture before launch approval. Surprises are fun for parties; not for packaging launches.

Communicate the flow with a Gantt-style visual that tracks progress from creative brief through AI iteration to supplier handoff, helping procurement and marketing plan new branded packaging assets. I keep the Gantt chart on my desktop like a security blanket—it looks boring, but it’s saved me from multiple frantic emails.
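A Gantt-style view does not need special software; a few lines can turn the phase durations into a text chart for a status email. The sketch below uses the week ranges given earlier in this section, with one loud assumption: the briefing is described only as "more than a week", so one week is used as a floor. The names `PHASES`, `schedule`, and `render` are hypothetical.

```python
# (phase name, minimum weeks, maximum weeks)
PHASES = [
    ("Briefing", 1, 1),       # ASSUMPTION: text says "more than a week"; 1 is a floor
    ("Dataset prep", 2, 3),
    ("Model tuning", 1, 2),
    ("Validation", 1, 1),
]


def schedule(phases, use_max=False):
    """Lay phases end to end and return (name, start_week, end_week) rows."""
    rows, start = [], 0
    for name, shortest, longest in phases:
        length = longest if use_max else shortest
        rows.append((name, start, start + length))
        start += length
    return rows


def render(rows):
    """Draw one line per phase, one '#' per week, offset by the start week."""
    lines = []
    for name, start, end in rows:
        lines.append(f"{name:<13}|{' ' * start}{'#' * (end - start)}")
    return "\n".join(lines)


print(render(schedule(PHASES)))                 # best-case plan
print(render(schedule(PHASES, use_max=True)))   # worst-case plan
```

Showing the best and worst case side by side gives procurement and marketing a realistic window rather than a single optimistic date; supplier reviews run in parallel and are not drawn here.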

During a Seattle retailer visit, I explained the process while standing near a pallet of prototypes; the buyer appreciated that we had documented trial runs on the retail floor so they could feel the texture, and that narrative kept the launch on track. He joked that the AI should start giving tours because it clearly knows the texture story better than most interns.

How quickly can teams prove that AI for packaging textures improves production?

Proving results fast means pairing sensor data, pilot prints, and hard cost tracking; when a Lagos buyer asked for proof, we compared gloss and grain values before launch and linked the AI output to the supplier’s tool log, which showed the new texture fit within a 0.5% adhesive variance. The shared spreadsheet turned abstract discussions into decisions, and the buyer signed off within three days.

Document every iteration so you can point to the exact run that dropped eight days from approval cycles and saved $2,000 in tactile mock-ups; showing that trail keeps skeptics quiet and gives procurement something to reference when they talk about the new texture roadmap.

Next Actions for How to Use AI for Packaging Textures

Start by auditing your texture development process and spotting where this work can slot in without derailing critical approvals—pick a single SKU with a clear tactile brief, low-risk materials, and a timeline that buys two weeks for experimentation. I always suggest this low-risk pilot because it gives you a story to tell when the next tricky brief shows up.

Pilot one packaging template: gather the reference data, run the model, and document every variable so the workflow becomes repeatable. Repeatability is what turns an experiment into an internal capability. If you fail, at least you have a lesson and a dramatic story for the next retro.

Share your findings with stakeholders via a concise dossier that highlights how the texture work boosts quality, speed, and distinction in upcoming runs, including cost comparisons showing savings plus the creative freedom gained. I always add a couple of throwaway jokes in the dossier because numbers are dry—some humor keeps people reading.

Keep an open line with suppliers; when I visited a foil house in Johor they appreciated the AI texture references because it let them calibrate the plate for the chosen grain ahead of time, saving two days of setup. I might have also bribed them with coffee, but that’s standard practice for goodwill.

Keep this dossier near your Custom Logo Things folder because the practice should become part of your packaging vocabulary, not a one-off disruption; think of it as a secret handshake between your creative team and the press operators—it just makes things smoother when both parties know the riff.

What data should I prioritize when learning how to use AI for packaging textures?

Prioritize high-resolution imagery with consistent lighting, tactile annotations describing gloss or grain, and press-ready dielines that help the AI understand scale. Add supplier feedback on previous textures, historical FPPs, and print repeat success metrics. I always tell teams: if you wouldn’t touch it, the AI shouldn’t either. This keeps the dataset honest and trustworthy.

How do I measure ROI after using AI for packaging textures?

Track reductions in physical prototyping, compare total project hours before and after introducing AI, and monitor market-facing KPIs tied to new textures versus legacy processes to quantify the impact. The day I showed procurement a stacked bar chart of saved days is the day I earned the nickname “ROI whisperer.”

Which platforms support texture discovery without extensive coding?

Cloud-based generative design suites tailored to packaging offer drag-and-drop texture libraries, APIs from AI labs can be wrapped in low-code dashboards, and partners like Custom Logo Things can translate tactile briefs into AI-ready prompts. Honestly, I think these low-code wrappers keep teams sane when they just want a texture and not a math degree.

Can small teams implement this process on tight schedules?

Yes—focus on a single SKU, use pre-trained models, keep rapid validation loops with suppliers, and document learnings so your team builds capability without hiring a full-time AI specialist. I’ve seen two-person teams pull this off, armed with sticky notes and a relentless appetite for detail. It proves scale doesn’t need a big headcount.

How should I test production samples after using AI for packaging textures?

Print on the exact substrate and finishing specs, use sensory panels or reps to rate the texture against brand benchmarks, and log deviations so the workflow learns which textures translate cleanly and which need manual tweaks. I keep a “texture diary” for this because nothing beats flipping back through the pages and seeing progress. It’s also a little cathartic to write “texture triumph” after a successful run.

In short, the work blends data, tactile insight, and real-world validation, and folding it into your strategy turns product packaging from a guess into an evidence-based asset; I still get a thrill when a texture that started as digital actually makes it to the shelf, especially after the 12th retail checkpoint signed off with a raised thumb.

Actionable takeaway: document a single texture pilot from start to finish—data, constraints, AI iterations, pilot prints, and sensory feedback—then share that dossier with procurement, suppliers, and creatives so everyone sees exactly how much faster and more consistent texture decisions become.