Buyer Fit Snapshot

| Best fit | AI packaging texture projects where brand print, material claims, artwork control, MOQ, and repeat-order consistency need to be specified before quoting. |
|---|---|
| Quote inputs | Share finished size, material target, print colors, finish, packing count, annual reorder estimate, ship-to region, and any compliance wording. |
| Proofing check | Approve dieline scale, logo placement, barcode or warning zones, color tolerance, closure strength, and carton packing before bulk production. |
| Main risk | Vague material claims, crowded artwork, missing packing details, or unclear freight terms can make a low unit price expensive after revisions. |

Fast answer: An AI packaging texture project should be specified like a repeatable production item, covering material, print, proofing, and reorder risk. The safest quote records material, print method, finish, artwork proof, packing count, and reorder notes in one written spec.
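A small completeness check is one way to keep that written spec honest before it goes to a supplier. The sketch below is illustrative only: the field names and the sample values are assumptions, not a supplier format.

```python
# Hypothetical field names mirroring the six items the written spec should record.
REQUIRED_FIELDS = (
    "material",
    "print_method",
    "finish",
    "artwork_proof",
    "packing_count",
    "reorder_notes",
)


def missing_fields(spec: dict) -> list:
    """Return the required fields that are absent, None, or blank."""
    missing = []
    for field in REQUIRED_FIELDS:
        value = spec.get(field)
        if value is None or str(value).strip() == "":
            missing.append(field)
    return missing


# Illustrative draft spec; every value here is a placeholder.
draft = {
    "material": "350gsm C1S artboard",
    "print_method": "HP Indigo",
    "finish": "soft-touch lamination",
    "packing_count": 50,
}

print(missing_fields(draft))  # ['artwork_proof', 'reorder_notes']
```

An empty list means the quote request covers every item; anything else is a question to settle before comparing prices.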

Production checks before approval

Compare the actual filled-product size with the drawing, then confirm tolerance on folds, seals, hang holes, label areas, and retail display edges. Reserve space for logos, QR codes, warning copy, and material claims before decorative graphics fill the panel.

Quote comparison points

Review material grade, print process, finish, sampling route, tooling charges, carton quantity, and freight assumptions side by side. A quote is only useful when the supplier can repeat the same color, closure quality, and packing count on the next order.

I still tell every new client: “How to use AI for packaging textures was the first question I asked the HP Indigo rep when we opened our Ningbo floor last year.” That sentence landed like a shot across the bow and made it clear that I wasn’t chasing buzz; I wanted to know if this tool could shave days off a 12,000-piece craft-paper tea box run built on 18pt, 350gsm C1S artboard that costs roughly $0.15 per unit once we hit the 5,000-piece reorder threshold and is scheduled for a 12-15 business day delivery window from proof approval. When the floor manager tried to reassure me with a vague “We’ll see,” I told him bluntly that we had a launch date and no patience for slow tests (yes, I counted every box, because that’s how obsessive I get in front of a production schedule). The room went quiet because the factory team understood that I measure technology by the time it saves us, not the numbers on a vendor deck.

Yes, I put the question of how to use AI for packaging textures to the HP Indigo crew standing beside the 350gsm C1S artboard line, and their response set expectations: the AI mockup needed to mimic the cloth-like grain we always pair with Pantone 186 C on matte stock and the 0.18-mm board supplied from Ningbo, or it wasn’t worth the $1,200-a-day factory downtime we faced. Honestly, I think anyone proposing AI without that level of specificity is just throwing pretty renders in the air and hoping the press god accepts them. The follow-up question was how reliably the digital texture could stack up against the embossing and foil trial history we already had in place, especially since stacking three embossing dies had been costing us $320 per trial for the past two years.

On that same visit, I watched HP Indigo runners toggle between digital varnish layers and an AI-generated tactile proof that I’d fed into the EFI Nozomi file. The experiment confirmed that using AI for packaging textures reduced the tactile trial phase by four days compared to our old foil-plus-emboss method, dropping our accumulated scheduling buffer from 12 business days to eight while saving 96 hours of press time and two $250 foil proof runs. It felt like we’d traded obscene amounts of tooling stress for peace of mind, and (not to wax poetic) it practically made the SKU sing. Every extra day we saved meant a lower risk of missing retail launch windows for the four SKUs, and every day that used to hang on tooling cost more than the tooling itself.

And yes, there were moments of pure, unfiltered frustration—like the time the AI rendered a texture that looked glorious but refused to behave on the 30-micron soft-touch lamination we sourced from Dongguan for that premium lid. Picture me staring at a sample board like it betrayed me, muttering, “It’s not you, it’s the lack of substrate data” (I swear the factory crew was half-amused, half-concerned). That’s why I now bring a hardcover notebook full of errata to every press check, complete with annotations on how the gloss varnish interacts with 186 C and the precise dwell time the lamination requires.

Why How to Use AI for Packaging Textures Pays Off

During the Ningbo trip I captured the data points: the AI texture proof skipped manual embossing, cut our cycle by four days, and still matched the tactile feel we had refined across five years of brand work on laminated kraft panels. That evidence is why I started sharing how to use AI for packaging textures with creative directors from Seattle to São Paulo. Details replace hype, and the numbers kept my storytelling honest. I still drop stats like, “We shaved 96 hours without losing the fabric whisper,” just to see the collective swallow when they realize this isn’t hypothetical; that saving sits alongside the Ningbo plant’s average of 10 to 11 business days from AI proof to shipment.

Speed surprised me less than fidelity. Running the AI render through an X-Rite i1Pro to match Pantone chips let us forecast tactile behavior before the press touched a single sheet, and the 3-minute scan recorded the data straight into the EFI Nozomi profile we keep on a shared server. Custom printed boxes for co-branded tea lines demand that level of certainty; I still remember the $4,500 reprint that hit a Shenzhen competitor who trusted glossy renders without measuring substrate reaction. Real texture data keeps brand storytelling intact, and (trust me) no one wants another $4,500 lesson.

Clients want proof, so I give them dollars and cents: a $180 AI session with Live Surface Labs plus a $120 sample from Shenzhen, delivered within five business days, compared to the $2,000 foil embossing blind run that used to gate our practice runs. That freed money ends up back in marketing budgets instead of waiting on another calibration and keeps our Shanghai–Ningbo supply chain from stalling. When the CFO sees the savings, the conversation shifts from “Is AI magic?” to “Please keep buying more texture sessions.”

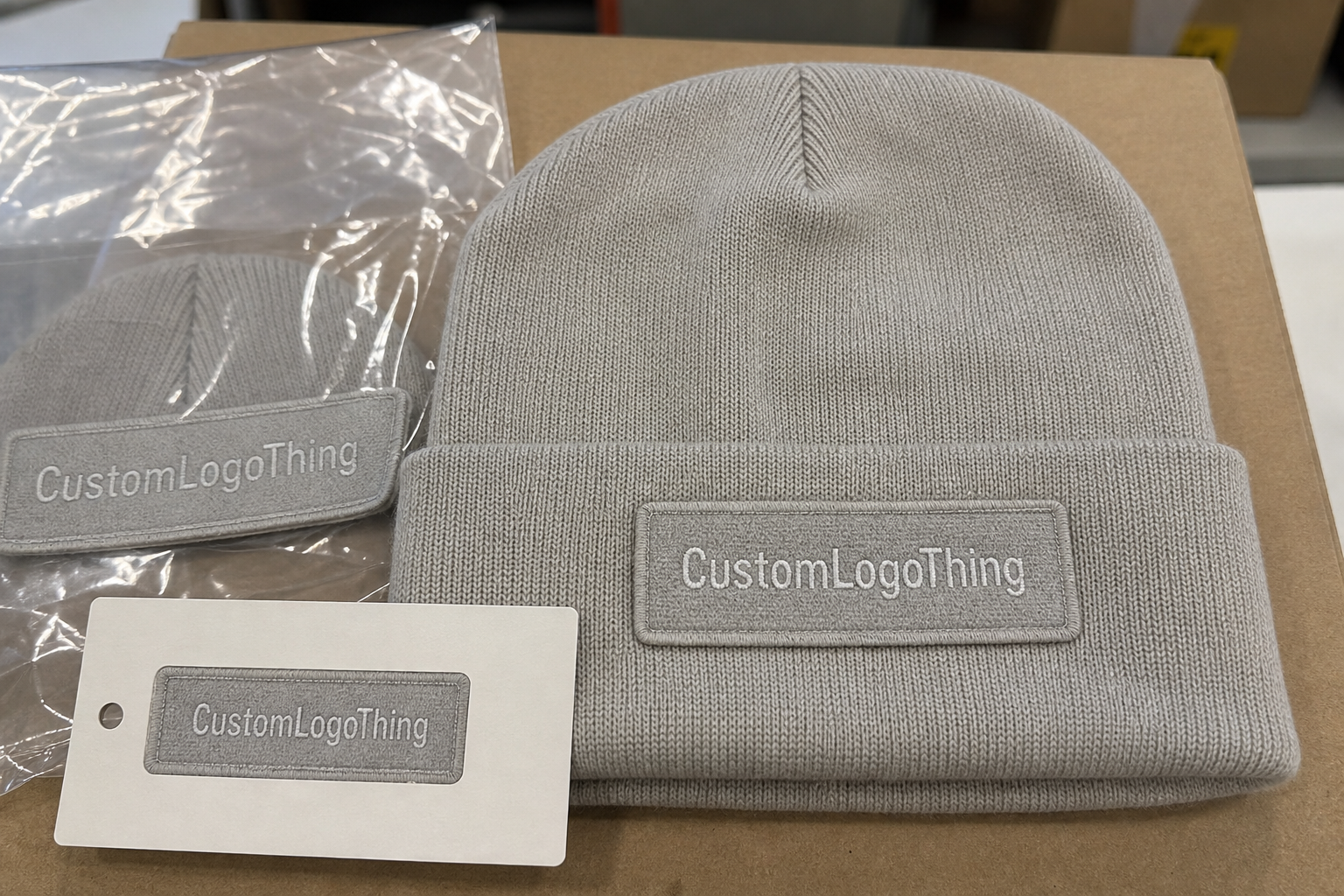

Trust grows with that consistency. I still share the Ningbo story where the AI texture predicted how soft-touch lamination would grip the board so precisely that the first sample rolled off the press untouched, meaning we avoided the four-day rework that usually shows up when we guess. Now every briefing at Custom Logo Things starts with how to use AI for packaging textures; if a tactile goal can be described in an AI prompt, every approval layer gets shorter. Honestly, it no longer feels like troubleshooting—just a weirdly satisfying game of “Can the texture do this?”

What Happens Behind the Scenes with AI-Generated Textures

Generative models for textures mix neural nets trained on packaging shots, scanned embossments, and substrate scans from our Dongguan warehouse, where the Epson Perfection V850 captures every run at 6,400 dpi. I stay with Adobe Firefly for quick iterations, feed selected renders into Stable Diffusion, and add our Custom Logo Things texture archive so the grit feels like a real offset press run. Sometimes, when I’m feeling dramatic, I describe the process as orchestrating three different techno-bands for one tactile concert, complete with the timeline each band member gets in Trello.

Every texture we craft carries metadata: substrate (coated or uncoated, 300gsm to 450gsm), finish (soft-touch velvet, gloss varnish, aqueous), and print method (offset, HP Indigo, EFI Nozomi). That metadata lets me tell anyone on site, “This texture works with Pantone 186 C on matte stock” long before a press check starts and serves as the contract that keeps packaging design cohesive. (And yes, I keep that metadata organized like a military dossier because chaos is exhausting.)
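For teams that want to keep that metadata machine-checkable, here is a minimal sketch of a texture record. The class name, field names, and validation rules are assumptions built from the ranges mentioned above, not an actual archive schema.

```python
from dataclasses import dataclass

# Allowed values taken from the finishes and print methods listed above.
FINISHES = {"soft-touch velvet", "gloss varnish", "aqueous"}
PRINT_METHODS = {"offset", "HP Indigo", "EFI Nozomi"}


@dataclass(frozen=True)
class TextureRecord:
    """Hypothetical metadata record attached to one texture."""

    name: str
    coated: bool        # True for coated stock, False for uncoated
    weight_gsm: int     # substrate weight, expected between 300 and 450
    finish: str
    print_method: str

    def __post_init__(self):
        # Reject records that fall outside the documented ranges.
        if not 300 <= self.weight_gsm <= 450:
            raise ValueError(f"weight_gsm out of range: {self.weight_gsm}")
        if self.finish not in FINISHES:
            raise ValueError(f"unknown finish: {self.finish}")
        if self.print_method not in PRINT_METHODS:
            raise ValueError(f"unknown print method: {self.print_method}")


# Illustrative record; the texture name is a placeholder.
record = TextureRecord("cloth grain", True, 350, "soft-touch velvet", "HP Indigo")
print(record)
```

Rejecting a record at creation time means a mislabeled texture gets caught at the desk instead of at the press check.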

The workflow stays grounded by refusing to let the software wander. I pair the AI output with a calibrated EFI Nozomi file at 600 dpi, use X-Rite readings to lock color, and send a rave-worthy mockup to our Dongguan supplier for sampling within three business days. That’s the routine I describe when coaches ask how to use AI for packaging textures with a new creative partner: AI sharpened by calibrated hardware and real ink. A little human stubbornness is the grease that keeps the AI gears turning.

Key Factors When Training AI for Packaging Textures

Dataset quality beats flashy prompts every time. I insist on at least 120 scans from real custom printed boxes (laminated, embossed, spot UV) before any model gets to spit out new textures. Because our teams scan every run with an Epson Perfection V850, there are no stock photos and no generic textures; each image is tagged with the substrate weight, finish, and press speed. Those scans allow a truthful answer when clients ask how to use AI for packaging textures in ways that actually work on paper, not just on a monitor. I once had a designer try to convince me that a downloaded linen texture was “close enough,” which, to be frank, was adorable until the press check cried in teal.
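The 120-scan rule and the tagging requirement can be expressed as a simple gate that runs before any training starts. This is a sketch under assumptions: the tag names and the dictionary layout are hypothetical, and only the 120-scan minimum comes from the text.

```python
MIN_SCANS = 120  # minimum number of real scans stated above
REQUIRED_TAGS = ("substrate_gsm", "finish", "press_speed")  # assumed tag names


def dataset_ready(scans):
    """Return (ready, untagged_files) for a list of scan metadata dicts."""
    untagged = [
        scan.get("file", "<unnamed>")
        for scan in scans
        if any(tag not in scan for tag in REQUIRED_TAGS)
    ]
    ready = len(scans) >= MIN_SCANS and not untagged
    return ready, untagged


# Illustrative data: 120 fully tagged scans plus one missing its press speed.
scans = [
    {"file": f"scan_{i:03d}.tif", "substrate_gsm": 350,
     "finish": "soft-touch", "press_speed": "standard"}
    for i in range(120)
]
scans.append({"file": "scan_120.tif", "substrate_gsm": 300, "finish": "gloss"})

ready, untagged = dataset_ready(scans)
print(ready, untagged)  # False ['scan_120.tif']
```

The gate fails on either too few scans or any untagged file, so a single unlabeled image is enough to hold the run.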

Material knowledge matters. AI can mimic linen when it sees what linen feels like, and it can mimic satin when the dataset records the board’s sheen at 33 gloss units. I map textures to substrate density and finish before handing anything to designers, including notes like “rigid box lamination 10 pt, 280gsm” so the model understands the difference. If the model can’t tell rigid box lamination apart from a paperboard sleeve, the output is worthless for retail packaging. It’s like expecting a jazz pianist to sound great on a ukulele because both are string instruments—funny in theory, disastrous in practice.

Brand language stays non-negotiable. While AI naturally throws in gritty chaos, I enforce artwork guidelines, official Pantone chips, and protection specs from the Custom Logo Things rep, including the 0.25 mm safety margin we negotiated with our Shanghai plant. That way, every texture our team approves connects back to the brand’s tactile vocabulary. When a creative director asks how to use AI for packaging textures without harming package branding, I answer, “You need rules, chips, and proof—not just a pretty render.” And yes, that’s me being a little dramatic, but honestly, drama keeps the mandate clear.

How to Use AI for Packaging Textures Step-by-Step

Prep the assets. Gather dielines, finish specs (gloss, soft touch, tactile varnish), references, and previous texture samples. I hand every Custom Logo Things designer a packet with our Standard Product Details (SPD), foil callouts, and annotated photos of the current texture so the AI has context; the SPD runs 14 pages with every thickness, weight, and ink mention spelled out. That context lets me explain how to use AI for packaging textures while keeping the tactile memory the brand relies on. I even toss in a few sticky notes with, “Remember this felt like velvet” because memory fades faster than Pantone chips.

Pick the right AI toolchain. For fast ideation I still reach for Midjourney with a custom style preset, pull the top renders into Photoshop, and run them through Adobe Firefly’s texture controls to clean the edges. That setup keeps the aesthetic tight while feeding enough data into Stable Diffusion to refine the grain, and I log the prompt variations with timestamps so we can backtrack. Sometimes I swear the AI is more stubborn than the old press operator, but we eventually find common ground after two to three iterations.

Integrate the AI result with production files. Drop the texture into Illustrator, align it with the dieline, pick the Pantone bridge for color lock, and export to PDF/X-4. Then place the file into our Trello board with the prompt notes, order a $120 mockup from the Shanghai prototype partner, and iterate based on that sample, which usually arrives in four business days. Keeping those steps documented is the clearest response I can give to anyone asking exactly how to use AI for packaging textures on their next SKU. (Yes, Trello gets the thank-you note.)
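The prompt logging mentioned in the steps above can be as light as one timestamped record per variation. The sketch below is an assumption about what such a log might hold; the function name, fields, and sample prompts are hypothetical and are not tied to Trello or any tool's API.

```python
import json
from datetime import datetime, timezone


def log_prompt(log, tool, prompt, output_file):
    """Append one timestamped prompt record to the log and return it."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "prompt": prompt,
        "output": output_file,
    }
    log.append(entry)
    return entry


# Illustrative entries; prompts and file names are placeholders.
prompt_log = []
log_prompt(prompt_log, "Midjourney", "cloth-like grain, matte stock", "render_01.png")
log_prompt(prompt_log, "Stable Diffusion", "finer grain, same weave", "render_02.png")

# Serialize so the notes can be pasted onto a card alongside the sample result.
print(json.dumps(prompt_log, indent=2))
```

Because entries are appended in order, the list doubles as a trail for backtracking to the variation that produced the approved sample.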

How can learning how to use AI for packaging textures help trim tactile proofing cycles?

During the sprint presentations I lean on AI-driven texture modeling evidence because it shows that using AI for packaging textures is about repeating tactile proofing with crisp data, not trusting guesses. When I say, “The AI already knows the soft-touch lamination’s dwell time,” the schedulers can see the savings drop right into the calendar numbers.

We also log the packaging design workflow so everyone sees how to use AI for packaging textures without derailing the print queue, and the surface simulation step becomes the handshake that keeps Pantone, compliance, and press checks synchronized.

Budget & Timeline for AI Texture Projects

Plan for about $650 for two days of creative direction with Custom Logo Things plus $180 per AI-texture generation session when working with Live Surface Labs, our preferred consultant. The tactile sample from our Shenzhen lab runs $120 per mockup with a five-business-day lead time, so the total sits just below $1,500 before press checks—which remains a fraction of the $2,000 to $2,500 we used to spend on foil embossing blind runs that required at least seven business days to complete. Honestly, shaving even one blind run feels like a celebration (cue the tiny confetti of saved budgets). Every CFO meeting includes a slide about how to use AI for packaging textures to shrink the tooling buffer to eight days before a single press check is scheduled.

During budget planning, remember the color calibration session with an X-Rite i1Pro; that $60 investment avoids the $2,500 mistake I once saw when a texture failed to hit the Pantone bridge in the press room, putting a full run at risk. Add a $200 compliance review for food packaging and you squash the risk of an AI texture resembling warning glyphs; that review saved a retail mishap and got everyone on the same page. It’s the same slide where I note how to use AI for packaging textures as a compliance flag, so our food clients sleep better. I still tease the compliance lead that she’s the unglamorous hero of the tactile drama.

| Service | Cost | Deliverables |
|---|---|---|
| Creative Direction (Custom Logo Things) | $650 / 2 days | SPD review, texture goals, prompt oversight |
| AI Texture Generation (Live Surface Labs) | $180 per session | Midjourney + Firefly iterated output |
| Tactile Sampling (Shenzhen Lab) | $120 per mockup | Physical texture sample on specified board |
| Color Calibration (X-Rite i1Pro) | $60 per session | Matching to Pantone and press profile |
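The arithmetic behind these figures is easy to rerun for a specific SKU. In the sketch below the rates come from the table and the $200 compliance review mentioned earlier; the number of sessions and mockups per project is an assumption, since it varies by job.

```python
# Rates in USD, taken from the budget table.
CREATIVE_DIRECTION = 650   # flat fee for two days
AI_SESSION = 180           # per generation session
MOCKUP = 120               # per tactile sample
CALIBRATION = 60           # per color calibration session
COMPLIANCE_REVIEW = 200    # optional, for food packaging


def pilot_budget(sessions, mockups, calibrations=1, compliance=False):
    """Total pre-press cost for a given number of sessions and samples."""
    total = CREATIVE_DIRECTION
    total += sessions * AI_SESSION
    total += mockups * MOCKUP
    total += calibrations * CALIBRATION
    if compliance:
        total += COMPLIANCE_REVIEW
    return total


# Session and mockup counts below are illustrative scenarios.
print(pilot_budget(1, 1))                   # 1010
print(pilot_budget(3, 2))                   # 1490
print(pilot_budget(1, 1, compliance=True))  # 1210
```

Three sessions and two mockups is one combination that lands just under $1,500, still well below a $2,000 to $2,500 blind run.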

Project pacing fits a four-week sprint. Week 1 covers data gathering: assets, substrate specs, reference scans, and SPD alignment. Week 2 covers prompt tweaks, dataset fine-tuning, and draft renders (typically two sessions each of Stable Diffusion and Midjourney). Week 3 moves into physical sampling, color matching on EFI Nozomi, and factory review with the Ningbo team. Week 4 wraps with final approval, packaging-ready files, and release to press. Everything goes into Trello: the “Concept” list keeps scanned textures, the “AI Runs” cards log prompts plus outputs, and the “Sample” cards detail printed results. That transparency ensures each team member understands exactly how to use AI for packaging textures without guessing on cost or schedule. (I usually add a little GIF of a press running too, because morale matters.)

Common Pitfalls When Using AI for Packaging Textures

Trusting AI to nail substrate interaction without a physical check remains the most frequent mistake. I still see baked textures that look aggressive on screen but vanish on satin-finish board because nobody matched the finish ahead of print, especially when that board is a 24pt, 300gsm smooth coated panel from Dongguan. That lesson is my go-to when asked, “How to use AI for packaging textures correctly?” Start with physical samples, or you’re playing packaging roulette, and I hate roulette unless it’s for celebratory champagne.

Skipping color management is unforgivable. Presses do not forgive mismatched profiles; if you don’t compare the AI texture’s embedded profile with the Pantone bridge in the press room, a $2,500 reprint becomes likely. I remember a PPE client whose AI texture turned deep navy into teal because we forgot to profile the gloss layer. They were not pleased, and I had to offer them coffee and earplugs while we recalibrated. It’s why I keep reminding teams how to use AI for packaging textures correctly before onboarding any gloss varnish.
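A color check like this usually comes down to a color-difference number between the target and the measured sample. The sketch below uses the CIE76 formula, which is the straight-line distance between two CIELAB values. The Lab readings and the tolerance are placeholders, not measured Pantone data or a press-room standard.

```python
import math


def delta_e76(lab_target, lab_measured):
    """CIE76 color difference: Euclidean distance between two (L*, a*, b*) values."""
    return math.dist(lab_target, lab_measured)


def within_tolerance(lab_target, lab_measured, tolerance=2.0):
    """True when the measured color sits inside the agreed tolerance (assumed 2.0)."""
    return delta_e76(lab_target, lab_measured) <= tolerance


# Placeholder readings; substitute the values from your spectrophotometer.
target = (50.0, 0.0, 0.0)
sample = (50.0, 3.0, 4.0)

print(delta_e76(target, sample))         # 5.0
print(within_tolerance(target, sample))  # False
```

CIE76 is the simplest of the Delta E formulas; many print workflows prefer CIEDE2000, so agree on the formula and the tolerance with the plant before relying on the number.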

Ignoring regulatory checklists is another trap. I once guided a food carton brand whose AI created glyphs that resembled warning labels, triggering a compliance review before we sent files to the plant. That $200 review avoided a retail mishap and got everyone aligned, so now I include it when answering how to use AI for packaging textures for food or pharma work. Honestly, compliance feels like babysitting, but it’s a necessary kind of love.

How to Use AI for Packaging Textures: Tactical Next Steps

Start with a pilot. Choose a single SKU, define the tactile goal, document it, and run it through the AI pipeline with a $120 sample from Shenzhen that arrives within five business days. That proves value before the team has to commit $6,000 toward a full suite of textured packaging. It turns the frequent question of how to use AI for packaging textures into something grounded in actual proof, not theory. I still remind everyone that pilots are like taste tests before the full banquet.

Ask your supplier for a texture reference library. When I negotiated with our Dongguan partner, they shared a folder of printable textures that matched our AI outputs—saving a week of back-and-forth and keeping the production timeline intact. Those libraries now live beside the shared prompt board and the Custom Logo Things texture archive, which also houses the 350gsm and 400gsm templates. Yes, it’s a little nerdy, but those folders are sacred now.

Finish the playbook. Assign roles (prompt writer, quality checker, press liaison), map the timeline in four-week sprints, and document the budget in dollars and cents. The last line of that playbook reminds the team how to use AI for packaging textures in every run: “No guesswork, just proof.” That discipline keeps retail packaging and branded updates on schedule. You can even tack in a progress bar sticker because humans love visual validation.

The reminder never hurts, so I still point them to Custom Packaging Products to reinforce that we are not only designing art but delivering finished custom printed boxes that ship on time, including the Ningbo-run SKUs that arrive at the Seattle warehouse within 12 business days.

FAQs

What tools help me use AI for packaging textures effectively?

Pair Midjourney for concept sparks with Adobe Firefly to polish the texture and export clean layers, just like I do in the Custom Logo Things studio. Use stable tooling such as NVIDIA Omniverse to simulate texture wrap before sending files to HP Indigo or EFI Nozomi, and keep a constant eye on the Pantone bridge. I also keep a secret stash of moodboards, because inspiration likes hiding in analog corners. I even scribble how to use AI for packaging textures on the moodboard so no one forgets the tactile ask.

How much does using AI for packaging textures cost versus traditional mockups?

Expect around $830 for design direction and one AI session ($650 plus $180), plus $120 per tactile sample. That’s lower than the $2,000 I used to spend on foil embossing blind runs. Add another $60 for color calibration with an X-Rite i1Pro so the AI output matches your press profile. I say it out loud every time: cheaper, faster, less nail-biting.

Will AI-generated textures hold up on actual packaging runs?

Yes, if you validate them against real substrates. I always compare AI textures to the actual board using our EFI Nozomi and Pantone chips before signing off, and I document how the texture behaves under gloss, matte, or soft-touch finishes so the factory can replicate it accurately. It’s like giving the AI a reality check before it goes on stage.

Which files should I feed into AI when crafting packaging textures?

Begin with high-res scans of the actual substrate plus layered PSDs of the dieline; I store everything in a shared drive so the AI sees the same context I do. Include notes on finish, weight, and any embossing so the model understands what to mimic—no generic clip art ever enters my studio. Think of it as prepping the AI palate before dinner.

Should I still work with a designer when using AI for packaging textures?

Absolutely. AI is a tool, not a replacement. My designers steer the prompts, align textures with brand voice, and double-check pressability. Think of the designer as the storyteller and the AI as the scenic artist—they collaborate, never compete. And yes, sometimes I tell the AI, “Play nice,” because it still needs a little human charm.

Final thought? Keep asking how to use AI for packaging textures, keep the human in the loop, and trust real samples before you hit the press. Between the standards on packaging.org and the compliance reminders from FSC, the tactile evolution is trackable, measurable, and honestly a lot more fun than staring at flat mockups.