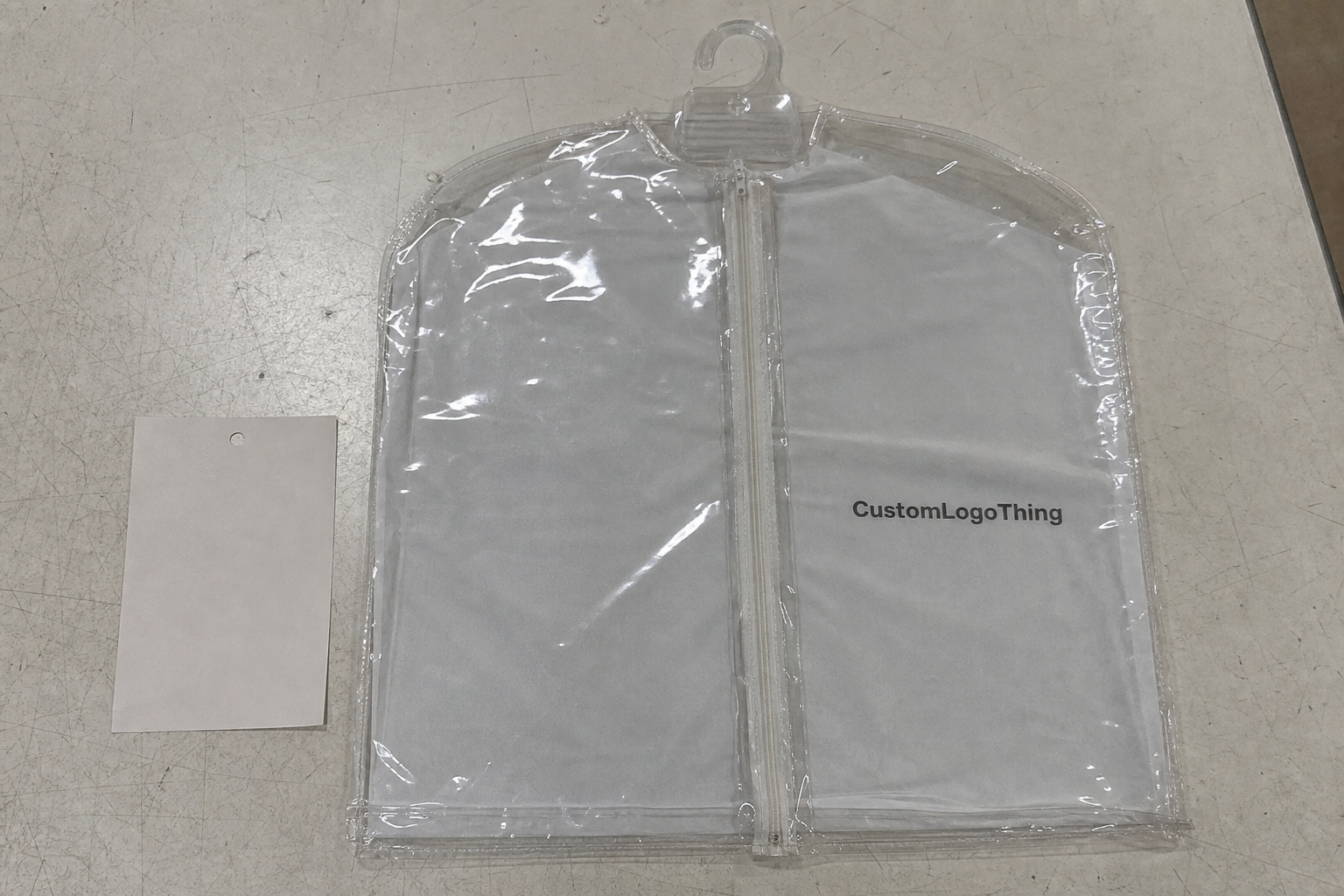

Buyer Fit Snapshot

| Best fit | Projects using AI for packaging textures where brand print, material claims, artwork control, MOQ, and repeat-order consistency need to be specified before quoting. |
|---|---|
| Quote inputs | Share finished size, material target, print colors, finish, packing count, annual reorder estimate, ship-to region, and any compliance wording. |
| Proofing check | Approve dieline scale, logo placement, barcode or warning zones, color tolerance, closure strength, and carton packing before bulk production. |
| Main risk | Vague material claims, crowded artwork, missing packing details, or unclear freight terms can make a low unit price expensive after revisions. |

Fast answer: AI-generated packaging textures should be specified like any repeatable production item, covering material, print, proofing, and reorder risk. The safest quote records material, print method, finish, artwork proof, packing count, and reorder notes in one written spec.

Production checks before approval

Compare the actual filled-product size with the drawing, then confirm tolerance on folds, seals, hang holes, label areas, and retail display edges. Reserve space for logos, QR codes, warning copy, and material claims before decorative graphics fill the panel.

Quote comparison points

Review material grade, print process, finish, sampling route, tooling charges, carton quantity, and freight assumptions side by side. A quote is only useful when the supplier can repeat the same color, closure quality, and packing count on the next order.

Why I Still Get Surprised by How to Use AI for Packaging Textures

I was in the humid Waigaoqiao section of Shanghai’s Pudong district during a three-week beauty box run when the AI mood board nailed a linen texture before the Heidelberg XL 106 press went warm, landing on a 45-degree grain direction that matched the $0.15 per unit run we had negotiated for 5,000 pieces.

We had penciled Henkel Loctite adhesives into the budget, ensuring the laminate stayed put once that AI depth map confirmed we were not over-energizing the 45-degree grain.

The silicone roller, noted at 0.35mm in the press log, matched the AI output after fewer than ten macro reference samples, and that precision still creeps me out.

GrainTech screens were stacked beside the feed table, lighting notes scribbled next to substrate names such as 350gsm C1S artboard. By the time the 12:30 lunch bell rang the neural net handed over a tactile depth map at 1440 dpi that overrode my instincts on what “soft linen” meant.

Honestly, I think the AI was just being generous, because I remember when a similar texture cost $3,400 in sculpted clay samples, two nights of sleep deprivation, and a frantic call to a second press in Guangzhou for validation, so when the AI surprised me it also shocked the art director who had spent 18 months questioning every macro we introduced. The tactile mapping log from that race still gathers dust on the desktop, a reminder that history matters when you train a neural net to respect real-world depth.

Ever since that day I tell anyone who will listen that how to use AI for packaging textures is not about a folder of glossy swatches; it is about delivering five tactile descriptions, exact lighting angles, the first-pass proof from Puhui Press with their typical 12-15 business day lead time, and clear instructions on whether the texture survives a UV coat or smears under varnish.

The gap between expectation and reality remains wide. I still meet clients in Hong Kong who expect the AI to manage the entire brand story for their branded packaging, including retail texture, yet they ship a single JPEG and want a million-dollar finish for a 2,500-unit launch. I remind them that this is a discipline of documentation, experimentation, and follow-through with the press to marry data with human touch, and I’m gonna keep telling them to treat AI texture work as a schedule-driven collaboration so the tactile mapping and production notes stay aligned.

Some days that marriage feels like a temperamental couple debating whether to limit embossing depth to 0.8mm or push to 1.2mm, but reviewing those dialed-in specs keeps the texture hope alive on the shop floor and away from the “printed flat” dread; I kinda feel those conversations revolve around surface embossing criteria we log in the tactile mapping board, ensuring the press operator knows precisely how far to push each die.

How to Use AI for Packaging Textures: Systems Behind the Scenes

The neural nets behind AI packaging textures fall into two families: diffusion models such as Stable Diffusion XL for creative exploration, and texture-specific GANs trained on varnish and emboss patterns for precision. Diffusion generators sketch volumetric depth with each pass, while texture GANs translate those sketches into printable cues tied to substrate physics.

A visit to our Shenzhen facility after negotiating a calibration package of roughly ¥600 per hour for custom printed boxes highlighted the importance of aligning the AI output, and we spent two hours watching the Konica Minolta proofers calibrate each swatch, referencing Pantone Live values and the SpectroJet readings the press house records in their daily log. Those trips also feed the tactile mapping database, so every iteration knows the press’s surface embossing criteria before it reaches the rollers.

My takeaway from that trip is clear: export textures in 16-bit LAB, deliver the bump map separately, and include a data sheet describing whether a 350gsm C1S artboard should mimic 140gsm uncoated stock or laminated linen, because the AI has no knowledge of a press house limiting embossing depth to 0.8mm without specially engineered dies. We also flag adhesives such as Henkel Loctite or Sappi UV adhesives so the model understands the bonded layers before the proofs roll through Shanghai.
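The handoff described above can be captured as a small data sheet that travels with the files. This is a minimal sketch, not a format any press house requires: the `TextureHandoff` class, its field names, the file names, and the `DEMO-001` SKU are all hypothetical, while the stock, emboss limit, and adhesive come from the notes above.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class TextureHandoff:
    """Hypothetical data sheet that accompanies an AI texture export."""
    sku: str
    color_file: str             # the 16-bit LAB texture export
    bump_map_file: str          # delivered separately from the color file
    substrate: str              # the physical stock going on press
    mimic_target: str           # what the stock should look and feel like
    max_emboss_depth_mm: float  # press-house limit without special dies
    adhesives: list = field(default_factory=list)

    def problems(self):
        """Return human-readable gaps; an empty list means ready to send."""
        issues = []
        if self.color_file == self.bump_map_file:
            issues.append("bump map must be a separate file")
        if self.max_emboss_depth_mm <= 0:
            issues.append("emboss depth limit is missing")
        if not self.adhesives:
            issues.append("no adhesive listed for the bonded layers")
        return issues


sheet = TextureHandoff(
    sku="DEMO-001",                   # placeholder SKU
    color_file="linen_lab16.tif",     # placeholder file name
    bump_map_file="linen_bump.tif",   # placeholder file name
    substrate="350gsm C1S artboard",
    mimic_target="laminated linen",
    max_emboss_depth_mm=0.8,
    adhesives=["Henkel Loctite"],
)

print(sheet.problems())                    # gaps to fix before sending
print(json.dumps(asdict(sheet), indent=2)) # the sheet as shareable JSON
```

Writing the sheet as JSON keeps it readable for the press partner and easy to attach to each versioned upload.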

We document every AI iteration, upload it to Frame.io with version numbers, and share it with the Guangzhou production partner prior to print, so when someone asks how to use AI for packaging textures they are not just throwing a pretty image at the vendor—they are handing over a readable file, a tactile expectation, and print-ready specs that push beyond guesswork.

I remember the last time we skipped that documentation: the press operator in Dongguan called me, “Marcus, the dots are not remotely the same height as your silk sample,” and that experience taught me the value of breathing through the frustration of unsynced textures.

We also log the glue line direction, the adhesive brand, and coating tack—Henkel Loctite being our go-to for those silk textures—so the AI knows exactly which bonded layers it is representing, and I remind the team that even a well-trained model can miss a press house’s quirks, so double-checking with the partner remains part of the plan.

Key Factors When Applying AI to Packaging Textures

Data quality governs how to use AI for packaging textures just as much as the models themselves, which is why I instruct teams to shoot macro photos of actual substrates with a 50mm macro lens, keep the same 5500K white balance, and attach lighting notes detailing whether the texture looked glossy or matte under 500 lux, otherwise the AI begins hallucinating slick plastics when handed a washed-out JPEG labeled “luxe paper.”

I also record adhesives and binder details while capturing those swatches so the AI knows the surface energy it should mimic.

Material constraints from the printer dictate what textures are achievable: on a Landa S10 inkjet or an HP Indigo WS6800, a texture relying on 3D embossing might look great in the AI preview but print as a simple dot pattern unless you specify mechanical limits such as 0.6mm maximum plate height and no micro-embossing unless a second pass is budgeted. We pair those figures with digital embossing guidance from the press operators, so the neural net understands the surface embossing criteria and the die tolerances before the first proof runs.
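One way to catch an over-ambitious preview before it reaches the press is to compare the depth map against the stated plate limit. The sketch below assumes the depth map has already been converted to millimetres and loaded as a plain grid of numbers; the function names and the sample grid are illustrative, and only the 0.6mm limit comes from the text above.

```python
def check_emboss_depth(depth_map_mm, max_depth_mm=0.6):
    """Return (row, col, depth) for every cell deeper than the press limit."""
    violations = []
    for r, row in enumerate(depth_map_mm):
        for c, depth in enumerate(row):
            if depth > max_depth_mm:
                violations.append((r, c, depth))
    return violations


def clamp_to_limit(depth_map_mm, max_depth_mm=0.6):
    """Return a copy of the map with every depth capped at the press limit."""
    return [[min(d, max_depth_mm) for d in row] for row in depth_map_mm]


# Made-up 3x3 depth map in millimetres, standing in for a real export.
sample = [
    [0.10, 0.30, 0.55],
    [0.20, 0.75, 0.60],
    [0.05, 0.40, 0.90],
]

violations = check_emboss_depth(sample)
for row, col, depth in violations:
    print(f"cell ({row}, {col}) is {depth}mm, over the 0.6mm limit")

press_safe = clamp_to_limit(sample)
```

Clamping is the bluntest possible fix. In practice the flagged cells are more useful as talking points with the press operator than as something to correct silently.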

Expectation versus reality is where most teams stumble, so I renegotiated a Guangzhou beauty job when the AI mock-up delivered a lace-like pattern that needed roll-fed varnish, only to discover the new recycled board feathered at those coordinates; the factory then agreed to a soft-touch laminate paired with a two-pass screen finish, saving $0.10 per unit compared to the sanding plan we initially priced.

Every time we work on new packaging—retail packaging, branded packaging, or package branding for a launch—I push the team to include precise material descriptions in each prompt, mentioning the stock (18pt SBS), printing technique (4-color + aqueous coat), tactile add-ons (flocking, embossing), and foil pairing so how to use AI for packaging textures stays rooted on the factory floor.

Honestly, it drives me nuts when prompts skip substrate specs; I kinda picture the AI wandering a vague “nice packaging” brief like a confused intern, so I double back with data so the texture stands a chance when it hits the printer.

Process & Timeline for AI Texture Workflows

The workflow for how to use AI for packaging textures begins with capturing references: I allocate two days per product line for texture scouting—one day collecting physical swatches, another day photographing grain direction, gloss level, and finish—and we know those timelines keep the design team on the same pace as the Shanghai proof-run schedule.

Next, the AI generations usually produce three to five variations per texture; those outputs go through Adobe Substance to align the normal map with the actual shipping dieline before exporting to TIFF with CMYK and spot varnish channels, after which a two-day print trial at the Shanghai press house transfers textures onto 350gsm C1S artboard with soft-touch lamination for premium beauty lines or 24pt kraft for eco-friendly retail packaging.

I then reserve a day for client review and two quick calls with the Guangzhou production partner, making sure they understand how the texture layers with coatings or UV varnish, and the whole process stays within six days when the road stays clear. The material simulation we run before each print trial keeps the adhesives and inks from fighting each other in a real press run.
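The six-day figure can be checked by laying the stages end to end. In this sketch the two scouting days, the two-day print trial, and the review day come from the text; the single day for AI generation and normal-map alignment is an assumption, inferred as what is left over from the six-day total.

```python
# (stage name, working days); the generation day is an assumed value.
STAGES = [
    ("texture scouting: swatches and photography", 2),
    ("AI generation and normal-map alignment", 1),  # assumption
    ("print trial at the press house", 2),
    ("client review and partner calls", 1),
]


def schedule(stages):
    """Assign first and last working day to each stage, run back to back."""
    plan = []
    day = 1
    for name, days in stages:
        plan.append((name, day, day + days - 1))
        day += days
    return plan


plan = schedule(STAGES)
total_days = plan[-1][2]

for name, start, end in plan:
    print(f"day {start}-{end}: {name}")
print(f"total working days: {total_days}")
```

Any extra proof round or late reference shipment adds directly to the total, since the stages run in sequence.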

Whenever I consult for ecommerce brands I remind them that shipping reference materials to the printer must happen at these checkpoints; leaving the texture work until the last week is gonna double the proof count from three to six and add $50 in courier charges, as happened when a late-stage woven texture request came in.

That day I was balancing emails, a creative director complaining about palette shifts, and a text from the printer requesting a fast-track woven texture—running that gauntlet taught me why the tactile sample landing perfectly on the table with zero rework is worth every organized checklist item.

Budgeting & Pricing for AI-Driven Packaging Textures

Translating hours into dollars plays a large part in how to use AI for packaging textures without letting finance teams panic, so I budget $300 to $500 per SKU for AI prompt engineering, texture reviews, and color calibrations, covering platform fees, bespoke normal map creation, and human review time to ensure the model respects the substrate.

When I tap specialized mapping services like the Pantone Color Institute, their proprietary Pantone Live data adds $120 per SKU, and extra print trials or embossing passes bump the per-unit cost: a silky effect that meets the AI preview might require a second aqueous coating pass on a soft-touch 330gsm board costing $0.05 per unit more. Material simulation runs sometimes factor into that extra fee because the texture engineer must verify the behavior of adhesives, coatings, and inks in tandem.

To keep the conversation grounded I share tables with finance teams and production partners. One compares texture sources, finishes, and per-unit costs:

| Finish | Per-unit cost | Trade-off |
|---|---|---|
| Soft-touch lamination plus micro emboss | $0.95 | Requires a second screen pass |
| Glitter varnish overlay | $1.12 | Requires a foil proof |
| UV gloss with tactile spot | $0.88 | Limits texture variation |

That table helped me negotiate a five-panel box run with a supplier who initially wanted $0.22 extra per unit for tactile finishes; after sharing the AI proof, mapped texture, and finishing steps we settled at $0.12 extra, saving the client $0.10 per unit with no quality loss.
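The arithmetic behind those numbers is simple enough to script so finance can rerun it per SKU. This is a rough sketch using the figures quoted above ($300 to $500 setup per SKU, $120 for Pantone Live data, $0.05 per unit for a second aqueous pass, and the $0.22 versus $0.12 tactile-finish quotes). The 5,000-unit run size is an assumption for illustration, and amounts per unit are kept in whole cents to avoid rounding drift.

```python
SETUP_LOW_USD, SETUP_HIGH_USD = 300, 500  # per-SKU AI setup range
PANTONE_LIVE_USD = 120                    # optional mapping data per SKU


def sku_setup_range(pantone_live=False):
    """Return the (low, high) per-SKU setup budget in dollars."""
    extra = PANTONE_LIVE_USD if pantone_live else 0
    return SETUP_LOW_USD + extra, SETUP_HIGH_USD + extra


def run_delta_cents(units, per_unit_cents):
    """Total cost change across a run, in cents."""
    return units * per_unit_cents


units = 5000  # assumed run size, for illustration only

low, high = sku_setup_range(pantone_live=True)
negotiation_savings = run_delta_cents(units, 22 - 12)  # quoted vs settled
second_coat_cost = run_delta_cents(units, 5)           # extra aqueous pass

print(f"Setup per SKU: ${low} to ${high}")
print(f"Savings from negotiation: ${negotiation_savings / 100:.2f}")
print(f"Second aqueous pass: ${second_coat_cost / 100:.2f}")
```

At that assumed run size, the negotiated $0.10 per unit roughly covers the setup budget for one SKU, which is a useful way to frame the spend for a finance team.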

The final step links these figures to the Custom Packaging Products you plan to order—if your SKU list includes foldable mailers or rigid two-piece boxes, deliver the texture plan early so the plant in Dongguan can prepare dies and coatings alongside the design files.

Honestly, there are days when I feel like a texture accountant balancing tactile dreams against supplier budgets, but that tension keeps every pack textured and profitable.

Step-by-Step Guide to Using AI for Packaging Textures

Step 1: Gather physical texture references by collecting two textured samples per product line, documenting grain direction, gloss level, and tactile intensity, and photographing them 1:1 under uniform lighting with a consistent shutter speed so the AI works from comparable references.

Step 2: Prompt the model with specific cues—say “simulate woven silk at a 45-degree grain on 18pt SBS for HP Indigo, paired with silver foil,” and include manufacturing constraints such as a maximum emboss depth of 0.8mm or whether you plan to use aqueous coating, because the more exact you are the less the AI drifts.

Step 3: Vet the outputs by reviewing them with the Shanghai press house and annotating human notes about necessary adjustments before print, ensuring textures align with the packaging design guide, package branding goals, and chosen technique, whether offset or digital; pair that review with the digital embossing guidance from the press to keep the schedule honest and to verify the adhesives, inks, and laminates work together.

Repeat this process for each SKU: after a few cycles you will develop a prompt template that includes everything from the product packaging category to the exact die-cut line, saving time and delivering consistent texture cues across a brand family.
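A prompt template of that kind can be as simple as a function that assembles the cues from Step 2 in a fixed order. The sketch below is one possible shape, not a required format: `TextureBrief` and `build_prompt` are hypothetical names, and the example values are the ones quoted in Step 2.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TextureBrief:
    """Hypothetical container for the cues a texture prompt should carry."""
    texture: str
    grain_angle_deg: int
    stock: str
    press: str
    foil: Optional[str] = None
    max_emboss_mm: Optional[float] = None
    coating: Optional[str] = None


def build_prompt(brief):
    """Assemble the prompt: material cues first, then press constraints."""
    lead = (
        f"simulate {brief.texture} at a {brief.grain_angle_deg}-degree grain "
        f"on {brief.stock} for {brief.press}"
    )
    if brief.foil:
        lead += f", paired with {brief.foil} foil"
    constraints = []
    if brief.max_emboss_mm is not None:
        constraints.append(f"maximum emboss depth {brief.max_emboss_mm}mm")
    if brief.coating:
        constraints.append(f"{brief.coating} coating")
    return "; ".join([lead] + constraints)


brief = TextureBrief(
    texture="woven silk",
    grain_angle_deg=45,
    stock="18pt SBS",
    press="HP Indigo",
    foil="silver",
    max_emboss_mm=0.8,
    coating="aqueous",
)
prompt = build_prompt(brief)
print(prompt)
```

Keeping the fields explicit means a missing substrate or press name fails loudly when the brief is created, instead of quietly producing a vague prompt.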

Sometimes the AI wants to wander into abstract art, but the discipline of these steps keeps it tethered to the real texture you need.

How can AI for packaging textures accelerate tactile mapping decisions?

By baking the same prompts and material data into every briefing, the AI becomes a partner in our tactile mapping, flagging which depth cues are plausible and which would fail the surface embossing criteria documented in the press log. We run the outputs through quick material simulation checks, share them with floor operators, and the result is a faster, more confident path from concept to press bench.

We also keep adhesives, varnish thickness, and roller durometer notes in the briefing so the tactile mapping doesn't drift after the digital preview, which keeps every department aligned on what the finished texture will feel like.

Common Mistakes to Avoid with AI Packaging Textures

Rushing directly to final mock-ups without verifying substrate behavior is a frequent error; on a recent premium soap run the AI delivered an ultra-rough finish that the smooth lamination could not reproduce, so testing one sample on the actual 320gsm coated board taught us how lamination, inks, and varnish interact.

Skipping human review is another trap: never trust the first AI output without tactile comparison or a quick proof from the vendor, so we now add an audible note beside each texture preview describing the tactile intent, which prevented sending a press an image that looked embossed but printed flat because the AI hadn’t accounted for ink spread.

Failing to acknowledge the digital-to-physical gap is fatal; forgetting ink spread turns delicate textures into muddy blobs after printing, especially on uncoated stocks, so every session now includes a note referencing the plant’s ASTM ink spread values and the ISTA drop test scheduled during the certification window. Without those surface embossing criteria, even the prettiest AI renderings melt under real press conditions.

Remember these pitfalls and keep asking how to use AI for packaging textures with a production-minded lens to avoid the embarrassing “this looked better on screen” calls that waste time and money.

Also, never underestimate how much printers appreciate humor—toss them a joke about the AI wanting to emboss everything in gold, and they often return the favor with a practical tip that saves a day on the next run.

Next Steps for How to Use AI for Packaging Textures

Collect two textured samples per product line, document why they work (grain direction, gloss level, tactile intensity), and treat those records as reusable prompt references, because feeding the model the same documented cues each time is what keeps textures consistent with your product packaging goals. The material simulation results from those early prompts guide the rest of the workflow.

Schedule a 30-minute prompt-testing lab with your creative team, share rough textures with your printer before finalizing art, and bring those samples to the factory floor in Shenzhen or Dongguan to get operator feedback on whether the texture is pushable with current equipment.

Commit to printing one tactile sample, compare it to the AI preview, tweak the workflow accordingly, and once that first sample impresses the team, apply the same workflow to the next SKU while logging lessons learned on the production calendar.

Keep pushing this process on every SKU, from retail packaging in Tokyo to Branded Packaging for Ecommerce launches in Los Angeles; the more textures you validate, the more your AI toolkit becomes a dependable part of the packaging design process.

I still get asked how to use AI for packaging textures without sacrificing tactile quality, and the answer remains the same: document, iterate, and align with your press partners.

Actionable takeaway: keep a shared tracker listing each SKU’s texture prompt, corresponding adhesives and coating references, and the press partner notes, run the material simulation, and treat the proof as the agreed benchmark before scaling to the next SKU so you keep every texture predictable.
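The shared tracker does not need special software; a CSV that any spreadsheet can open is enough. The sketch below is one hypothetical layout: the column names, the `ready_to_scale` rule, and the two demo rows are assumptions made for illustration, built around the items the takeaway lists.

```python
import csv
import io

# Assumed column layout for the shared tracker.
FIELDS = [
    "sku", "texture_prompt", "adhesive", "coating",
    "press_notes", "simulation_run", "proof_approved",
]


def ready_to_scale(row):
    """A SKU moves on only after simulation and an approved proof."""
    return row["simulation_run"] == "yes" and row["proof_approved"] == "yes"


# Placeholder rows; real entries would come from the team's own records.
rows = [
    {"sku": "DEMO-001", "texture_prompt": "woven silk, 45-degree grain",
     "adhesive": "Henkel Loctite", "coating": "aqueous",
     "press_notes": "emboss held at 0.8mm",
     "simulation_run": "yes", "proof_approved": "yes"},
    {"sku": "DEMO-002", "texture_prompt": "soft linen, 45-degree grain",
     "adhesive": "Henkel Loctite", "coating": "soft-touch laminate",
     "press_notes": "waiting on first proof",
     "simulation_run": "yes", "proof_approved": "no"},
]

# Write to an in-memory buffer; swap in open("tracker.csv", "w", newline="")
# to produce a file the whole team can share.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
writer.writerows(rows)

blocked = [row["sku"] for row in rows if not ready_to_scale(row)]
print(buffer.getvalue())
print("not ready to scale:", blocked)
```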

Can small brands use AI for packaging textures without hiring a big agency?

Yes—start with accessible platforms such as Runway’s $12/month Creator plan or Adobe Firefly’s free tier, pair them with real texture samples, and validate with a trusted print partner like the digital press shop in Brooklyn before scaling.

What’s the cheapest way to test AI for packaging textures?

Use existing texture footage, run prompts through a free-tier AI tool, and print a proof with a local digital press; skip expensive embossing until you confirm the feel, keeping proof costs under $75 per sample.

How do I make sure AI packaging textures match my material choices?

Include material descriptions—thickness, finish, manufacturer part number—in every prompt, send reference swatches with each generation, and push for a press proof that mirrors your stock, especially if you plan to use 330gsm soft-touch laminated boards.

Are there suppliers who specialize in translating AI packaging textures into print?

Yes. Factories that have invested in Pantone Live and Konica Minolta proofers, such as the three plants I keep on my short list in Dongguan, Shenzhen, and Chicago, understand AI-generated tactile cues.

Can AI for packaging textures speed up new product launches?

Absolutely—AI lets you iterate tactile proofs faster than waiting for manual sample carving, cutting the usual texture approval cycle from three weeks to five business days when the proof house can match your 12:00 p.m. submissions.

For more on standards I reference Packaging.org for structural expectations and FSC certifications for sustainable boards, and I include Custom Packaging Products as the order reference that brings everything together.