Guide to KPI Tracking Packaging Returns for Shipping and Reverse Logistics

I still remember a dock in Columbus, Ohio, where one mislabeled return pallet sat against a wall for 11 days while three departments argued over who owned the count. By the time anyone reconciled the freight, overtime, and shrink, the costs had split into three different ledgers, and the story no longer matched the floor. I was standing there thinking, with a kind of exhausted disbelief, that the pallet was less of a problem than the reporting. That was the moment the first rule of KPI tracking for packaging returns became obvious to me: start where the box hits the floor, not where the spreadsheet ends.

Wet receiving lanes, handwritten exception notes, and totes counted as 24 units on Monday and 21 on Wednesday are the usual suspects. Those small fractures are where the numbers begin to drift, and they drift fast. A guide to KPI tracking packaging returns has to read like an operating manual because reverse logistics lives in mud, tape, Zebra scanners, and missed handoffs long before it lives in a dashboard. I have seen a clipboard become the unofficial system of record in a 180,000-square-foot facility, which is both funny and deeply annoying. Also, kinda expensive.

Shipping, logistics, finance, and sustainability teams all need the same version of events. A guide to KPI tracking packaging returns should help them stop arguing about whether the problem sits in freight, packaging design, or receiving discipline, and start working from the same counts, the same timestamps, and the same cost logic. In one three-site network in the Midwest, that alignment saved 8 to 12 hours a week of reconciliation work, mostly by cutting duplicate counting and late manual corrections after 4 p.m. That is not magic. It is just fewer broken handoffs.

Packaging returns are simple to define once the jargon is stripped away: reusable or disposable packaging moving back through the supply chain, from customer sites, plants, or distribution centers to inspection, reuse, repair, recycling, or disposal. In a corrugated plant near Grand Rapids, that might be a flattened gaylord built from 275# test board. In a consumer goods network around Monterrey, it might be a 48 x 40 plastic pallet, a molded pulp insert, or a branded tray made from 350gsm C1S artboard that needs cleaning before it can re-enter circulation. The label changes. The physics do not.

Outbound metrics rarely expose the real issue on their own. On-time shipping, cube utilization, and damage-at-delivery still matter, but they do not show the hidden cost sitting inside reverse logistics: extra dock minutes, slow cycle times, broken return rules, and packaging that looks good on paper but fails in actual handling. Finance feels the cost first. Operations owns the mess. Customers notice when credits arrive 5 days late, and they notice loudly, especially when a $48 credit takes three weekly follow-ups to release. A guide to KPI tracking packaging returns keeps those costs from hiding inside "miscellaneous" lines and polite explanations.

Shared metrics change the conversation. I have sat in meetings where operations blamed the carrier, finance blamed the plant, and sustainability blamed material choice. Once the same return lane, the same SKU, and the same disposition code were on the screen, the discussion shifted from opinion to action. Honestly, that is the practical value of a guide to KPI tracking packaging returns: one count stream, one root-cause path, fewer surprises, fewer late-night email chains that make everyone grumpy, and fewer arguments about whose version of the return loop is closest to reality.

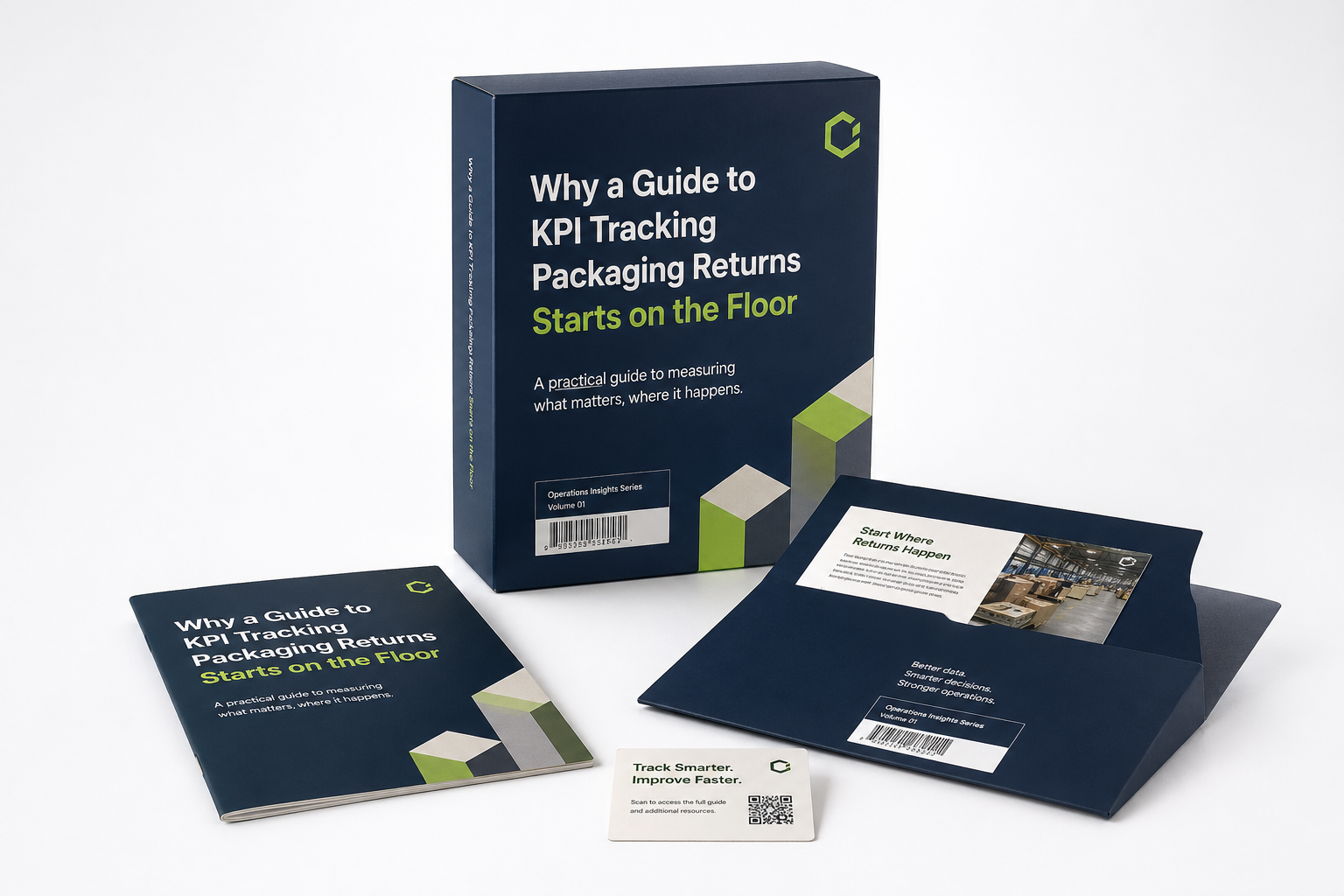

Why a Guide to KPI Tracking Packaging Returns Starts on the Floor

The floor is where the story begins. Any guide to KPI tracking packaging returns that ignores that reality will produce tidy reports and bad decisions. I watched a warehouse supervisor in a Midwest distribution center mark 14 pallets as reusable because they looked clean from 6 feet away. Nine of them had split stringers and missing corner blocks by the time the repair team pulled them back to the yard. The spreadsheet said one thing. The forklift operator and repair bench said something far more useful.

That gap matters because return KPIs are not abstract accounting figures. They measure how packaging behaves after first use, how people handle it during reverse logistics, and whether the packaging design actually supports reuse. A guide to KPI tracking packaging returns has to begin with receiving, count verification, and condition grading, since those steps decide whether the data can survive scrutiny on a Tuesday morning audit or a quarter-end finance review. If the counts are wrong at the dock, every later report becomes a polished version of a mistake.

A clean definition works best on audits: packaging returns are reusable or disposable shipping materials moving backward through the chain for inspection, reuse, repair, recycling, or disposal. The category can include corrugated outer cartons, pallets, slip sheets, dunnage, stretch wrap, tote bins, or Custom Printed Boxes that need to be recovered, verified, and either reused or scrapped. The mechanics differ. The KPI logic does not, whether the return starts in Atlanta, Dallas, or Shenzhen. A guide to KPI tracking packaging returns should keep that definition close, because the numbers drift quickly when teams use different labels for the same object.

"We thought we had a freight problem until the dock counts showed our return pallets were being mixed with outbound empties," a plant manager told me during a supplier review. "Once we separated the lanes, the numbers stopped lying."

That lesson repeats across industries. A guide to KPI tracking packaging returns is not about polishing the dashboard; it is about making the return process visible enough that freight, labor, shrink, and service issues show up before they become month-end noise. The strongest operations I have seen run a 15-minute floor review each morning, compare the prior day’s receipts with physical counts, and settle discrepancies before lunch. It sounds almost too basic to matter. It matters a lot, especially when 220 pallets hit the dock before 9:00 a.m. and the returnable packaging loop is already under pressure.

- Floor-first metrics: count accuracy, condition grade, and time to receipt.

- Return-flow metrics: cycle time, reuse rate, and loss rate.

- Business metrics: cost per return, chargeback exposure, and recovery value.

That structure keeps the guide to KPI tracking packaging returns practical. Operations sees dock activity, finance sees the cost stack, and sustainability sees the recycled or reused material rate. If all three groups are looking at the same pallet or tote ID, they can make the same decision instead of debating whose report is newest. I have seen that single shift save more time than three software demos and a dozen "alignment" meetings, which is a polite term for everyone arguing in slightly nicer language over a $0.62 handling variance.

How KPI Tracking Works in Daily Operations

A guide to KPI tracking packaging returns works best when the reverse flow is mapped step by step and every step has a timestamp. In the plants I have supported, the sequence usually runs like this: return authorization, pickup or inbound receipt, count verification, condition grading, sorting, disposition, cleaning or repair, and final financial closeout. Skip one of those steps and the process may still move, but the numbers go soft, like a pallet wrap job done in a 22-degree freezer bay with a dull cutter. The return loop keeps moving, but the signal gets noisy.
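One way to keep that sequence honest is to check each return record for stages that were never stamped or were stamped out of order. The sketch below is a minimal illustration, not a prescribed schema: the stage identifiers are my own shorthand for the steps listed above, and the sample timestamps are invented.

```python
from datetime import datetime

# Stage names mirror the sequence in the text; the identifiers are illustrative.
STAGES = [
    "return_authorization",
    "inbound_receipt",
    "count_verification",
    "condition_grading",
    "sorting",
    "disposition",
    "cleaning_or_repair",
    "financial_closeout",
]


def missing_stages(stamps: dict[str, datetime]) -> list[str]:
    """List stages with no timestamp, in process order."""
    return [stage for stage in STAGES if stage not in stamps]


def out_of_order(stamps: dict[str, datetime]) -> list[str]:
    """List stages stamped earlier than the latest in-order stamp before them."""
    flagged = []
    latest = None
    for stage in STAGES:
        stamp = stamps.get(stage)
        if stamp is None:
            continue
        if latest is not None and stamp < latest:
            flagged.append(stage)
        else:
            latest = stamp
    return flagged


# Invented example: grading was stamped before the receipt was entered.
example = {
    "return_authorization": datetime(2024, 3, 4, 8, 0),
    "inbound_receipt": datetime(2024, 3, 5, 9, 30),
    "condition_grading": datetime(2024, 3, 5, 9, 0),
}
gaps = missing_stages(example)
misordered = out_of_order(example)
```

A record with gaps can still close financially, which is exactly why the check is worth running before the numbers reach a report.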

The data sources are usually already there, just scattered. WMS receipts show what arrived. TMS history shows what moved. ERP cost data shows what it cost. RMA forms show why it came back. Dock notes show what the system missed. A guide to KPI tracking packaging returns should pull those feeds into one ID structure so a tote, pallet, or carton can be traced from pickup all the way to repair, recycle, or disposal. That traceability is the difference between guessing and knowing, and it is worth more than one extra report tab. It also makes reusable packaging easier to manage because every trip has a record.
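As a rough sketch of that single ID structure, the snippet below folds records from several feeds into one dictionary per asset. Everything here is assumed for illustration: the `asset_id` key, the feed names, and the sample rows do not come from any particular WMS, TMS, or ERP export.

```python
from collections import defaultdict


def build_trace(feeds: dict[str, list[dict]]) -> dict[str, dict]:
    """Merge records from several feeds into one dict per asset ID."""
    trace = defaultdict(dict)
    for source, records in feeds.items():
        for record in records:
            asset_id = record["asset_id"]
            for key, value in record.items():
                if key != "asset_id":
                    # Prefix each field with its source so feeds never collide.
                    trace[asset_id][f"{source}.{key}"] = value
    return dict(trace)


def missing_source(trace: dict[str, dict], source: str) -> list[str]:
    """List asset IDs that have no field from the given feed."""
    prefix = f"{source}."
    return sorted(
        asset_id for asset_id, fields in trace.items()
        if not any(name.startswith(prefix) for name in fields)
    )


# Hypothetical sample rows.
feeds = {
    "wms": [{"asset_id": "TOTE-0001", "received_qty": 24}],
    "tms": [{"asset_id": "TOTE-0001", "carrier": "CARRIER-A"}],
    "erp": [{"asset_id": "TOTE-0001", "cost_usd": 0.72}],
    "rma": [{"asset_id": "TOTE-0002", "reason": "damaged"}],
}
trace = build_trace(feeds)
no_receipt = missing_source(trace, "wms")
```

The second function is the useful one on a Monday morning: an asset with an RMA but no WMS receipt is a return somebody authorized and nobody scanned.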

Leading and lagging indicators solve different problems, so both belong in the same view. Leading indicators like exception count, missed pickup rate, or delayed receipt entry warn you before losses stack up. Lagging indicators like reuse rate, damage rate, and cost per return tell you what actually happened. A guide to KPI tracking packaging returns that ignores either side will only explain the damage after the check has cleared, which is a bad moment to discover the math.

At a corrugated converter in Grand Rapids, I watched a team discover that 18% of returned palletized packaging was being classified under the wrong customer code. One lane looked healthy. Another looked awful. Once they split the lane by facility and carrier, the cycle time issue jumped out: one site was holding returns for 4.8 days before scan-in, while another was closing them in 19 hours. That is the kind of detail a guide to KPI tracking packaging returns should surface, because averages are incredibly persuasive and often completely misleading.

For teams that want a simple structure, I usually start with six numbers: total volume returned, reusable percentage, average cycle time, cost per return, damage rate, and recovery rate by material type or packaging family. If you are handling branded packaging or retail packaging with delicate finishes, add a seventh metric for cosmetic rejection. A scuffed print panel can be just as expensive as a cracked tote when presentation matters, especially on a carton printed on 350gsm C1S artboard with matte varnish and foil accents. I have had buyers roll their eyes at that point, and then quietly admit the customer was right. A guide to KPI tracking packaging returns gets stronger when it makes aesthetics measurable instead of arguing about them after the fact.
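Those six numbers can be computed from a flat list of return records. This is a minimal sketch under a few assumptions of mine: the field names are hypothetical, the sample rows are invented, and "recovery rate" is treated as the share of units graded reusable within each packaging family, which your own definition may not match.

```python
from collections import defaultdict


def core_kpis(returns: list[dict]) -> dict:
    """Compute the six starting KPIs from one row per returned unit."""
    total = len(returns)
    if total == 0:
        return {"total_volume": 0}
    by_family = defaultdict(lambda: [0, 0])  # family -> [reusable, total]
    for row in returns:
        by_family[row["family"]][1] += 1
        if row["reusable"]:
            by_family[row["family"]][0] += 1
    return {
        "total_volume": total,
        "reusable_pct": 100 * sum(r["reusable"] for r in returns) / total,
        "avg_cycle_days": sum(r["cycle_days"] for r in returns) / total,
        "cost_per_return": sum(r["cost_usd"] for r in returns) / total,
        "damage_rate_pct": 100 * sum(r["damaged"] for r in returns) / total,
        "recovery_pct_by_family": {
            family: 100 * ok / count for family, (ok, count) in by_family.items()
        },
    }


# Invented sample rows.
returns = [
    {"family": "pallet", "reusable": True, "damaged": False, "cycle_days": 2.0, "cost_usd": 1.00},
    {"family": "pallet", "reusable": False, "damaged": True, "cycle_days": 4.0, "cost_usd": 2.00},
    {"family": "tote", "reusable": True, "damaged": False, "cycle_days": 1.0, "cost_usd": 0.50},
    {"family": "tote", "reusable": True, "damaged": False, "cycle_days": 1.0, "cost_usd": 0.50},
]
kpis = core_kpis(returns)
```

A cosmetic rejection flag would slot in the same way as `damaged` if presentation matters for the packaging family.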

When I validate handling assumptions, I still point people to the test libraries at ISTA. Not every return stream needs a lab, but every serious guide to KPI tracking packaging returns needs a standard language for drop risk, vibration, stacking, and distribution stress. That language connects the dock with the design table, which is where a lot of "we thought it would hold up" arguments begin, usually after somebody orders 5,000 units at $0.15 per unit and then discovers the corner crush in week two.

Guide to KPI Tracking Packaging Returns: Cost and Pricing Signals

Cost is where a guide to KPI tracking packaging returns gets real for finance, because every returned pallet or carton carries a full stack of expenses. Freight is only the first line. Add dock labor, inspection time, cleaning, repair, repackaging, storage, disposal, and any customer credit or chargeback tied to the return, and the total often lands at 2 to 4 times the freight bill alone. That gap is why a clean-looking return can still be a financial nuisance. It also explains why reverse logistics is often where margins quietly disappear.

My cost-per-return method is blunt on purpose: take the variable costs that change with each unit, then add a fair share of fixed costs like software, yard space, or dedicated return labor. If a return tote costs $0.18 to move and inspect on a 5,000-piece run, but $0.44 when the volume falls to 1,200, the average hides the pressure point. A guide to KPI tracking packaging returns should never flatten that difference away. Average cost can be a comforting liar.
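The arithmetic behind that method is short enough to sketch. The inputs below are hypothetical: I picked a variable cost of $0.098 per unit and a fixed pool of $410 only because they roughly reproduce the $0.18 and $0.44 figures above, not because they describe any real operation.

```python
def cost_per_return(variable_cost: float, fixed_cost: float, volume: int) -> float:
    """Per-unit variable cost plus an even share of the fixed cost pool."""
    if volume <= 0:
        raise ValueError("volume must be positive")
    return variable_cost + fixed_cost / volume


# Hypothetical inputs chosen to approximate the figures in the text.
VARIABLE_COST = 0.098  # dollars per unit that scale with each return
FIXED_COST = 410.0     # dollars of software, yard space, dedicated labor

high_volume = cost_per_return(VARIABLE_COST, FIXED_COST, 5000)
low_volume = cost_per_return(VARIABLE_COST, FIXED_COST, 1200)
```

Under those assumptions the same tote rounds to $0.18 at 5,000 units and $0.44 at 1,200, and the whole gap comes from spreading the fixed pool over fewer returns.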

Packaging design changes the economics faster than most buyers expect. A sturdier 32 ECT corrugated shipper may cost more than a lighter board, but if it reduces tearing by 7% and cuts repack labor by 14 minutes per case group, the return loop can improve quickly. The same logic applies to molded pulp, thermoformed trays, and reusable plastic dunnage. The cheapest line item is often the one that fails on the second trip, and then the "cheap" choice gets expensive in a hurry. A guide to KPI tracking packaging returns should show that trade-off in dollars, not in slogans.

I learned that lesson during a supplier negotiation in Shenzhen, where a vendor quoted $0.41 per insert for a returnable tray being compared against a $0.26 disposable option. On paper, the disposable unit looked better. On the floor, the reusable tray cut damage claims enough to recover its premium in 9 months, and the buyer’s service team stopped fighting the same three complaints every quarter. I remember thinking how often purchasing teams get punished for the wrong comparison. That is the kind of decision a guide to KPI tracking packaging returns should support, because the return loop changes the math.

Cost visibility matters even more when deposit systems, vendor-managed return loops, or customer-owned packaging are involved. If the return rules are vague, a profitable loop can quietly turn into a cost sink because no one owns the loss rate, the storage aging, or the cleaning expense. I have seen a lane that looked efficient at $0.72 per return jump to $1.94 once the team counted the idle inventory sitting in a side yard for 16 days. That kind of delay hides like a raccoon in a warehouse corner: oddly persistent and impossible to ignore once you spot it.

For teams thinking about redesign, I often point them to Custom Packaging Products when the issue is structural rather than procedural. If the current return stream keeps failing because the carton crushes, the tray slips, or the graphics are unreadable after one cycle, a packaging change may beat another round of training. That is where the guide to KPI tracking packaging returns intersects with packaging design and package branding, especially when the spec sheet calls for 14 pt SBS, 350gsm C1S artboard, or a folded mailer that needs to survive three handoffs and a 600-mile truck route.

| Tracking Method | Typical Monthly Cost | Setup Time | Best Fit | Main Weak Spot |
|---|---|---|---|---|
| Spreadsheet with manual counts | $0 to $200 | 1 to 3 days | One lane, one site, fewer than 300 returns a month | Human error and stale entries |
| WMS or ERP report pack | $500 to $3,000 | 1 to 4 weeks | Multi-site teams with consistent scan discipline | Hard to capture condition grades and exception notes |
| Dedicated returns dashboard | $2,500 to $10,000 | 3 to 8 weeks | Networks with reusable assets, chargebacks, or repair loops | Needs clean master data and regular ownership review |

The table is not there to sell software. It shows that a guide to KPI tracking packaging returns should match the tool to the operational complexity. A small distribution center with 2 return lanes does not need the same system as a national network handling pallets, totes, and Custom Printed Boxes across 14 customer sites. I would honestly worry more about teams that try to buy a big platform before they can count cleanly, because the software will not fix a missing scan at 3:15 p.m.

If your sustainability team is part of the discussion, the EPA’s recycling resources are a useful reference for contamination, sorting, and disposal logic. That matters because KPI tracking for packaging returns is not only about cost recovery; it is also about choosing the correct end-of-life path for each material stream. A pallet that should be repaired but gets scrapped is more than waste; it is a missed economic decision and, in one Illinois network I saw, a $1.12 loss per unit.

One more thing most teams miss: segment the cost by lane, customer, and packaging family. A 90-mile regional return may be profitable at $0.63 per unit, while a cross-border move can climb to $2.80 once customs handling, waiting time, and repack inspection are included. Without segmentation, the tracking collapses into a blended average that helps nobody. I have yet to meet a plant manager who gets excited about a number that only exists because all the bad routes were blended with the good ones.
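To see how a blended number hides the bad lane, here is a small sketch that computes cost per unit both ways. The per-unit costs match the two figures above; the volumes (900 and 100 units) and the field names are hypothetical.

```python
from collections import defaultdict


def cost_per_unit_by(records: list[dict], key: str) -> dict[str, float]:
    """Total cost divided by total units for each value of the chosen key."""
    totals = defaultdict(lambda: [0.0, 0])  # segment -> [cost, units]
    for row in records:
        bucket = totals[row[key]]
        bucket[0] += row["cost_usd"]
        bucket[1] += row["units"]
    return {seg: cost / units for seg, (cost, units) in totals.items() if units}


# Hypothetical volumes; per-unit costs follow the text.
records = [
    {"lane": "regional", "units": 900, "cost_usd": 567.0},      # 0.63 per unit
    {"lane": "cross_border", "units": 100, "cost_usd": 280.0},  # 2.80 per unit
]
blended = sum(r["cost_usd"] for r in records) / sum(r["units"] for r in records)
by_lane = cost_per_unit_by(records, "lane")
```

With these invented volumes the blended figure comes out near $0.85, which describes neither lane. Passing `"customer"` or `"family"` as the key gives the other two cuts, provided those fields exist on the rows.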

Process and Timeline in Packaging Returns KPI Tracking

Timeline is the quiet killer in reverse logistics, and a guide to KPI tracking packaging returns needs to measure it as carefully as cost. A return can be cheap and still be a problem if it sits for 6 days before inspection, because the delay eats reuse opportunities, occupies rack space, and postpones credits or replacement shipments. I have seen a 48 x 40 tote loop lose half its economic value simply because the inbound appointment window kept slipping by one shift. Time does not care that everyone was busy.

The easiest way to map the timeline is to define target time windows for each stage. For example: receipt entry within 30 minutes of unloading, count verification within 4 hours, condition grading within 1 business day, disposition within 2 business days, and financial closeout within 5 business days. Those numbers are not universal, but a guide to KPI tracking packaging returns should force the team to choose targets instead of pretending every cycle is identical. Vague timing goals are just wishful thinking wearing a badge.
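Those example windows can be turned into a simple lateness check. Two simplifications are mine and should be stated plainly: every window is measured from the unloading time, and a business day is approximated as 24 elapsed hours, which ignores weekends and shift calendars. Stage names and sample timestamps are illustrative.

```python
from datetime import datetime, timedelta

# Example targets from the text, all measured from unloading (an assumption).
TARGETS = {
    "receipt_entry": timedelta(minutes=30),
    "count_verification": timedelta(hours=4),
    "condition_grading": timedelta(days=1),
    "disposition": timedelta(days=2),
    "financial_closeout": timedelta(days=5),
}


def late_stages(unloaded_at: datetime, stamps: dict[str, datetime]) -> dict[str, timedelta]:
    """Map each completed stage that missed its window to the overrun."""
    late = {}
    for stage, target in TARGETS.items():
        done = stamps.get(stage)
        if done is None:
            continue  # not finished yet; handle open stages separately
        elapsed = done - unloaded_at
        if elapsed > target:
            late[stage] = elapsed - target
    return late


# Invented example: count verification took six hours instead of four.
unloaded = datetime(2024, 3, 4, 8, 0)
stamps = {
    "receipt_entry": datetime(2024, 3, 4, 8, 20),
    "count_verification": datetime(2024, 3, 4, 14, 0),
    "condition_grading": datetime(2024, 3, 5, 7, 0),
}
late = late_stages(unloaded, stamps)
```

A real version would need a business-day calendar for the longer windows; the point of the sketch is only that each stage gets its own target instead of one blanket deadline.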

Delays usually cluster in the same three places: receiving, inspection, and disposition approval. Receiving delays come from missing paperwork or mixed pallets. Inspection delays come from not enough labor or not enough space. Disposition delays come from unclear ownership, especially when finance, procurement, and operations all think the other group should sign off first. That is why a guide to KPI tracking packaging returns should include a bottleneck view, not just a date stamp. If the timeline does not reveal the choke point, the process is still hiding something.

I like tracking median time and 90th percentile time together because averages can lie. If the median cycle time is 1.8 days but the 90th percentile is 7.6 days, a small set of trouble returns is probably trapping space or labor. In one Indiana DC, that pattern uncovered a weekly backlog of 41 mixed totes that had been waiting for the same supervisor sign-off for nearly 2 weeks. Nobody was eager to own that little disaster, which usually means the metric is telling the truth.
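Here is a minimal sketch of that pairing using the standard library's `statistics.median` and a nearest-rank percentile helper of my own. The ten cycle times are invented and chosen so the median and 90th percentile match the 1.8-day and 7.6-day figures above.

```python
import math
import statistics


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value covering pct percent of the data."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Invented cycle times in days; two slow returns sit in the tail.
cycle_days = [1.2, 1.5, 1.6, 1.8, 1.8, 1.8, 2.0, 2.1, 7.6, 9.0]

median_days = statistics.median(cycle_days)
p90_days = percentile(cycle_days, 90)
mean_days = statistics.mean(cycle_days)
```

On this sample the mean lands near 3 days, a figure that matches neither the typical return nor the stuck ones, which is the argument for reporting the median and the tail side by side.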

Different packaging types deserve different timing rules. Pallets may move through a yard in 24 to 72 hours. Totes with barcode labels might clear in a day. Specialty containers, insulated shippers, and high-visibility retail packaging may require inspection and cosmetic approval, which can stretch the process to 4 or 5 days. A guide to KPI tracking packaging returns should not force one time standard across all of them, because the physical handling is not the same and pretending otherwise only creates arguments.

When the process is stable, the dashboard should answer one question every morning: where did the return spend the most time yesterday? If the answer is receiving, you need labor. If it is inspection, you need queue management. If it is disposition, you need a decision rule. That is how a guide to KPI tracking packaging returns turns timing data into operational action, instead of another pretty chart that gets ignored after the meeting ends, usually after a 28-minute discussion about colors nobody can remember.
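The morning question reduces to summing yesterday's hours by stage and picking the largest. The sketch below assumes the hours per stage are already available as simple pairs; the stage labels and sample hours are hypothetical, and the action mapping just restates the three rules above.

```python
from collections import defaultdict

# Restates the rules in the text: receiving, inspection, disposition.
ACTIONS = {
    "receiving": "add labor",
    "inspection": "manage the queue",
    "disposition": "set a decision rule",
}


def slowest_stage(stage_hours: list[tuple[str, float]]) -> tuple[str, float]:
    """Return the stage with the most total hours, and that total."""
    if not stage_hours:
        raise ValueError("no stage data for the period")
    totals = defaultdict(float)
    for stage, hours in stage_hours:
        totals[stage] += hours
    stage = max(totals, key=totals.get)
    return stage, totals[stage]


# Invented hours logged yesterday across several returns.
yesterday = [
    ("receiving", 3.0),
    ("inspection", 5.5),
    ("disposition", 2.0),
    ("inspection", 4.0),
    ("receiving", 1.5),
]
stage, hours = slowest_stage(yesterday)
next_step = ACTIONS.get(stage, "review with the stage owner")
```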

How Do You Build a KPI Tracking Dashboard for Packaging Returns?

The best dashboard starts with one business question, not 14 charts. A returns KPI dashboard should begin with something specific, like "Which packaging family is costing us the most per return?" or "Which lane has the slowest turnaround time?" Once the question is clear, the metric set becomes much easier to limit and defend, especially when the CFO wants one number and the warehouse wants six.

My default pilot set has six core numbers: return volume, reuse rate, damage rate, cost per return, average cycle time, and exception count. If the network uses reusable assets, I add recovery rate and loss rate. If the network sells retail packaging or branded packaging with a premium finish, I also add cosmetic reject rate, because a scuffed print panel can create customer dissatisfaction even when the structure is technically intact. Packaging has a funny way of being judged on both survival and appearance, and a guide to KPI tracking packaging returns has to reflect both.

Ownership is the part most companies underbuild. Every KPI needs a named owner for data accuracy, review cadence, and follow-up action. Without that, the guide to KPI tracking packaging returns becomes a report with no consequence. The receiving supervisor may own count accuracy, the transportation lead may own pickup timing, and the finance analyst may own cost normalization, but each metric still needs one person who closes the loop. Otherwise, the dashboard becomes a very expensive wall decoration.

I prefer a dashboard in layers. The first layer is raw data capture: scan, count, date, condition, lane, and disposition. The second layer is a cleaned operational view that removes duplicates and flags missing fields. The third layer is a trend view with weekly and monthly movement. The fourth layer is exception alerts for spikes, misses, and outlier lanes. That layered structure makes the guide to KPI tracking packaging returns usable by both a dock lead and a regional manager, which is the whole point, whether the network has 1 site or 12.
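The second layer, the cleaned operational view, is the easiest one to sketch. The field list follows the first-layer capture described above, with a `scan_id` key that I am assuming exists to identify duplicates; the sample rows are invented.

```python
REQUIRED_FIELDS = ("scan_id", "count", "date", "condition", "lane", "disposition")


def clean_records(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Drop repeated scan IDs, then split rows into complete and flagged."""
    seen = set()
    complete, flagged = [], []
    for rec in records:
        scan_id = rec.get("scan_id")
        if scan_id is not None:
            if scan_id in seen:
                continue  # keep only the first row for each scan ID
            seen.add(scan_id)
        missing = [f for f in REQUIRED_FIELDS if rec.get(f) in (None, "")]
        if missing:
            flagged.append({**rec, "missing": missing})
        else:
            complete.append(rec)
    return complete, flagged


# Invented rows: one duplicate scan and one row with no disposition.
raw = [
    {"scan_id": "S1", "count": 24, "date": "2024-03-04", "condition": "A", "lane": "L1", "disposition": "reuse"},
    {"scan_id": "S1", "count": 24, "date": "2024-03-04", "condition": "A", "lane": "L1", "disposition": "reuse"},
    {"scan_id": "S2", "count": 21, "date": "2024-03-06", "condition": "B", "lane": "L1", "disposition": ""},
]
complete, flagged = clean_records(raw)
```

Flagged rows are kept rather than deleted so the dock lead can see which fields are going missing and on which lane.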

If you want a fast pilot, start with one return lane and one facility. Verify 100% of the data against physical counts for 2 weeks, then compare the system record to the actual yard or rack position. Only after those numbers align should you expand. I have seen teams rush into a 12-site dashboard and spend the next month cleaning master data instead of improving return performance. It is a classic case of solving the report before solving the operation, and it usually ends with a lot of coffee and a lot of rework.

A practical note: build one chart that shows cycle time by stage, one chart that shows cost per return by lane, and one alert panel for exception counts. That is enough to reveal most of the pain in a small network. Once the team trusts the numbers, you can expand into more detailed views for customer, packaging SKU, and carrier performance. Trust comes first. Fancy comes later, ideally after the first 30 days show the returns are dropping from 1,400 to 1,100 units.

- Start small: 1 facility, 1 return lane, 5 to 7 KPIs.

- Validate physically: match counts against dock receipts for 2 weeks.

- Separate views: operations, finance, and leadership need different levels of detail.

- Automate alerts: cycle time spikes, reuse drops, and damage jumps should trigger action.
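For the physical validation step, a count-accuracy check can be as small as the sketch below. The lot IDs and quantities are hypothetical, and accuracy is defined here as the share of lots where the system count equals the hand count, which is one reasonable definition among several.

```python
def count_accuracy(system: dict[str, int], physical: dict[str, int]) -> tuple[float, dict]:
    """Return percent of lots that match, plus (system, physical) for mismatches."""
    lot_ids = sorted(set(system) | set(physical))
    mismatches = {
        lot: (system.get(lot, 0), physical.get(lot, 0))
        for lot in lot_ids
        if system.get(lot, 0) != physical.get(lot, 0)
    }
    if not lot_ids:
        return 100.0, mismatches
    accuracy = 100 * (len(lot_ids) - len(mismatches)) / len(lot_ids)
    return accuracy, mismatches


# Hypothetical lots; LOT-A shows a 24-versus-21 drift.
system_counts = {"LOT-A": 24, "LOT-B": 40, "LOT-C": 12, "LOT-D": 8}
hand_counts = {"LOT-A": 21, "LOT-B": 40, "LOT-C": 12, "LOT-D": 8}
accuracy, mismatches = count_accuracy(system_counts, hand_counts)
```

A lot that appears in only one source counts as a mismatch against zero, so unscanned pallets and phantom receipts both surface.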

When design changes are needed, I often review Custom Packaging Products alongside the dashboard, because the data may be telling you the current carton, insert, or label stock is the wrong tool for the return loop. A guide to KPI tracking packaging returns becomes much stronger when process data and packaging design are reviewed together, not one after the other. That pairing saves a lot of circular debate, especially when the alternative is another meeting with a 17-slide deck and no decision.

Common Mistakes in KPI Tracking Packaging Returns

The biggest mistake is trying to measure everything. A guide to KPI tracking packaging returns should resist the temptation to add 40 fields, because bloated dashboards hide the two numbers that actually explain the loss. I have watched teams build reports with 18 tabs and still miss a 9% damage spike on a single lane. That is not analysis; that is digital clutter.

Inconsistent reason codes cause a different kind of failure. If one site marks a damaged tote as "customer misuse," another as "inbound damage," and a third as "unknown," root-cause analysis is broken before it starts. A guide to KPI tracking packaging returns only works when reason codes are controlled, limited, and trained. I usually cap the list at 8 to 12 codes, then push all free-text notes into one review field. People love free-text because it feels flexible. Finance hates it for exactly the same reason.
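A controlled list is easy to enforce in code. In the sketch below, only "customer misuse," "inbound damage," and "unknown" come from the example above; the other five codes are hypothetical placeholders to show a list inside the 8-to-12 cap. Anything that does not match a code is filed as unknown, with the original wording kept in a single review field.

```python
from enum import Enum


class ReasonCode(Enum):
    CUSTOMER_MISUSE = "customer_misuse"
    INBOUND_DAMAGE = "inbound_damage"
    UNKNOWN = "unknown"
    # Hypothetical placeholders below; replace with your own controlled list.
    TRANSIT_DAMAGE = "transit_damage"
    CONTAMINATION = "contamination"
    LABEL_UNREADABLE = "label_unreadable"
    WRONG_ITEM = "wrong_item"
    END_OF_LIFE = "end_of_life"


def normalize_reason(raw: str) -> tuple[ReasonCode, str]:
    """Map dock input to a controlled code; unmatched text goes to the review note."""
    text = raw.strip()
    key = text.lower().replace(" ", "_")
    try:
        return ReasonCode(key), ""
    except ValueError:
        return ReasonCode.UNKNOWN, text


matched = normalize_reason("Customer Misuse")
unmatched = normalize_reason("forklift clipped the corner")
```

If the unknown share creeps up, the review notes show whether the list needs a new code or the site needs retraining.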

Labor is another blind spot. A return that looks cheap in freight terms may still be expensive once 22 minutes of dock time, 8 minutes of inspection, and 6 minutes of rework are included. That is one reason I push for cost per return rather than freight per return. The guide to KPI tracking packaging returns should expose the full operational burden, not just the carrier invoice. A box is not cheap if it takes three people and a forklift to make it useful again.
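To put rough dollars on those minutes, the sketch below converts them at a loaded labor rate. The 22, 8, and 6 minutes come from the example above; the $30-per-hour rate and the $4.00 freight charge are purely hypothetical and should be replaced with your own figures.

```python
def labor_cost(minutes_by_task: dict[str, float], hourly_rate: float) -> float:
    """Convert handling minutes into dollars at a loaded hourly rate."""
    return sum(minutes_by_task.values()) * hourly_rate / 60


minutes = {"dock": 22, "inspection": 8, "rework": 6}  # from the text
HOURLY_RATE = 30.0  # hypothetical loaded labor rate, dollars per hour
FREIGHT = 4.00      # hypothetical carrier charge for the same return

labor = labor_cost(minutes, HOURLY_RATE)
cost_per_return = FREIGHT + labor
labor_share_pct = 100 * labor / cost_per_return
```

Under those assumed rates, the 36 minutes of handling cost more than four times the freight, which is the blind spot freight-per-return reporting leaves open.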

Stale master data causes quiet damage. SKU codes change. Customer sites get renamed. Lane IDs drift as networks grow. If the dashboard still points to the old data map, the reporting layer starts lying in small, hard-to-notice ways. I have seen one outdated plant code make a 3-month trend look stable when the actual loss rate had climbed from 4.1% to 6.8%. That is the sort of mistake that makes everyone stare at the screen in silence for a long 30 seconds.

Copying one KPI definition across every facility without checking the local process creates another problem. One plant might inspect packaging at the dock, another might move it to a repair cell, and a third might count returns by pallet rather than by unit. A guide to KPI tracking packaging returns should respect those differences or the comparisons will feel unfair and the team will stop trusting the report. Trust, once lost, is expensive to rebuild, especially after a missed month-end close in Chicago or Charlotte.

"If the team argues over the definitions for 20 minutes, the metric is probably too broad," one operations director told me. "We cut ours from 16 KPIs to 6, and suddenly the conversation got useful."

That lesson arrives late for a lot of groups. Simplicity is not laziness. It is the discipline to keep the guide to KPI tracking packaging returns focused on the few measurements that change behavior inside the warehouse, on the truck, and at the finance desk. If a metric does not change a decision, it is just decorative math. The strongest systems are usually the ones with the fewest excuses and the clearest ownership.

Expert Tips and Next Steps for a Guide to KPI Tracking Packaging Returns

The best improvement work starts with exceptions, not averages. A guide to KPI tracking packaging returns should focus the team on outliers, repeat offenders, and lanes that drift outside the normal band. If 92% of your returns are fine and 8% are causing 80% of the pain, the dashboard should make that imbalance obvious by lane, customer, and packaging family. That distribution shows up everywhere once you start looking for it, from a 12-mile local loop to a 700-mile interstate lane.

Segmentation matters more than most people expect. Break the numbers out by SKU, packaging family, customer, plant, carrier, and lane, then compare reuse rate, cost, and cycle time across those slices. In one client meeting, that cut exposed a single customer site where reusable totes were being held an extra 3 days for housekeeping review, which pushed the whole network’s return cycle off schedule. That is the kind of insight a guide to KPI tracking packaging returns should produce, and it is exactly the sort of operational surprise that makes people groan when they see it.

Floor reviews still matter, even with good software. I like a 20-minute weekly huddle with a warehouse lead, a customer service rep, and someone from finance. The people touching the boxes usually know why a pallet came back wet, why the barcode is unreadable, or why the carrier missed the pickup window by 14 hours. A guide to KPI tracking packaging returns becomes much more credible when the dock team can confirm the numbers in real time. If the dock folks roll their eyes at the dashboard, you have work to do.

Automated alerts are worth setting up once the core data is clean. Trigger a note if cycle time rises more than 20%, reuse rate drops below target, damaged packaging exceeds a set threshold, or one lane suddenly doubles its exception count. That kind of alert prevents a 2-day issue from turning into a 2-week backlog. I have seen that alone save a packaging team from a $6,000 month-end surprise, and frankly nobody misses surprise costs.
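The four triggers above translate directly into a small rule check. This is a sketch, not a monitoring system: the metric names, the baseline period, and the target and threshold values are assumptions you would set per lane.

```python
def return_alerts(current: dict, baseline: dict,
                  reuse_target_pct: float, damage_limit_pct: float) -> list[str]:
    """Compare one lane's current metrics to its baseline and list tripped rules."""
    notes = []
    if baseline["cycle_days"] > 0 and current["cycle_days"] > 1.2 * baseline["cycle_days"]:
        notes.append("cycle time up more than 20%")
    if current["reuse_pct"] < reuse_target_pct:
        notes.append("reuse rate below target")
    if current["damage_pct"] > damage_limit_pct:
        notes.append("damage rate above threshold")
    if baseline["exceptions"] > 0 and current["exceptions"] >= 2 * baseline["exceptions"]:
        notes.append("exception count doubled")
    return notes


# Invented lane metrics: cycle time up 25% and exceptions doubled.
baseline = {"cycle_days": 2.0, "exceptions": 10}
current = {"cycle_days": 2.5, "reuse_pct": 88.0, "damage_pct": 3.0, "exceptions": 20}
notes = return_alerts(current, baseline, reuse_target_pct=85.0, damage_limit_pct=5.0)
```

Keeping the rules this plain makes it easy for the dock lead to see why an alert fired, which matters more than clever thresholds in the first few months.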

My practical 30-day rollout looks like this: choose one return stream, define five KPIs, clean the data fields, verify counts by hand, review the first trend line, and then decide whether the problem is process, packaging design, or customer behavior. If the issue is packaging design, bring in a redesign conversation early. If the issue is packaging fit or print durability, compare the current carton or insert against Custom Packaging Products and test a tighter spec before the next run, ideally with a sample lot of 500 pieces rather than a full 10,000-unit order. A guide to KPI tracking packaging returns works best when the next step is concrete.

For teams that handle product packaging and branded packaging as part of the same operation, it helps to think about the return loop during the design stage, not after the complaints start. A stronger closure flap, a better label placement, or a cleaner return instruction panel can shave 1 to 2 days off the cycle and reduce the number of mis-sorted units. That is why a guide to KPI tracking packaging returns should sit beside packaging design decisions, not behind them. I wish more teams learned that before the first wave of damage claims, but, well, warehouses teach patience whether you ask for it or not.

My advice is simple: do not wait for perfect data. Start with a small, honest system, then improve one lane at a time. A guide to KPI tracking packaging returns is most valuable when it helps people see where the money is leaking, where the time is disappearing, and where the packaging itself needs to change. If you can close that loop in 30 days, you will already be ahead of most networks I have visited. And if the first month feels messy, that is normal. The floor is messy before the numbers get honest.

FAQ

How do I start KPI tracking for packaging returns if we only have spreadsheets?

Begin with one return lane or one facility so the spreadsheet stays manageable and the team can validate counts by hand. Track only a few metrics at first: total returns, reusable percentage, cycle time, cost per return, and damage rate. Standardize reason codes and date fields before adding charts, because clean input matters more than fancy reporting in the early phase of a guide to KPI tracking packaging returns. I would rather see a plain spreadsheet that people trust than a polished dashboard nobody believes, especially if the spreadsheet is updated daily at 4:30 p.m.

What KPIs matter most for packaging returns in shipping and logistics?

The most useful starting set is return volume, reuse rate, damage rate, average cycle time, and cost per return. If you manage reusable assets, add recovery rate and loss rate so you can see whether packaging is actually coming back into circulation. If service issues are common, add on-time return receipt and exception rate to catch bottlenecks earlier. That mix gives you both the physical and financial picture, and it works whether the lane runs through Atlanta, Toronto, or Phoenix. A guide to KPI tracking packaging returns should always include both operational and financial signals.

How often should packaging returns KPIs be reviewed?

Review daily or weekly at the facility level when the process is new or unstable, so problems do not sit until month-end. Use a monthly trend review for management, since cost and recovery patterns become clearer over a longer window. Keep the review cadence tied to action: if nobody can respond to the metric, review it less often. A KPI that only gets glanced at is basically wall art, and nobody needs another framed chart in a break room. In a mature returns KPI program, the cadence should match the speed of the decisions.

How do I calculate the true cost of a packaging return?

Add freight, labor, inspection, cleaning, repair, storage, disposal, and any chargebacks or customer credits tied to the return. Separate fixed costs from variable costs so you can see what changes when return volume rises or falls. Compare cost per return by lane or packaging type to identify which flows are profitable and which need redesign. That is the heart of a guide to KPI tracking packaging returns, even if the math makes everyone a little uncomfortable and the final number comes out to $1.36 instead of $0.84.

What are the fastest improvements once KPI tracking for packaging returns is set up?

Fix data quality first by tightening reason codes, return dates, and packaging identifiers, because bad data hides the real issue. Then attack the biggest bottleneck, usually receiving, inspection, or disposition, where cycle time and labor waste are easiest to reduce. Finally, use the dashboard to renegotiate packaging design or return rules where the cost per return is consistently too high, because a guide to KPI tracking packaging returns only pays off when the numbers lead to action. The win is not the dashboard itself. It is the behavior change that follows.